Data Engineering Services

DataPrism offers end-to-end data engineering services: high-performance pipelines, data integration and scalable architectures for business intelligence.

Data Engineering Services We Offer

We bring clarity and control to complex data environments. From real-time ETL pipelines to modern data lakes and cloud migration, our services are tailored to drive agility, security and performance across your data stack.

Data Integration

Unify data from multiple sources and platforms into a single, consistent view. We synchronize systems, APIs and databases to ensure seamless access and consistency across your organization.

Data/ETL Pipelines

Automate the entire data journey — from ingestion to transformation and delivery. We build ETL/ELT pipelines optimized for scale, real-time streaming and efficient orchestration.

Data Warehousing

Create centralized repositories that support fast querying and scalable storage. Our solutions are designed for BI tools, dashboards and high-volume analytics.

Data Cloud Strategies

Leverage the power of cloud platforms like AWS, GCP and Azure with custom strategies that match your infrastructure, budget and growth goals.

Data Migration

Seamlessly migrate legacy systems, databases, or cloud platforms with zero data loss and minimal downtime. We ensure a secure and smooth transition to your new architecture.

Data Lake Implementation

Build modern data lakes to store structured, semi-structured and unstructured data in its raw form — enabling advanced analytics, ML and data discovery.

Data Management

Govern and maintain your data with best-in-class management practices covering accessibility, lineage, compliance and lifecycle optimization.

Technologies We Use for Data Solutions

Programming Languages

Node.js

Python

JavaScript

Data Orchestration

Apache Airflow

Azure Data Factory

Databricks

Dagster

RESTful Services

Postman

REST

SOAP

Requests

Databases

MySQL

SQL Server

PostgreSQL

MongoDB

DynamoDB

SQLite

Redis

Firebase

Data Warehouses

Snowflake

BigQuery

Redshift

Data Visualization

Power BI

Tableau

Looker Studio

Cloud Platforms

AWS

Azure

GCP

Heroku

Data Transformation

AWS Glue

Talend

Apache Kafka

dbt

Security

OAuth

SSL/TLS

Containerization

Docker

Kubernetes

Our Data Engineering Process

We collect data from diverse structured and unstructured sources, including databases, APIs, and files, ensuring a continuous and secure flow into your systems for downstream processing.

Before any transformation begins, we apply rigorous checks to validate data accuracy, completeness, and consistency, preventing issues that could compromise reporting or decision-making later.
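As a rough illustration of the kind of pre-transformation checks described above, the sketch below tests a batch of records for completeness (required fields present and non-empty) and consistency (no duplicate keys). The names `ValidationReport` and `validate_records` and the record layout are hypothetical, chosen for this example only; real projects would tailor the rules to each source.

```python
from dataclasses import dataclass, field


@dataclass
class ValidationReport:
    """Collects the problems found in a batch of records."""
    errors: list[str] = field(default_factory=list)

    @property
    def ok(self) -> bool:
        # A batch passes only when no problems were recorded.
        return not self.errors


def validate_records(records, required_fields, unique_key):
    """Check a list of dicts for missing required fields and duplicate keys."""
    report = ValidationReport()
    seen = set()
    for index, record in enumerate(records):
        # Completeness: every required field must be present and non-empty.
        for name in required_fields:
            if record.get(name) in (None, ""):
                report.errors.append(f"row {index}: missing {name}")
        # Consistency: the key must not repeat within the batch.
        key = record.get(unique_key)
        if key is not None:
            if key in seen:
                report.errors.append(f"row {index}: duplicate {unique_key}={key}")
            seen.add(key)
    return report
```

A pipeline could stop, or route the batch to quarantine, whenever `report.ok` is false, so that bad records never reach the transformation step.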

Using ETL/ELT processes, we clean, enrich, and reformat raw data into a structured, analytics-ready format tailored to your specific business intelligence or machine learning use cases.
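The clean, enrich and reformat steps can be sketched on a single record. The order schema below (`email`, `order_date`, `quantity`, `unit_price`) and the function name `transform_order` are assumptions made for illustration, not a description of any client's data.

```python
from datetime import datetime


def transform_order(raw: dict) -> dict:
    """Clean, enrich, and reformat one raw order record (hypothetical schema)."""
    # Clean: trim stray whitespace and normalize casing.
    email = raw["email"].strip().lower()
    # Reformat: parse a day/month/year string and emit ISO 8601.
    order_date = datetime.strptime(raw["order_date"].strip(), "%d/%m/%Y").date()
    # Reformat: cast numeric strings to numeric types.
    quantity = int(raw["quantity"])
    unit_price = float(raw["unit_price"])
    # Enrich: derive a field that downstream reports need.
    return {
        "email": email,
        "order_date": order_date.isoformat(),
        "quantity": quantity,
        "unit_price": unit_price,
        "total": round(quantity * unit_price, 2),
    }
```

In practice the same logic would usually run over whole batches, in SQL or a dataframe library, but the three steps stay the same.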

Transformed data is securely stored in high-performance storage solutions like data warehouses, lakes, or cloud-native repositories, designed to scale and support real-time or batch querying.

We automate recurring workflows and manage task dependencies using orchestration tools like Airflow or Prefect, ensuring timely, error-free, and fully governed data operations.
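To show what managing task dependencies means, here is a minimal sketch that uses only Python's standard-library `graphlib`, not Airflow or Prefect. The task names mirror the process steps on this page and are hypothetical; an orchestration tool adds scheduling, retries and monitoring on top of this basic ordering idea.

```python
from graphlib import TopologicalSorter

# Hypothetical pipeline: each task maps to the set of tasks it depends on.
DEPENDENCIES = {
    "validate": {"ingest"},
    "transform": {"validate"},
    "store": {"transform"},
    "deliver": {"store"},
}


def run_pipeline(dependencies, actions):
    """Run each task's callable only after all of its dependencies have run."""
    order = list(TopologicalSorter(dependencies).static_order())
    for task in order:
        actions[task]()
    return order
```

`TopologicalSorter` raises `graphlib.CycleError` if tasks depend on each other in a loop, which is the same class of mistake an orchestrator rejects when a workflow is defined.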

The final processed data is delivered through dashboards, APIs, or reporting layers, making it accessible to business users, analysts, and downstream systems in real time or at scheduled intervals.

Success Stories

We’ve partnered with fast-growing startups and global enterprises to design intelligent data ecosystems that power smarter decisions and digital growth.

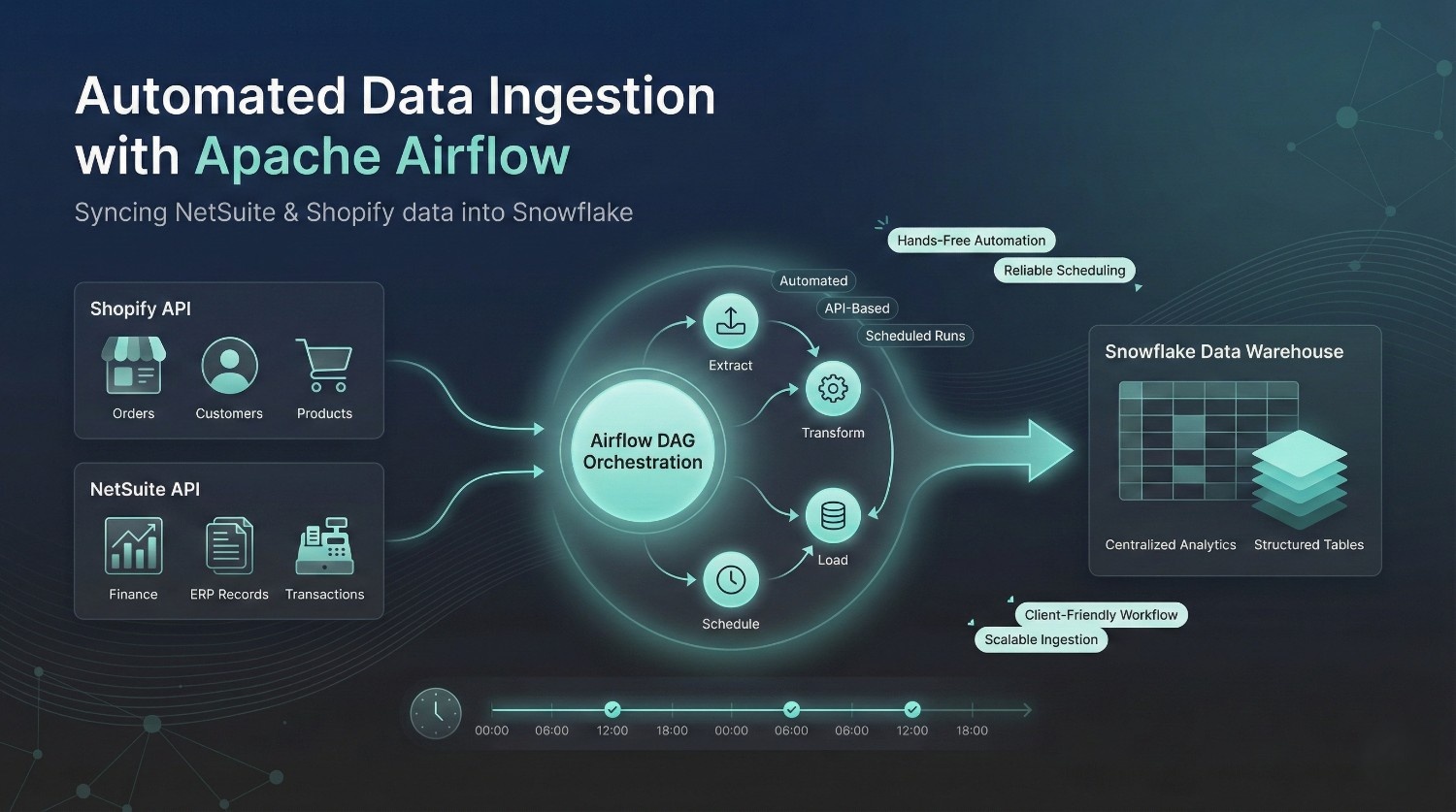

Ingestion of NetSuite and Shopify with Airflow and Snowflake

The client needed an automated way to move NetSuite and Shopify data into Snowflake. DataPrism built a scheduled Airflow pipeline that extracted data through APIs and loaded it into Snowflake efficiently.

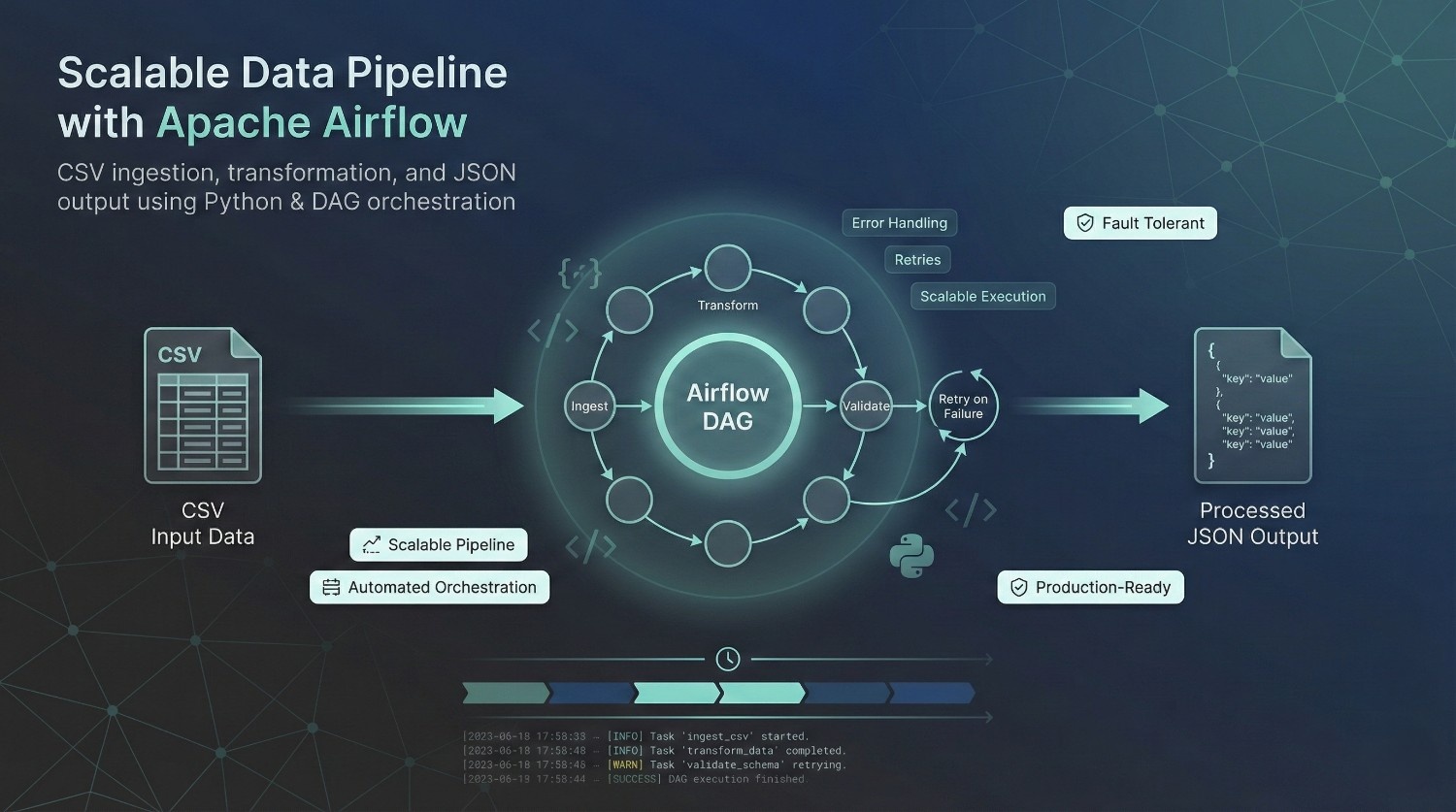

Data Pipeline Using Python and Apache Airflow

The client needed a scalable CSV-to-JSON data pipeline. DataPrism built a Python and Apache Airflow workflow to ingest, process, and transform the data reliably.
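The core CSV-to-JSON step of such a pipeline might look like the sketch below. This is an illustrative example built on Python's standard library, not the code delivered to the client; the function name `csv_to_json` is hypothetical, and the Airflow scheduling around it is omitted.

```python
import csv
import io
import json


def csv_to_json(csv_text: str) -> str:
    """Convert CSV text with a header row into a JSON array of objects."""
    reader = csv.DictReader(io.StringIO(csv_text))
    # Each CSV row becomes one JSON object keyed by the header names.
    rows = [dict(row) for row in reader]
    return json.dumps(rows, indent=2)
```

All values come out as strings because CSV carries no type information; casting to numbers or dates would be a separate transformation step.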

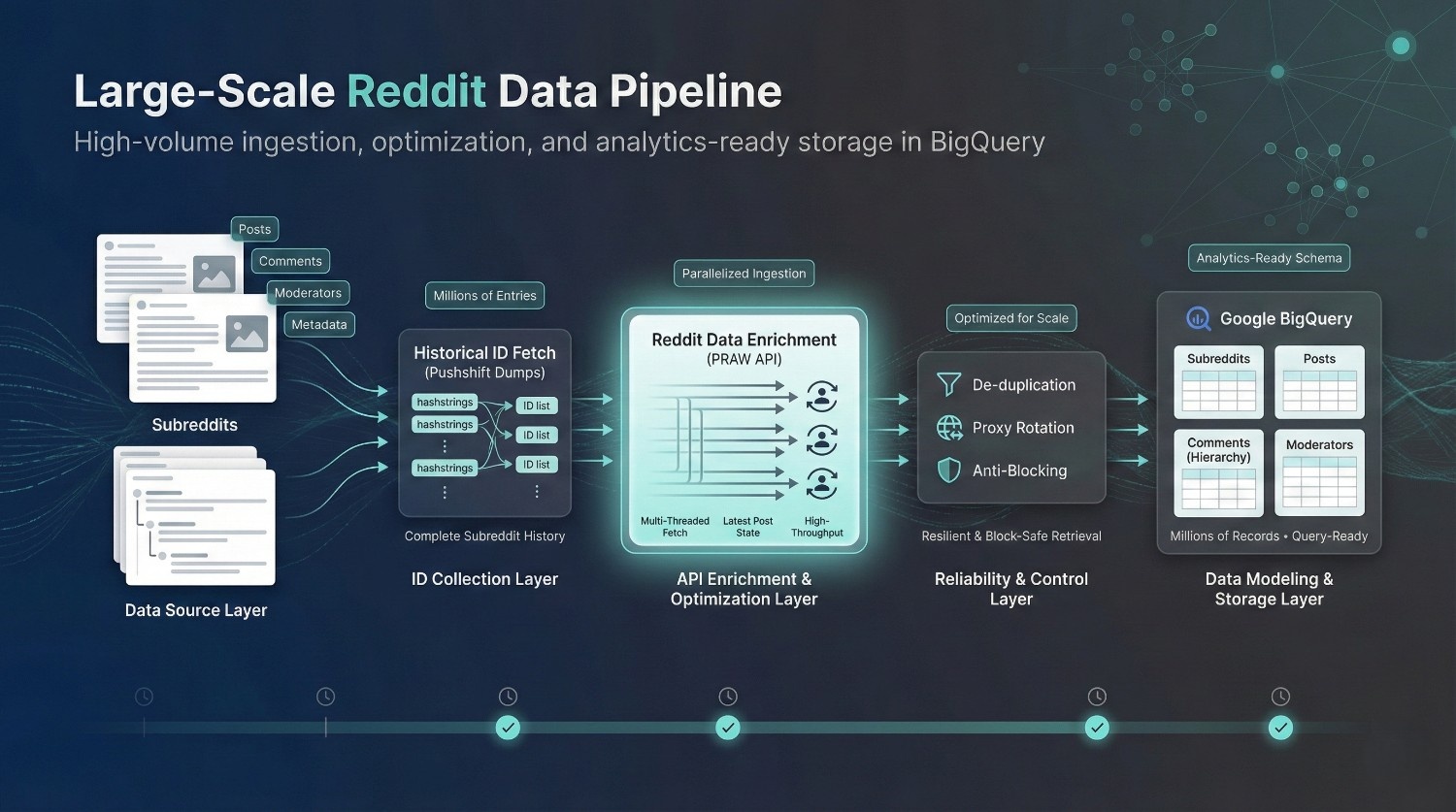

Reddit Data Collector (Boston University)

Boston University needed large-scale Reddit data for a research project. DataPrism built an optimized pipeline to collect, clean, de-duplicate, and store subreddit, post, and moderator data in BigQuery.

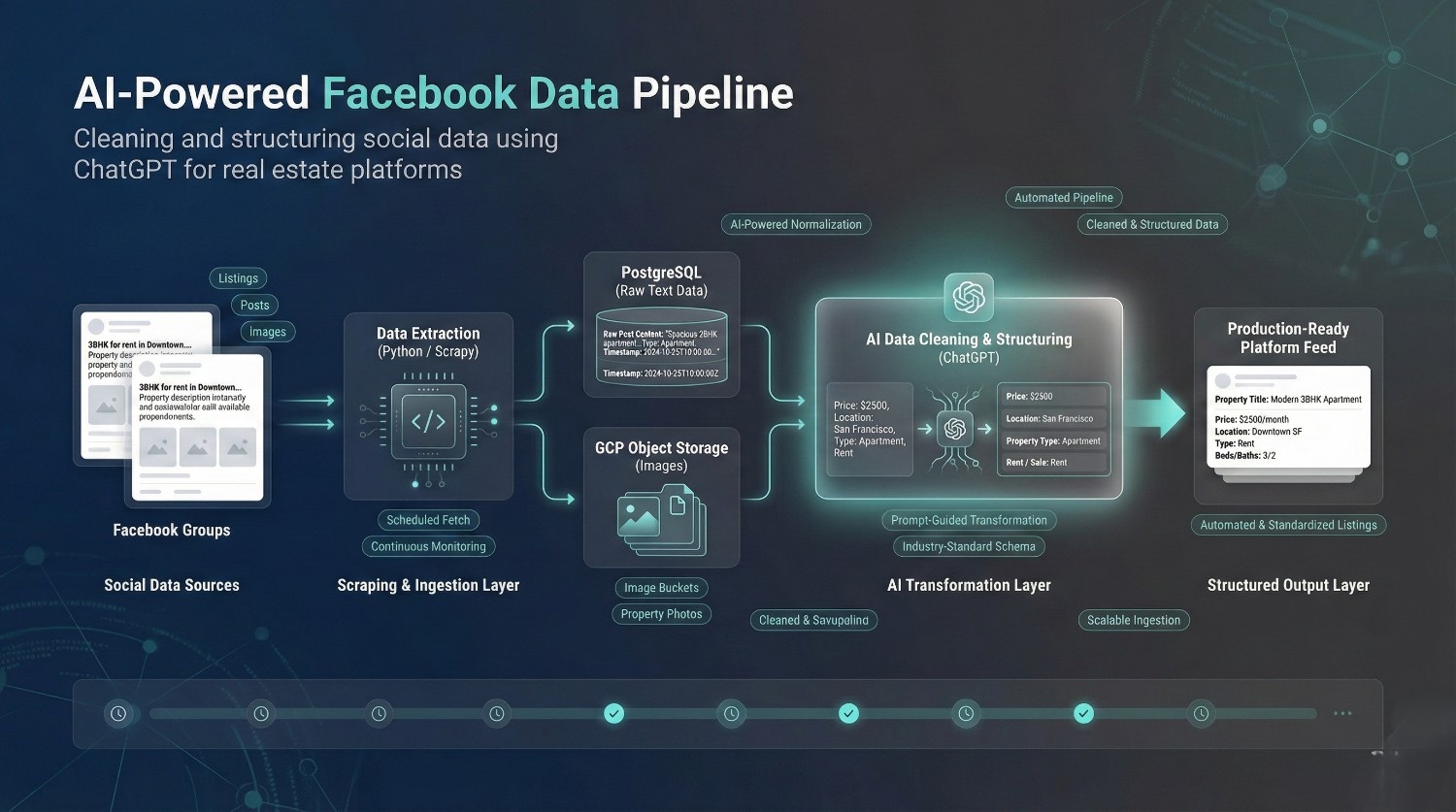

Facebook Data Pipeline Using ChatGPT (Knok’d)

Knok’d needed Facebook group data for its real estate listings platform. DataPrism built a Python and ChatGPT-powered pipeline to extract, clean, transform, and deliver the data in a structured format.

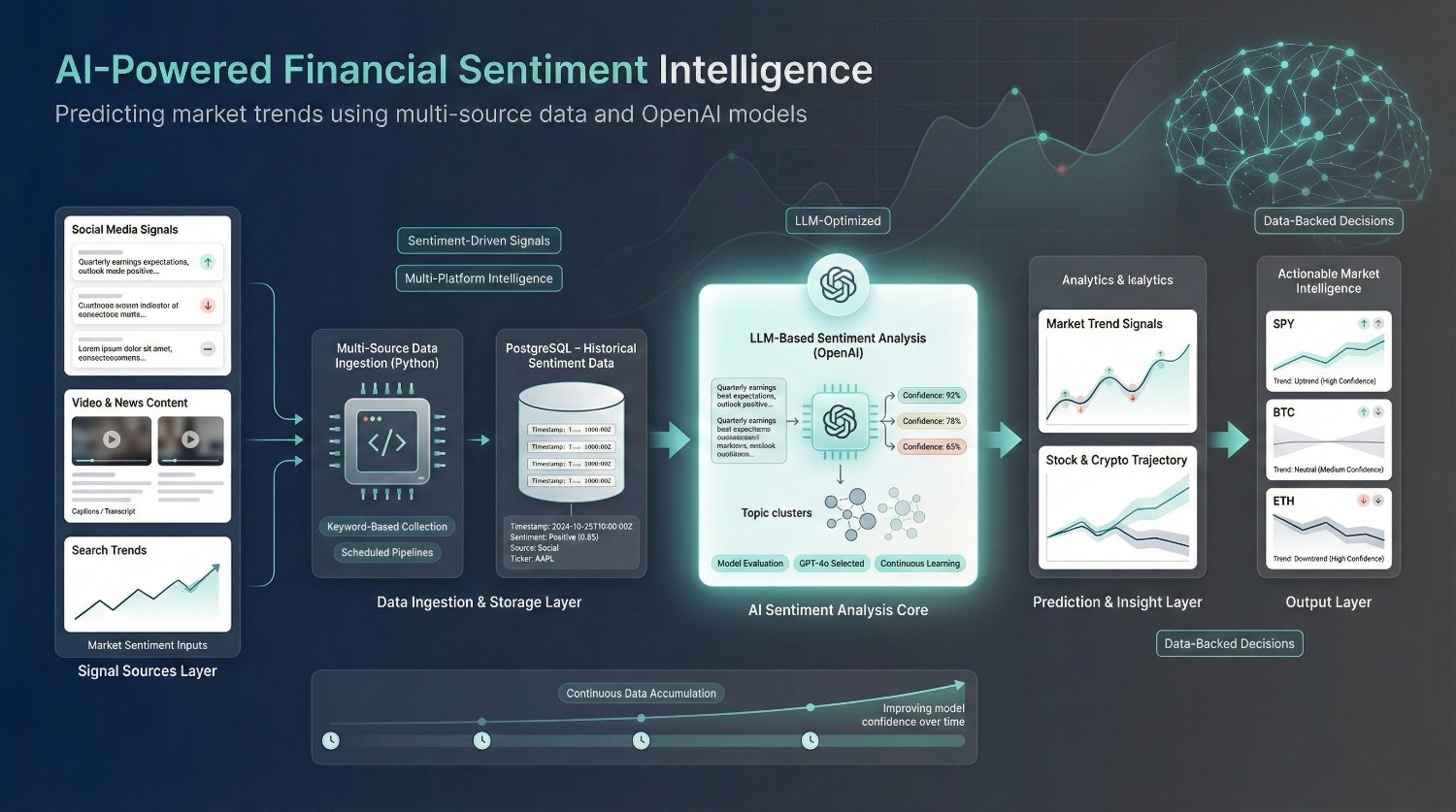

Financial Predictor Using Sentiment Analysis via OpenAI API (Maxx Source)

Maxx Source needed a sentiment analysis system for stocks and cryptocurrencies. DataPrism built a pipeline that gathered multi-platform data and used GPT-powered analysis to predict market trends.