Introduction

The Term Everyone Uses and Nobody Defines

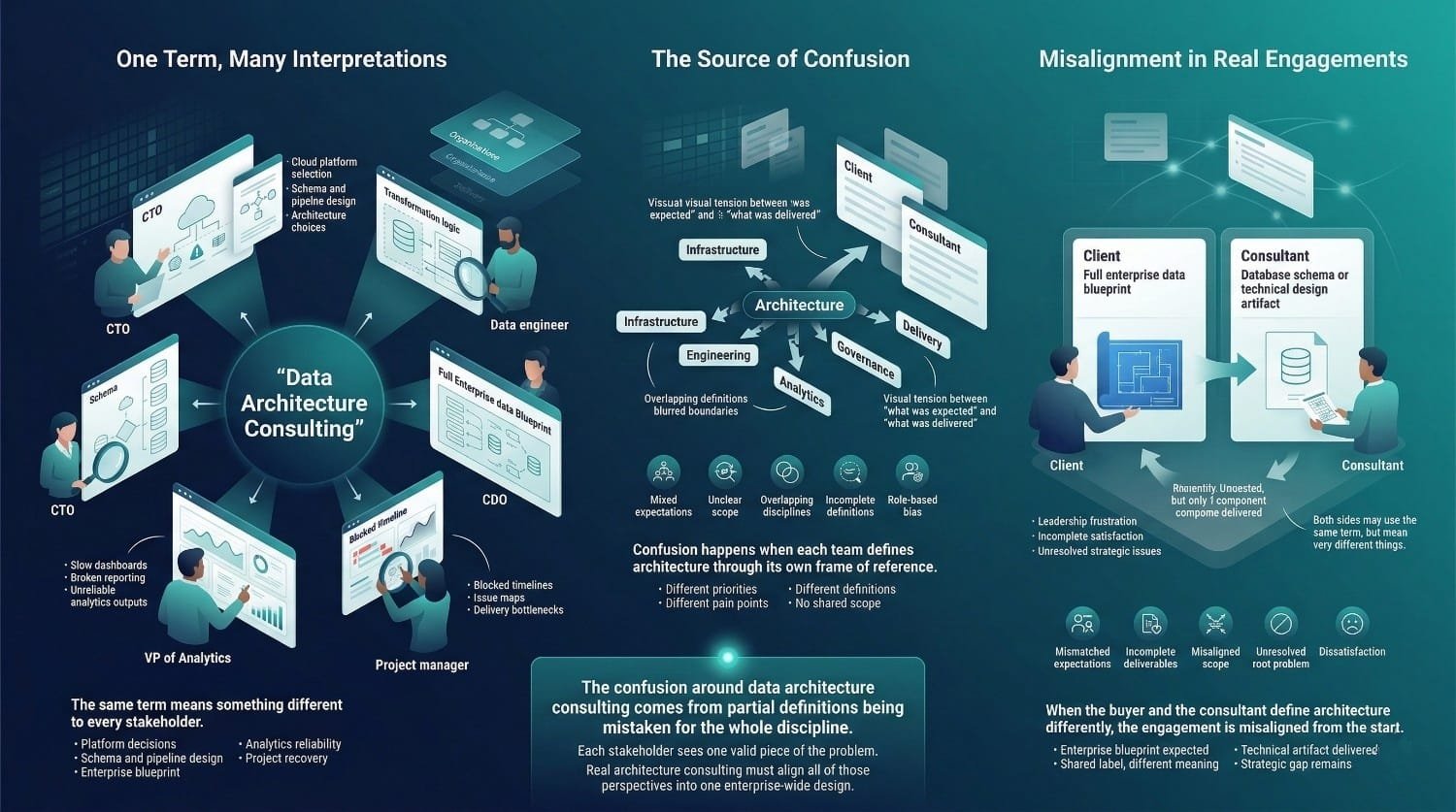

Data architecture consulting is one of those terms that sounds self-explanatory until you ask three people what it means and get four different answers.

Ask a database administrator and they’ll tell you it’s about designing schemas and optimizing storage. Ask a data engineer and they’ll describe pipeline design and tool selection. Ask a cloud consultant and they’ll talk about infrastructure configuration. Ask a vendor and they’ll explain why their platform is the architecture you need.

None of them is entirely wrong. None of them captures the full scope either. The term gets used so loosely, attached to everything from database design to tool implementation to cloud migration, that its actual meaning has been diluted to the point of confusion. And that confusion can have real operational and financial consequences.

Why the Confusion Matters

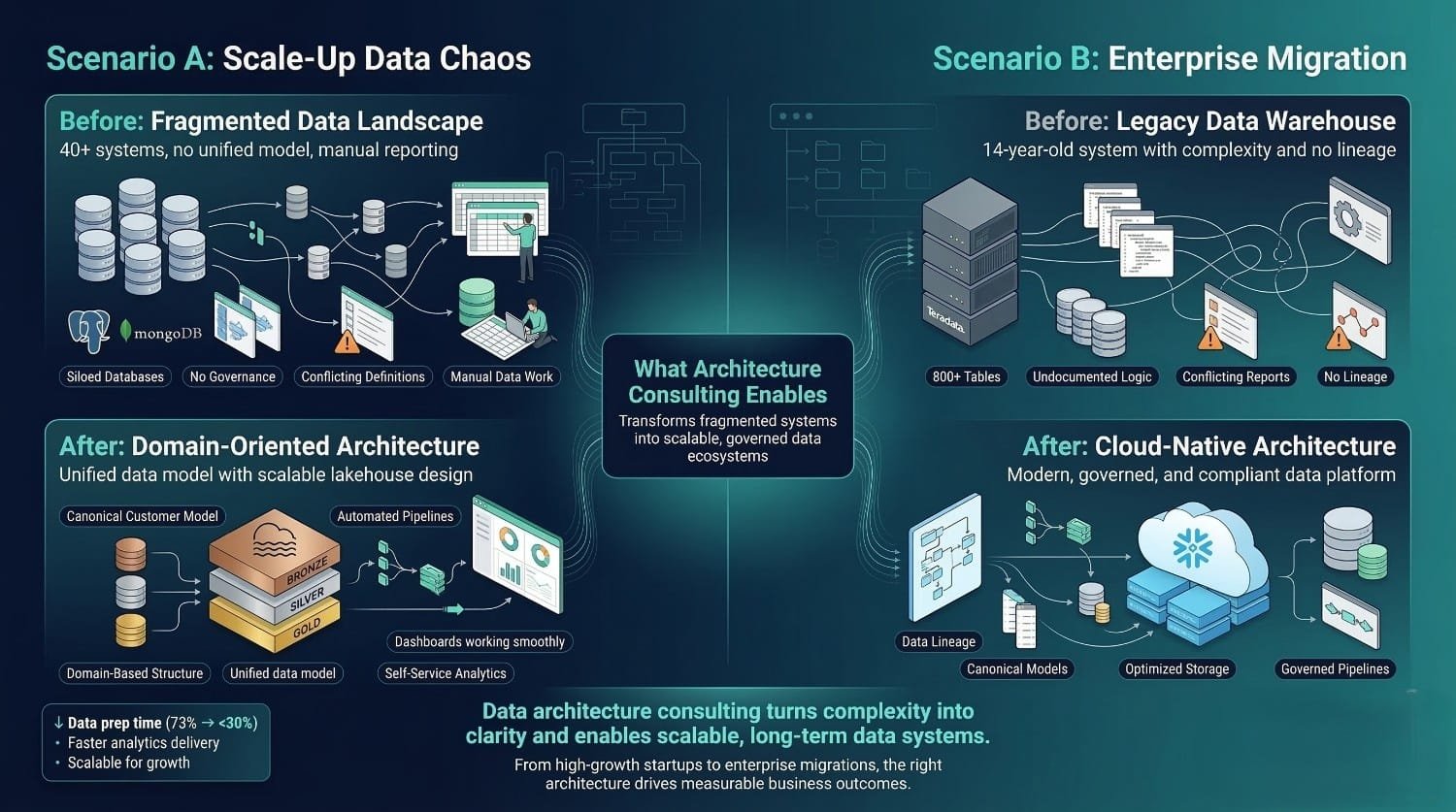

When organizations don’t understand what data architecture consulting actually is, predictable problems follow:

Mismatched expectations. Leadership expects a strategic blueprint for their entire data ecosystem. The consulting partner delivers a database schema and a tool recommendation. Both parties think they delivered or received “architecture consulting.” Neither is satisfied.

Poorly scoped engagements. The organization hires for architecture but scopes for engineering, or hires for engineering but expects architecture. The engagement delivers work that’s technically competent but strategically misaligned. Budget is spent. The fundamental problem remains.

Missed opportunities. The organization has a genuine architecture problem, fragmented systems, no governance, technical debt, scaling failures, but doesn’t recognize it as architectural. They keep buying tools and hiring engineers to solve a problem that exists at a layer neither tools nor engineering can reach.

Wasted investment. The architecture engagement produces beautiful diagrams and comprehensive documents, but they don’t connect to business outcomes, don’t account for organizational reality, and don’t get implemented. Expensive shelf decoration.

Many of these failures can be traced back to a misunderstanding of what data architecture consulting actually involves.

What This Post Is For

This post exists to clear the fog. Not to sell you on architecture consulting. Not to convince you that you need it right now. Simply to give you a precise, honest, plain-language understanding of what this discipline actually is, so that if and when you do engage it, you know exactly what you’re buying, what you should expect, and how to evaluate whether you’re getting the real thing.

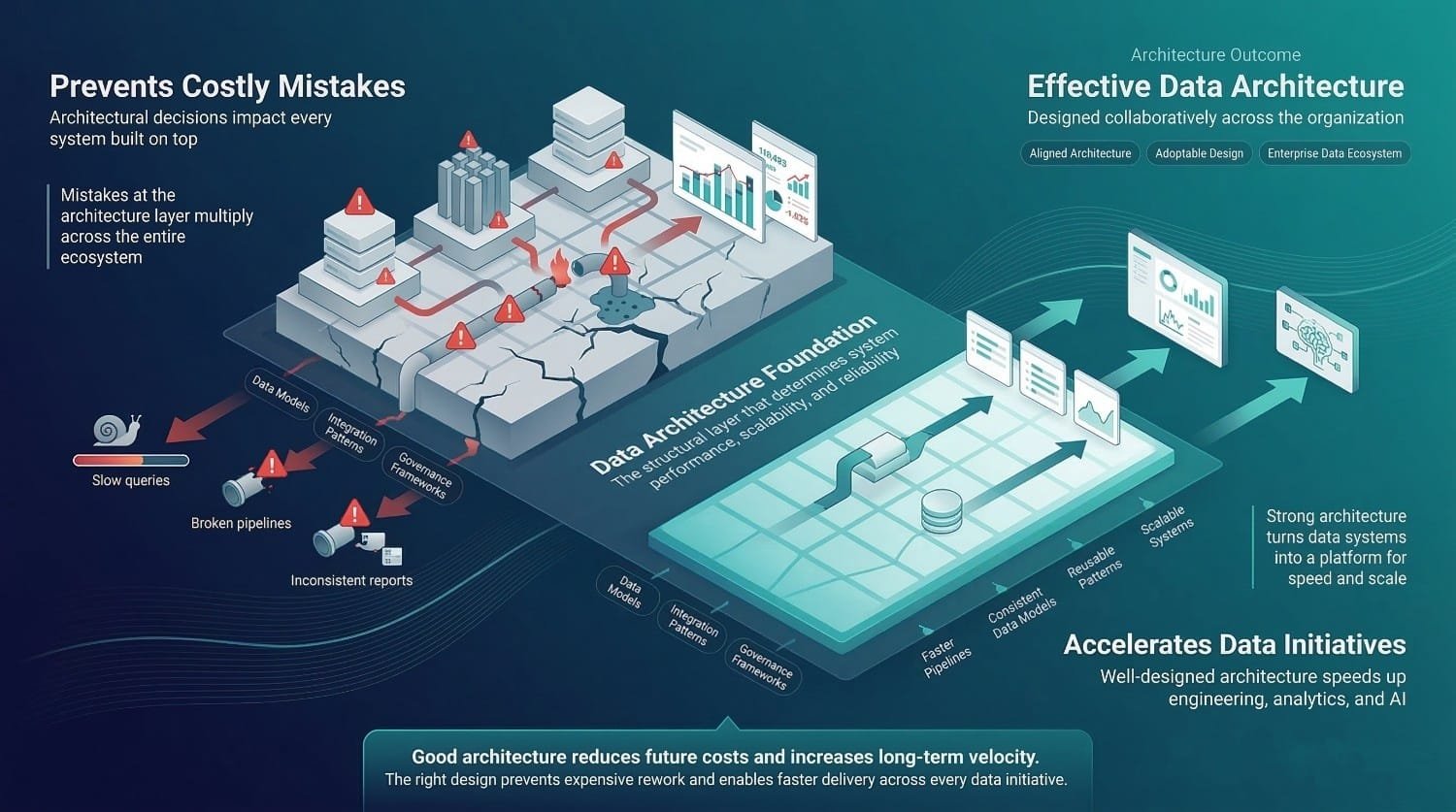

The Stakes

This clarity matters because architecture is one of the highest-leverage decision layers in the data ecosystem. Every other data investment, tools, pipelines, analytics, AI, governance, operates on top of architecture. When the architecture is sound, those investments deliver their full value. When the architecture is flawed, those investments underperform, regardless of how well they’re executed individually.

A perfectly built pipeline running within a poorly designed architecture produces unreliable results. A world-class BI tool querying a badly modeled warehouse produces dashboards nobody trusts. A sophisticated AI model trained on data from a fragmented, ungoverned ecosystem produces predictions that are technically impressive and practically useless.

Architecture is the multiplier. It amplifies everything above it, for better or worse.

The Thesis

Data architecture consulting is a distinct, strategic discipline that goes far beyond technology selection or diagram creation. It’s the practice of designing the complete structural foundation for how an organization’s data is collected, stored, organized, integrated, governed, and consumed, aligned to business outcomes and built to evolve. Understanding what it truly is, and equally, what it isn’t, is essential before investing in it.

What You'll Walk Away With

By the end of this post, you’ll have:

- A clear, demystified definition of what data architecture consulting actually involves, in plain language, not jargon

- A detailed look at every component of a real architecture engagement, from assessment through design through knowledge transfer

- An honest explanation of what it isn’t, the common misconceptions that lead to mismatched expectations

- Understanding of how it creates value, the specific mechanisms through which architecture translates to business impact

- The ability to evaluate whether a consulting partner is delivering genuine architecture work or something else wearing the label

- The confidence to engage data architecture consulting with clear expectations and the ability to hold your partner accountable for delivering real architectural value

Let’s start with what we’re actually talking about.

The Problem

Before defining what data architecture consulting actually is, we need to understand why the confusion exists in the first place. The misunderstanding isn’t accidental, it’s the predictable result of a term that means different things to different people, a market that uses it loosely, and a discipline whose best work is invisible.

The Term Means Different Things to Different People

Ask five stakeholders in the same organization what “data architecture consulting” means and you’ll get five different answers, each shaped by their own role, their own pain points, and their own frame of reference.

The CTO hears: “Help us pick the right cloud platform and design our infrastructure.” Their world is technology decisions. Architecture, to them, means platform selection, Snowflake vs. Databricks, AWS vs. Azure, streaming vs. batch. They want a consultant who can evaluate options and make a recommendation.

The data engineer hears: “Design our database schemas and pipeline patterns.” Their world is implementation. Architecture means the technical blueprints they build against, table structures, indexing strategies, transformation patterns, and naming conventions.

The CDO hears: “Create a blueprint for our entire data ecosystem.” Their world is enterprise data management. Architecture means the complete structural design, every system, every flow, every governance policy, every standard, and how it all serves the business strategy.

The VP of Analytics hears: “Fix whatever is making our dashboards slow and unreliable.” Their world is data consumption. Architecture means whatever structural changes are needed to make analytics actually work.

The project manager hears: “Tell us why our data project is stuck and what to do about it.” Their world is delivery. Architecture means the root cause analysis that explains why things aren’t working and the plan that gets them unstuck.

Every one of these perspectives captures a piece of what data architecture consulting involves. None captures the full enterprise scope. And when an organization engages consulting with one perspective while the consultant operates from another, the engagement risks being misaligned before it begins.

The CDO expects an enterprise blueprint. The consultant delivers a database schema. Both call it “architecture consulting.” Neither is satisfied.

Vendors Relabeling Implementation as Architecture

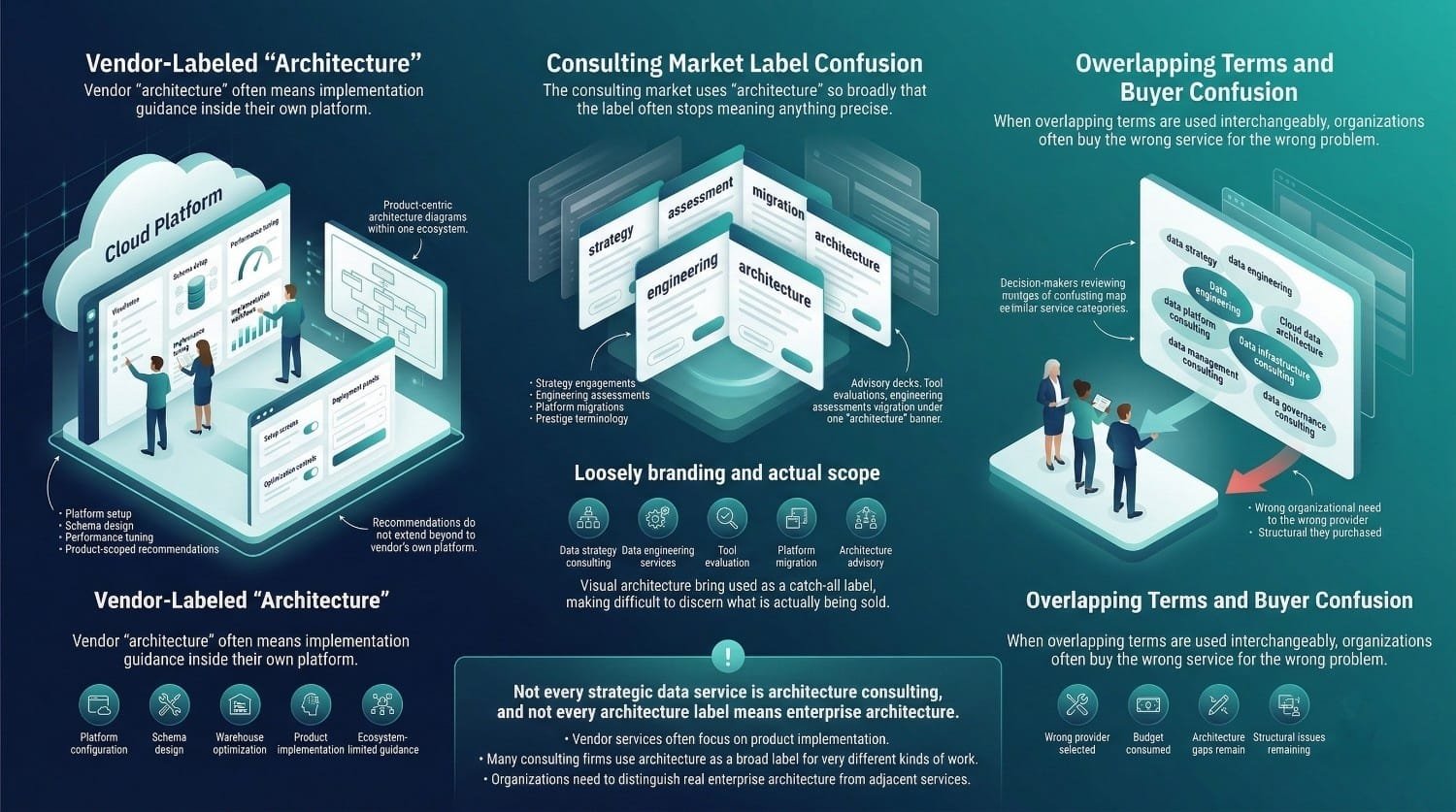

When a cloud platform vendor offers “data architecture consulting,” what they typically provide is guidance on how to implement their platform, how to configure their warehouse, how to design schemas within their tool, how to optimize performance on their infrastructure.

This is valuable work, but it is product implementation wearing an architecture label, not enterprise-wide architecture design. The distinction matters because vendor-provided “architecture” is typically scoped to the vendor’s product. Their recommendations will generally be constrained to their own platform ecosystem. They won’t design the components of your architecture that exist outside that ecosystem. They won’t evaluate whether their tool should be part of your architecture at all.

Consulting Firms Using Architecture as a Catch-All

On the consulting side, “architecture” has become a prestige term attached to almost any strategic data work. Data strategy engagements get called architecture. Data engineering assessments get called architecture. Tool evaluations get called architecture. Platform migrations get called architecture.

Some of these overlap with genuine architecture work. Many don’t. The result is a market where the “architecture consulting” label is applied to engagements ranging from a 2-week tool assessment to a 12-month enterprise design initiative, with no consistency in what the term means, what’s delivered, or what the client should expect.

The Alphabet Soup of Overlapping Terms

The confusion is compounded by a proliferation of adjacent terms that overlap without clear boundaries: data strategy consulting, data engineering services, data platform consulting, cloud data architecture, data infrastructure consulting, data management consulting, data governance consulting.

Each of these describes a real discipline. Each overlaps with architecture in some areas. None is a synonym for architecture. But the market uses them interchangeably, and organizations shopping for help often can’t tell which one they actually need, let alone which one a given provider is actually selling.

The Consequence

Organizations end up buying the wrong services. They engage a cloud infrastructure consultant when they need a data architect. They hire data engineers when they need architectural design. They purchase a vendor’s implementation services expecting an enterprise blueprint. These mismatches can consume budget while leaving structural architectural issues unresolved.

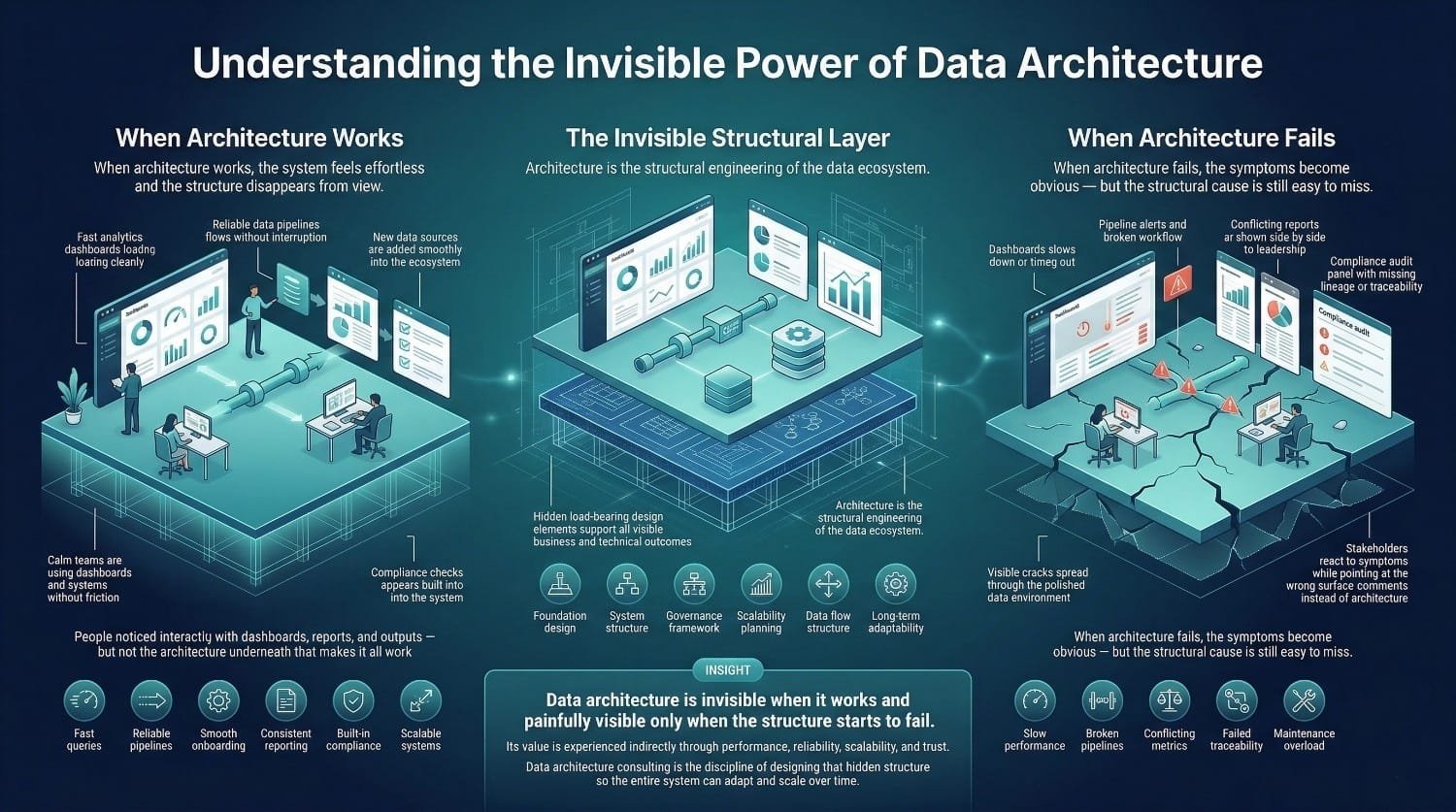

When Architecture Works

Well-designed architecture is often invisible in daily operations. When it’s working:

- Queries are fast, nobody thinks about why

- Pipelines run reliably, nobody notices

- New data sources get onboarded smoothly, it feels easy

- Reports are consistent across departments, it seems obvious

- The system scales with growth, it just works

- Compliance requirements are met, it’s built in

When systems operate smoothly, nobody credits the architecture. People praise the dashboards, the analytics, the AI models, the engineering team. The architecture is invisible, like gravity. You only notice it when it fails.

When Architecture Fails

Bad architecture is equally invisible, until a threshold is crossed. Then it becomes painfully, expensively visible:

- Queries that used to take seconds now take minutes, and nobody can explain why

- Adding a new data source triggers a month-long impact analysis

- Two departments present different numbers to the CEO

- A compliance audit reveals that nobody can trace a regulatory metric back to its source

- The data team spends 80% of its time maintaining existing systems instead of building new capabilities

At this point, the symptoms are obvious. But the root cause, architecture, remains hidden to most stakeholders. They see slow queries and blame the database. They see pipeline failures and blame the engineers. They see conflicting reports and blame the data. The connection between these symptoms and earlier structural design decisions is often overlooked.

Why This Makes Consulting Hard to Buy

It is inherently difficult to justify investment in structural improvements that are not immediately visible. Architecture consulting asks organizations to invest significantly in improving something that’s invisible when it works and misattributed when it fails. That’s a hard sell, especially compared to tools (which have demos), engineers (who write visible code), and analytics platforms (which produce visible dashboards).

The value of architecture is real. But it’s structural value, experienced indirectly through the performance of everything that sits on top of it. Communicating that value requires a different kind of conversation than selling a tool or a deliverable.

The Analogy

Think about the architecture of a building. You never see the structural engineering, the steel beams, the load calculations, the foundation design. You see the rooms, the windows, the finishes. You experience the building through what’s visible.

But the structural engineering determines everything. Whether the building can support another floor. Whether it withstands an earthquake. Whether the walls crack after 5 years or stand for 50. Whether the plumbing and electrical can be upgraded without gutting the walls.

Data architecture is the structural engineering of your data ecosystem. Invisible. Essential. And the difference between a system that adapts and scales for decades, and one that cracks under pressure.

Data architecture consulting is the discipline of getting that structural engineering right. The rest of this post explains exactly what that involves.

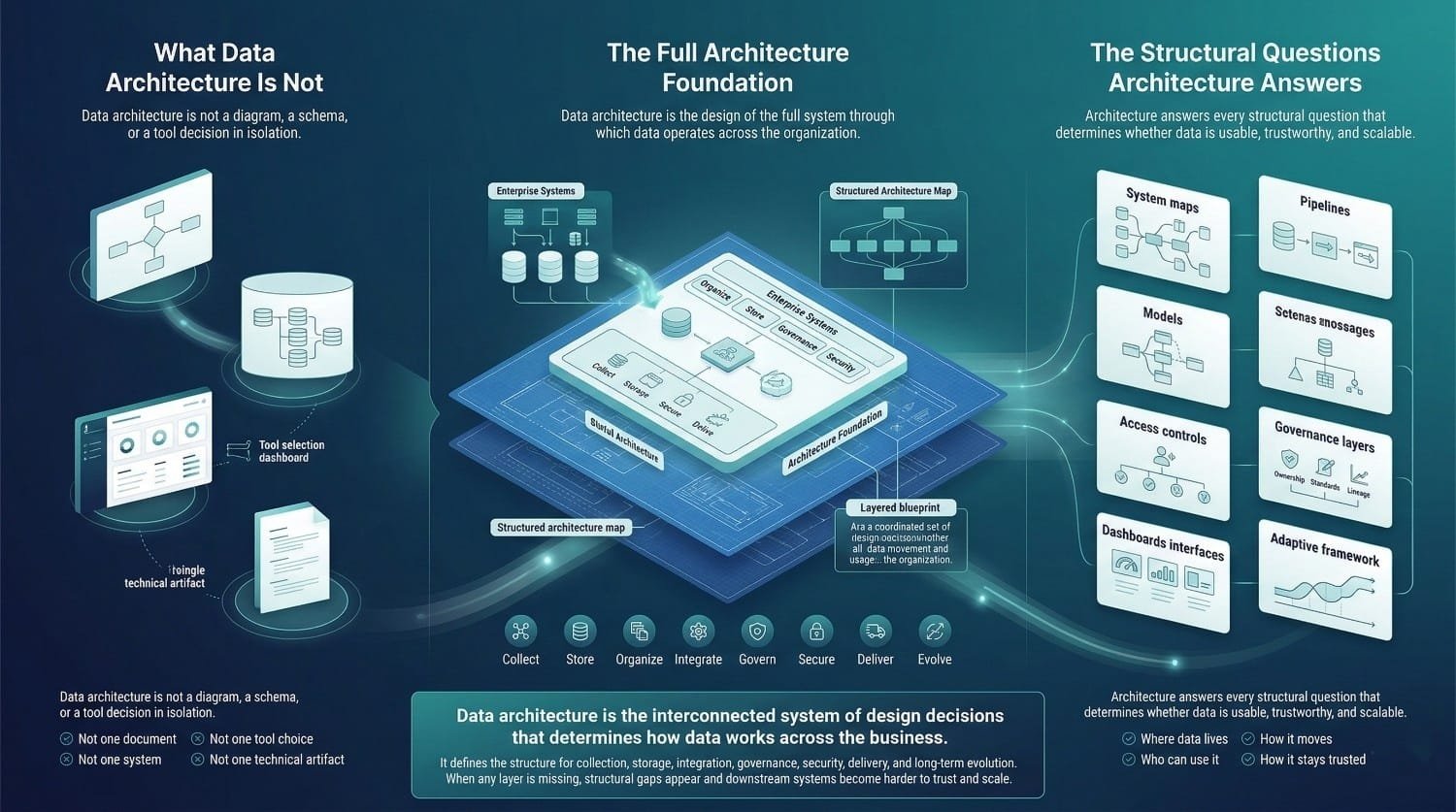

A Clear, Comprehensive Definition

Data architecture is the design of the entire system by which an organization collects, stores, organizes, integrates, manages, secures, and delivers data to enable business outcomes.

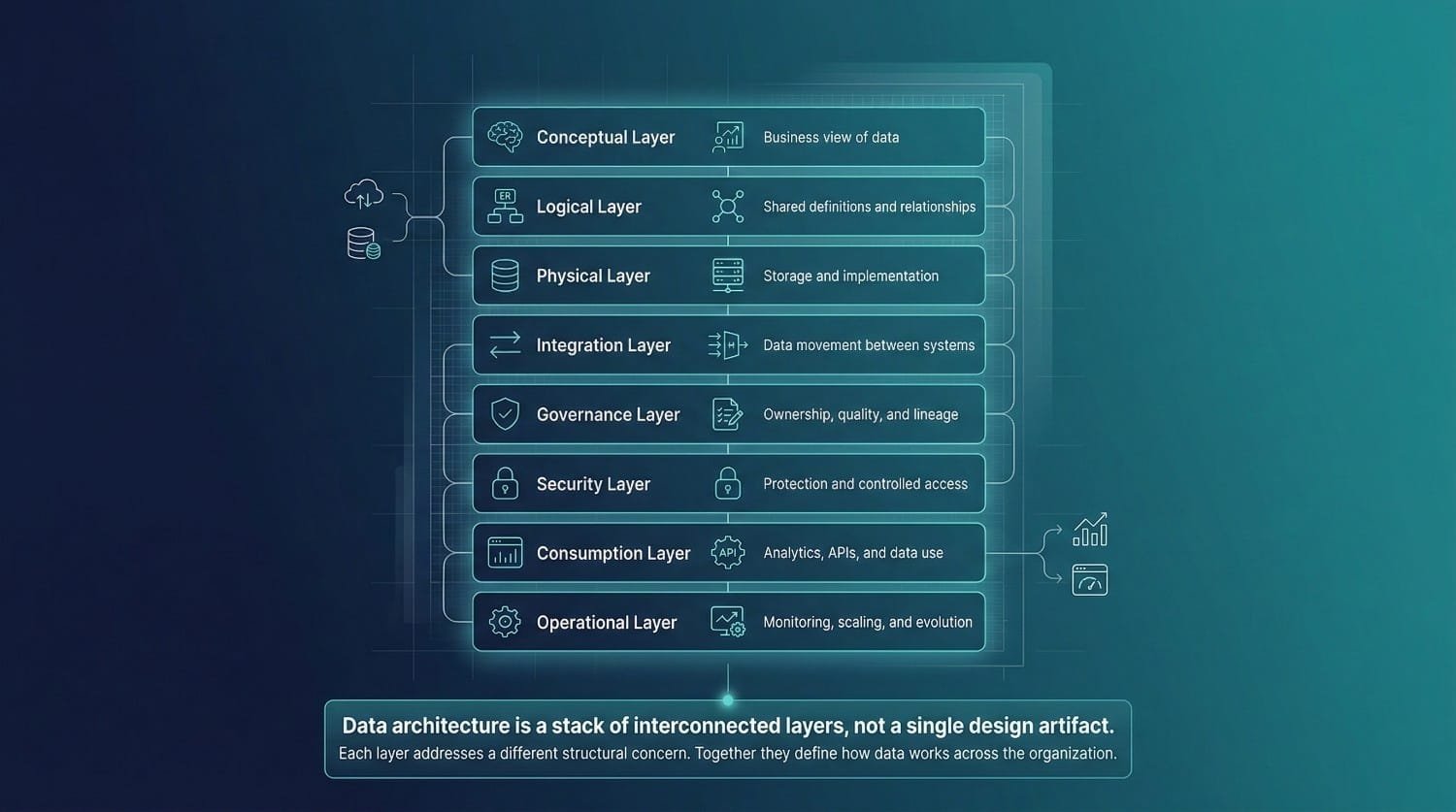

Not a diagram. Not a schema. Not a tool selection. Not a single document. It is a layered and interconnected set of design decisions that together define how data operates across the organization. It answers every structural question about data:

- What data exists, and what data should exist but doesn’t

- Where it lives, which systems store which data, in what format, at what tier

- How it moves, the pipelines, integrations, and interfaces that transport data between systems

- How it’s structured, the models, schemas, and relationships that give data meaning

- Who can access it, the permissions, controls, and policies that govern who sees what

- How it’s governed, the ownership, stewardship, quality standards, and lineage tracking that keep data trustworthy

- How it’s consumed, the tools, interfaces, and products that deliver data to the people and systems that need it

- How it evolves, the principles, standards, and processes that ensure the architecture adapts as the organization changes

Each of these is a design decision. Together, they form the architecture. Omitting any of these elements increases the likelihood of structural gaps that may create downstream issues.

The Conceptual Layer

What it is: The highest-level view of the organization’s data landscape, expressed in business terms, not technical ones.

What it defines:

- The major data domains, customer, product, financial, operational, employee, compliance

- The key business entities within each domain, and the relationships between them

- How data domains map to business functions, which departments own which data, which processes produce and consume which entities

- The scope of the data ecosystem, what’s in, what’s out, what’s planned

Who it’s for: Business leadership, data governance teams, and anyone who needs to understand the organization’s data landscape without technical detail.

Why it matters: The conceptual layer creates shared understanding. When the CEO asks “what data do we have about our customers?” the conceptual model provides the answer, in language they understand, with relationships they can follow. Without it, every conversation about data starts from scratch.

The Logical Layer

What it is: The detailed data model, independent of any specific technology, that defines how data is structured and related.

What it defines:

- Entity definitions, what a “customer,” “order,” “product,” or “transaction” actually is, with precise attributes

- Attributes and data types, every field, its meaning, its format, and its constraints

- Relationships and cardinality, how entities connect (one-to-many, many-to-many) and what those connections mean

- Business rules, the logic that governs how data behaves (a customer can’t have a negative balance, an order must have at least one line item)

- Canonical models, the shared, authoritative definitions that all systems map into

Who it’s for: Data architects, data modelers, and senior engineers who need to translate business concepts into implementable structures.

Why it matters: The logical layer is the connective tissue of the architecture. It’s what makes it possible for different systems, different teams, and different tools to mean the same thing when they say “customer” or “revenue.” Without it, every system defines entities differently, and conflicting definitions are a common root cause of data trust problems.
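
To make the idea of a technology-independent model concrete, here is a minimal sketch in Python that encodes the two business rules mentioned above. The class and field names (`Customer`, `Order`, `LineItem`, `balance`, and so on) are illustrative assumptions, not a prescribed canonical model.

```python
from __future__ import annotations

from dataclasses import dataclass
from decimal import Decimal


@dataclass(frozen=True)
class Customer:
    customer_id: str
    balance: Decimal

    def __post_init__(self) -> None:
        # Business rule from the text: a customer can't have a negative balance.
        if self.balance < 0:
            raise ValueError("customer balance cannot be negative")


@dataclass(frozen=True)
class LineItem:
    sku: str
    quantity: int


@dataclass(frozen=True)
class Order:
    order_id: str
    customer: Customer                 # many orders belong to one customer
    line_items: tuple[LineItem, ...]   # one order has many line items

    def __post_init__(self) -> None:
        # Business rule from the text: an order must have at least one line item.
        if len(self.line_items) == 0:
            raise ValueError("order must have at least one line item")
```

Nothing here refers to a database or a vendor. The same definitions could later be mapped to warehouse tables, API payloads, or validation checks, which is the point of keeping the logical layer separate from the physical one.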

The Physical Layer

What it is: The actual implementation of the logical model, specific to the platforms and technologies in use.

What it defines:

- Database schemas, the actual tables, columns, and indexes in each database or warehouse

- Partitioning strategies, how data is divided for performance optimization

- File formats and storage, Parquet, Delta, Iceberg, JSON, CSV, and which is used where and why

- Storage tiering, hot, warm, and cold storage for different access patterns and cost optimization

- Denormalization decisions, where the physical model deviates from the logical model for performance reasons, and why

Who it’s for: Data engineers and database administrators who build and maintain the actual systems.

Why it matters: The physical layer is where architecture meets reality. A brilliant logical model that’s poorly implemented physically will underperform. The physical layer translates design intent into operational performance.

The Integration Layer

What it is: The design of how data moves between systems, the pipes, the protocols, and the patterns.

What it defines:

- Integration patterns, batch ETL/ELT, streaming, CDC, API-based, event-driven, and which pattern applies where

- Data flow design, source-to-target mappings, transformation sequences, and orchestration

- Interface standards, how systems expose and consume data (APIs, file drops, streaming topics, database connections)

- Integration platform architecture, iPaaS, custom pipelines, cloud-native services, and how they fit together

- Error handling and retry patterns, what happens when integrations fail

Who it’s for: Integration architects, data engineers, and platform teams.

Why it matters: Integration is the circulatory system of the architecture. If data can’t move reliably between systems, nothing else works, regardless of how well-designed the storage and models are.
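
As one illustration of an error handling and retry pattern, the sketch below retries a failing operation with exponential backoff. The function name `call_with_retry` and its default values are assumptions chosen for the example; real integrations would usually also distinguish retryable from non-retryable errors.

```python
import time
from typing import Callable, TypeVar

T = TypeVar("T")


def call_with_retry(
    operation: Callable[[], T],
    max_attempts: int = 3,
    base_delay_seconds: float = 1.0,
    sleep: Callable[[float], None] = time.sleep,
) -> T:
    """Run `operation`, retrying with exponential backoff when it raises."""
    if max_attempts < 1:
        raise ValueError("max_attempts must be at least 1")
    for attempt in range(1, max_attempts + 1):
        try:
            return operation()
        except Exception:
            if attempt == max_attempts:
                raise  # retries exhausted: surface the failure to the caller
            # The delay doubles on each attempt: base, 2 * base, 4 * base, ...
            sleep(base_delay_seconds * 2 ** (attempt - 1))
    raise AssertionError("unreachable")
```

Passing `sleep` as a parameter keeps the function testable without real waiting. The architectural decision is less about this code than about agreeing, once, on which integrations retry, how many times, and what happens when retries run out.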

The Governance Layer

What it is: The policies, roles, and mechanisms that ensure data remains trustworthy, compliant, and well-managed.

What it defines:

- Data ownership model, who is accountable for each data domain

- Data stewardship, who handles day-to-day quality, issue resolution, and change management

- Data quality standards, the rules that define acceptable quality for each dataset, with monitoring and alerting

- Data lineage, end-to-end tracking of where data came from and every transformation applied to it

- Data catalog and metadata, how data is documented, discovered, and understood across the organization

- Policy framework, retention, classification, sharing, and lifecycle management

Who it’s for: Data governance teams, compliance officers, and data stewards.

Why it matters: Architecture without governance can produce data that is structurally organized but insufficiently controlled or trusted. Governance without architecture has no structural foundation to enforce policies. They’re inseparable, and the governance layer is where data architecture consulting often delivers its most lasting value.

The Security Layer

What it is: The design of how data is protected, from unauthorized access, from breaches, and from non-compliance.

What it defines:

- Data classification, PII, PHI, financial, confidential, public, with handling rules for each class

- Encryption architecture, at rest and in transit, with key management policies

- Access control design, role-based, attribute-based, row-level, and column-level permissions

- Data masking and anonymization, for non-production environments, analytics, and external sharing

- Audit trails, who accessed what data, when, and through what interface

Who it’s for: Security teams, compliance officers, and platform administrators.

Why it matters: Security retrofitted into a poorly designed architecture is expensive, incomplete, and fragile. Security designed into the architecture from the start is comprehensive, enforceable, and maintainable. The security layer determines whether the organization can meet regulatory requirements by design rather than by heroic effort.
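
A small sketch of what "by design" can look like: handling rules are attached to data classes rather than to individual columns, and masking follows from the classification. The classes, column names, and masking choices below are hypothetical, and an unsalted hash is shown only for simplicity; it is not a substitute for proper anonymization.

```python
from __future__ import annotations

import hashlib
from enum import Enum


class DataClass(Enum):
    PUBLIC = "public"
    CONFIDENTIAL = "confidential"
    PII = "pii"


# Hypothetical classification of columns in a customer table.
COLUMN_CLASSES = {
    "customer_id": DataClass.CONFIDENTIAL,
    "email": DataClass.PII,
    "country": DataClass.PUBLIC,
}


def mask_value(value: str, data_class: DataClass) -> str:
    """Apply the handling rule for a data class to a single value."""
    if data_class is DataClass.PUBLIC:
        return value
    if data_class is DataClass.PII:
        return "***"
    # CONFIDENTIAL: a stable hash hides the value but still allows joins.
    return hashlib.sha256(value.encode("utf-8")).hexdigest()[:12]


def mask_row(row: dict[str, str]) -> dict[str, str]:
    """Mask a row for non-production use; unclassified columns fail closed."""
    return {
        column: mask_value(value, COLUMN_CLASSES.get(column, DataClass.PII))
        for column, value in row.items()
    }
```

Treating unclassified columns as PII is a deliberate design choice in this sketch: a new column stays hidden until someone classifies it, rather than leaking by default.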

The Consumption Layer

What it is: How data reaches the people and systems that need it, the last mile of the architecture.

What it defines:

- BI and analytics interfaces, which tools, how they connect, what semantic layers translate raw data into business metrics

- Self-service access, how business users find, understand, and query data without engineering support

- API and data product interfaces, how applications and external consumers access data programmatically

- AI/ML serving infrastructure, feature stores, model serving, and the pipeline from raw data to model-ready features

- Real-time vs. batch delivery, which consumers need real-time data and which can work with periodic refreshes

Who it’s for: Analysts, data scientists, application developers, and business users.

Why it matters: Architecture that is well designed but poorly adopted delivers limited business value. The consumption layer is where architecture translates into outcomes, decisions made, insights generated, models deployed, products delivered. If this layer is missing or poorly designed, the rest of the architecture risks becoming infrastructure with reduced measurable impact.

The Operational Layer

What it is: How the architecture is monitored, maintained, scaled, and evolved over time.

What it defines:

- Monitoring and observability, pipeline health, data freshness, quality scores, performance metrics, and cost tracking

- Alerting and incident response, what triggers alerts, who responds, and what the escalation path is

- Scaling strategy, how the architecture handles growth in data volume, user count, and query complexity

- Change management, how changes to the architecture are proposed, reviewed, approved, and documented

- Maintenance and evolution planning, how the architecture adapts to new requirements, new technologies, and new business conditions

Who it’s for: Data operations, platform engineering, and architecture owners.

Why it matters: Architecture that’s designed but not operationalized gradually degrades in effectiveness. The operational layer sustains architectural health after the consulting engagement concludes, monitoring for degradation, responding to issues, and evolving the design as the organization changes.
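
Data freshness monitoring, one of the items listed above, can be sketched as a comparison between each dataset's last load time and an agreed maximum age. The dataset names and thresholds in `FRESHNESS_SLA` are invented for illustration.

```python
from datetime import datetime, timedelta, timezone
from typing import Dict, List, Optional

# Hypothetical freshness targets per dataset.
FRESHNESS_SLA: Dict[str, timedelta] = {
    "orders": timedelta(hours=1),
    "finance_monthly": timedelta(days=31),
}


def stale_datasets(
    last_loaded: Dict[str, datetime],
    now: Optional[datetime] = None,
) -> List[str]:
    """Return datasets whose last load is missing or older than their target."""
    now = now or datetime.now(timezone.utc)
    stale = []
    for name, max_age in FRESHNESS_SLA.items():
        loaded_at = last_loaded.get(name)
        # A dataset with no recorded load is treated as stale.
        if loaded_at is None or now - loaded_at > max_age:
            stale.append(name)
    return sorted(stale)
```

In practice the result would feed whatever alerting path the operational layer defines; the sketch only shows that the freshness target is an explicit, reviewable part of the design.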

Not a Deliverable, a Discipline

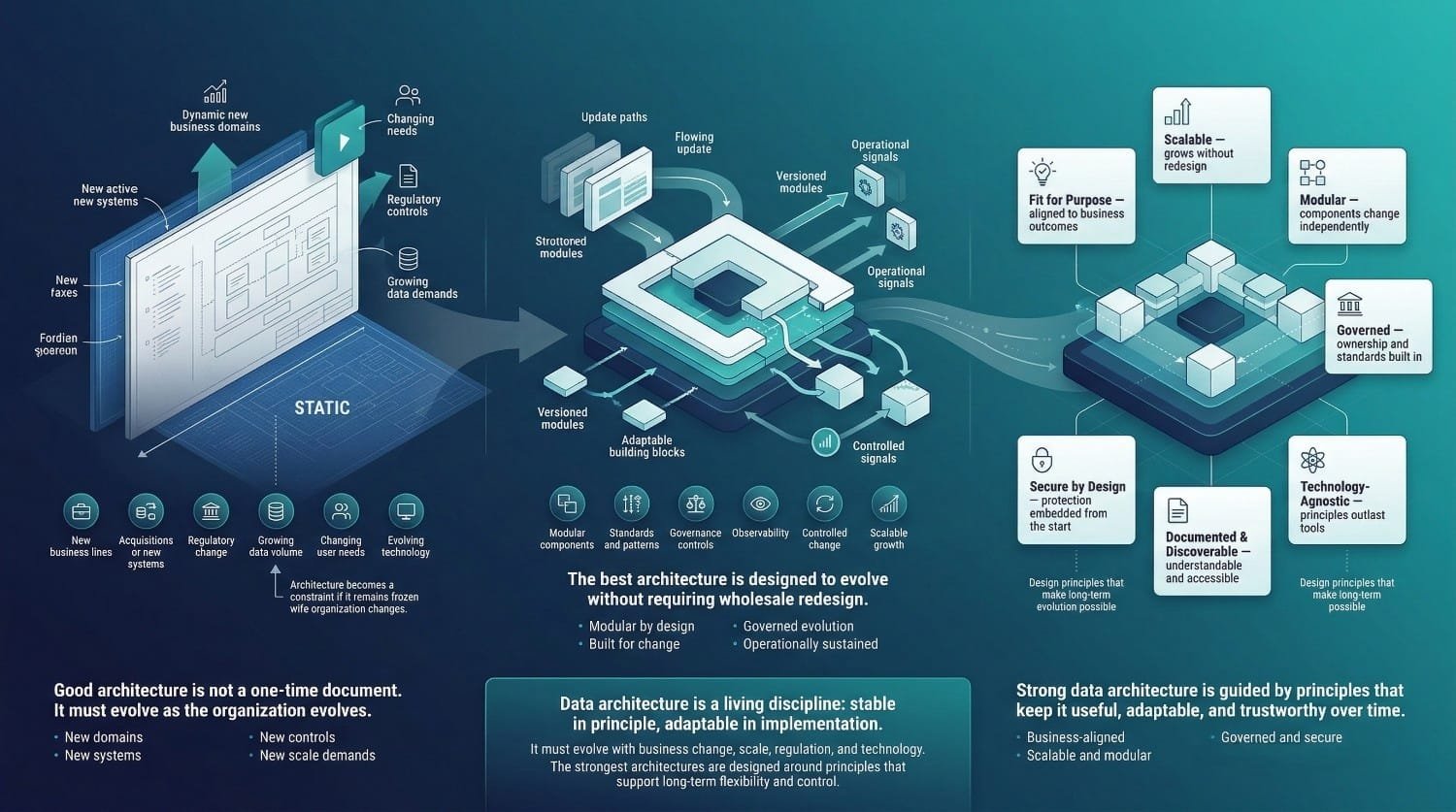

The biggest misconception about data architecture is that it’s a one-time deliverable, a document produced, approved, and filed. Architecture is living. It changes because the organization changes:

- New business lines create new data domains

- Acquisitions bring new systems that need to be integrated

- Regulations change, requiring new governance and security controls

- Technology evolves, creating better options for storage, processing, and consumption

- Data volumes grow, stressing patterns that worked at smaller scale

- User needs shift, requiring new consumption interfaces and access patterns

Architecture that doesn’t evolve with these changes becomes a constraint instead of an enabler. Within 12–24 months, a static architecture document may no longer reflect the systems actually in operation.

Evolutionary Architecture

The best architectures are designed for evolution, built on principles and patterns that accommodate change without requiring wholesale redesign.

This means modularity, components that can be modified independently without cascading impacts. It means standards that provide consistency without rigidity. It means governance that adapts to new requirements. And it means operational practices that detect when the architecture is drifting from its design, and mechanisms to correct course.

Data architecture consulting aims to design an architecture that remains adaptable over time: today’s architecture should be able to become tomorrow’s architecture through deliberate, managed evolution, not through the chaotic accumulation of ad hoc changes.

Key Principles of Good Data Architecture

Regardless of paradigm, technology, or scale, these principles separate architecture that delivers lasting value from architecture that creates lasting problems.

Fit for Purpose

The architecture serves the business, not the other way around. Every design decision connects to a business requirement. If the business needs real-time customer analytics, the architecture supports real-time. If the business needs monthly financial reporting, the architecture doesn’t over-engineer for real-time where batch suffices. Elegance matters less than effectiveness. The most effective architecture for an organization enables its specific outcomes while maintaining appropriate complexity.

Scalable

The architecture should accommodate significant growth without requiring fundamental redesign. Not by over-engineering for scale today, but by choosing patterns and technologies that scale naturally, and by avoiding design decisions that create hard ceilings. If moderate growth requires redesigning core structural components, the architecture is not scalable; it is merely adequately sized for the current moment.

Modular

Components can be changed, replaced, or upgraded independently. Core components should be replaceable with limited downstream impact. The BI tool can be replaced without redesigning the data model. A new integration platform can be adopted without rearchitecting the data flows. Modularity is what makes evolution possible without disruption.

Governed

Ownership, standards, and policies are embedded in the design, not layered on after the fact. Every data domain has an owner. Every entity has a definition. Every quality threshold has monitoring. Every access path has controls. Governance should be embedded within architectural design rather than treated as a separate initiative.

Secure by Design

Security and compliance are architectural concerns addressed at design time, not afterthoughts bolted on before an audit. Data classification, encryption, access controls, and audit trails are part of the architecture itself. Security controls should be enforced systematically through architectural design rather than relying solely on manual implementation.

Documented and Discoverable

The architecture is understandable by the people who need to work within it. Documentation is current, accessible, and written for its audience. Documentation should enable new team members to understand the architecture efficiently. Business stakeholders can find and understand the data they need without filing a ticket with engineering.

Technology-Agnostic Where Possible

Principles and patterns outlast specific tools. The architecture defines what needs to happen, store this data, transform it this way, serve it to these consumers, govern it by these rules. The technology is chosen to implement those requirements. When better technology becomes available, the architecture adapts, because the design is defined by requirements, not by vendor capabilities.

This is the last principle and perhaps the most important one for data architecture consulting: an architecture tightly coupled to a single tool may have a shorter effective lifespan. An architecture designed around principles is an architecture that evolves with the organization.

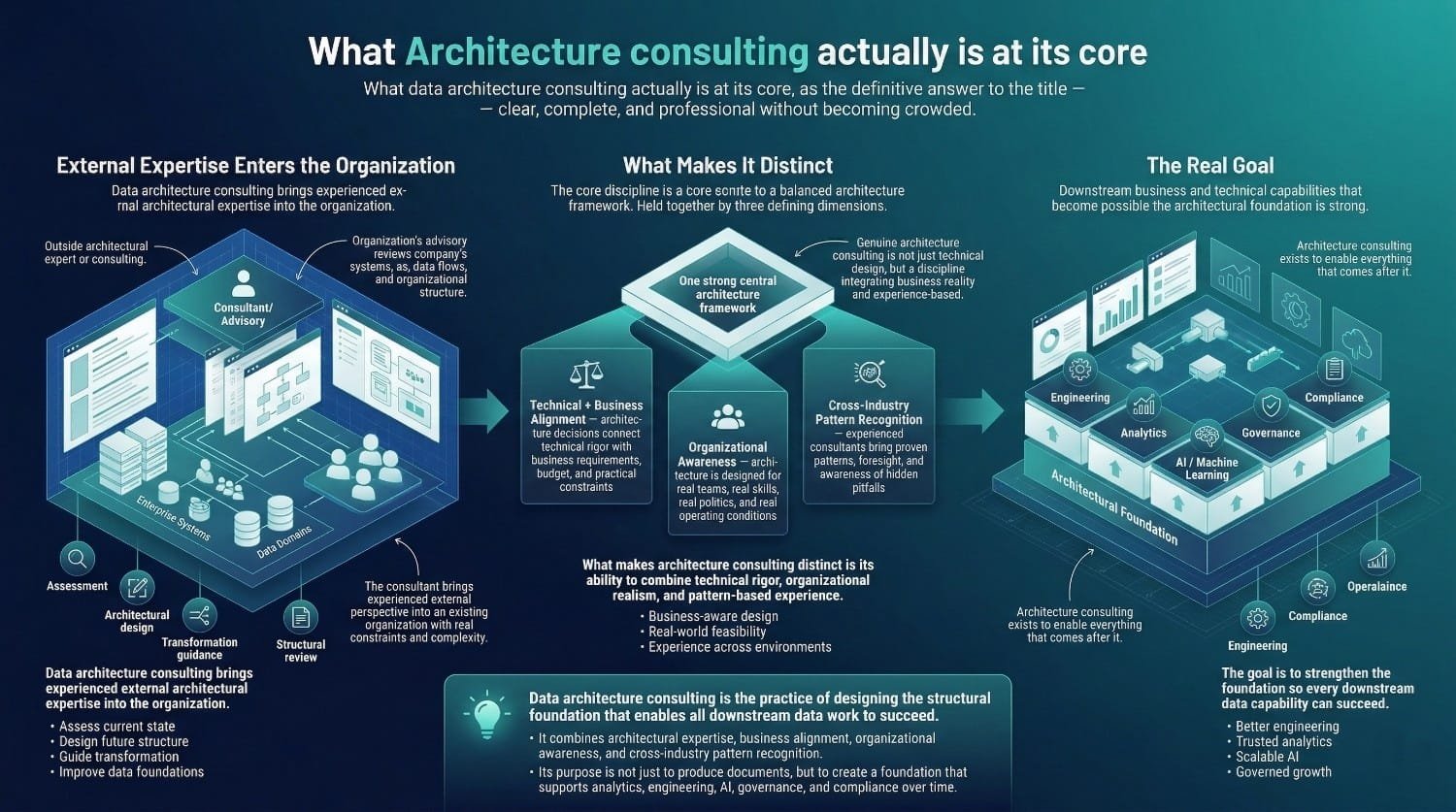

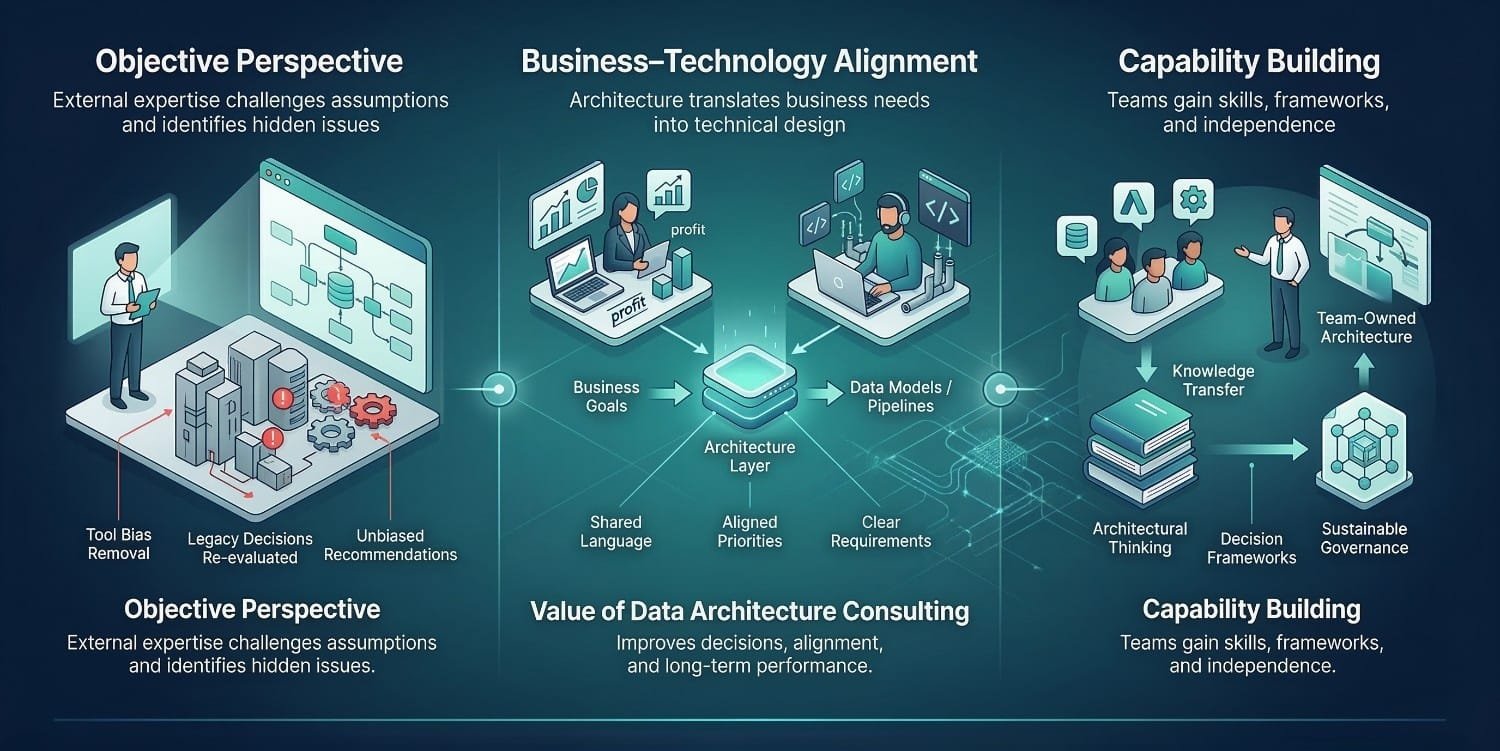

The Core Definition

Data architecture consulting is the practice of bringing experienced, external architectural expertise into an organization to assess, design, guide, or transform how the organization structures and manages its data.

Three things make it distinct from other types of data consulting:

It combines deep technical knowledge with business acumen. Architecture isn’t just a technical exercise. Every design decision connects to a business requirement, a budget constraint, or an organizational reality. The consultant who designs a technically brilliant architecture that ignores the organization’s actual capabilities, culture, and goals has designed something that won’t get implemented. Genuine architecture consulting holds both dimensions simultaneously, technical rigor and business alignment.

It requires organizational awareness. Data architecture doesn’t exist in a vacuum. It lives inside an organization with departments that have competing priorities, teams with different skill levels, leaders with different visions, and a history of decisions that can’t be undone overnight. Architecture consulting navigates this landscape, not just designing what’s technically optimal, but designing what’s achievable within the organization’s real context.

It depends on cross-industry pattern recognition. The most valuable asset a data architecture consulting partner brings isn’t any single technical skill. It’s the accumulated experience of designing architectures across dozens of organizations with different scales, industries, and challenges. That experience creates pattern recognition, the ability to see where your situation fits within patterns they’ve seen before, which approaches work and which don’t, and where the hidden pitfalls are that your team wouldn’t encounter until it’s too late.

The Goal

The goal is not limited to producing documents or selecting tools. It isn’t even to design a beautiful architecture. The goal is to create or improve the architectural foundation so that all downstream data activities, engineering, analytics, AI, governance, compliance, can succeed. The architecture functions as an enabler for downstream data capabilities.

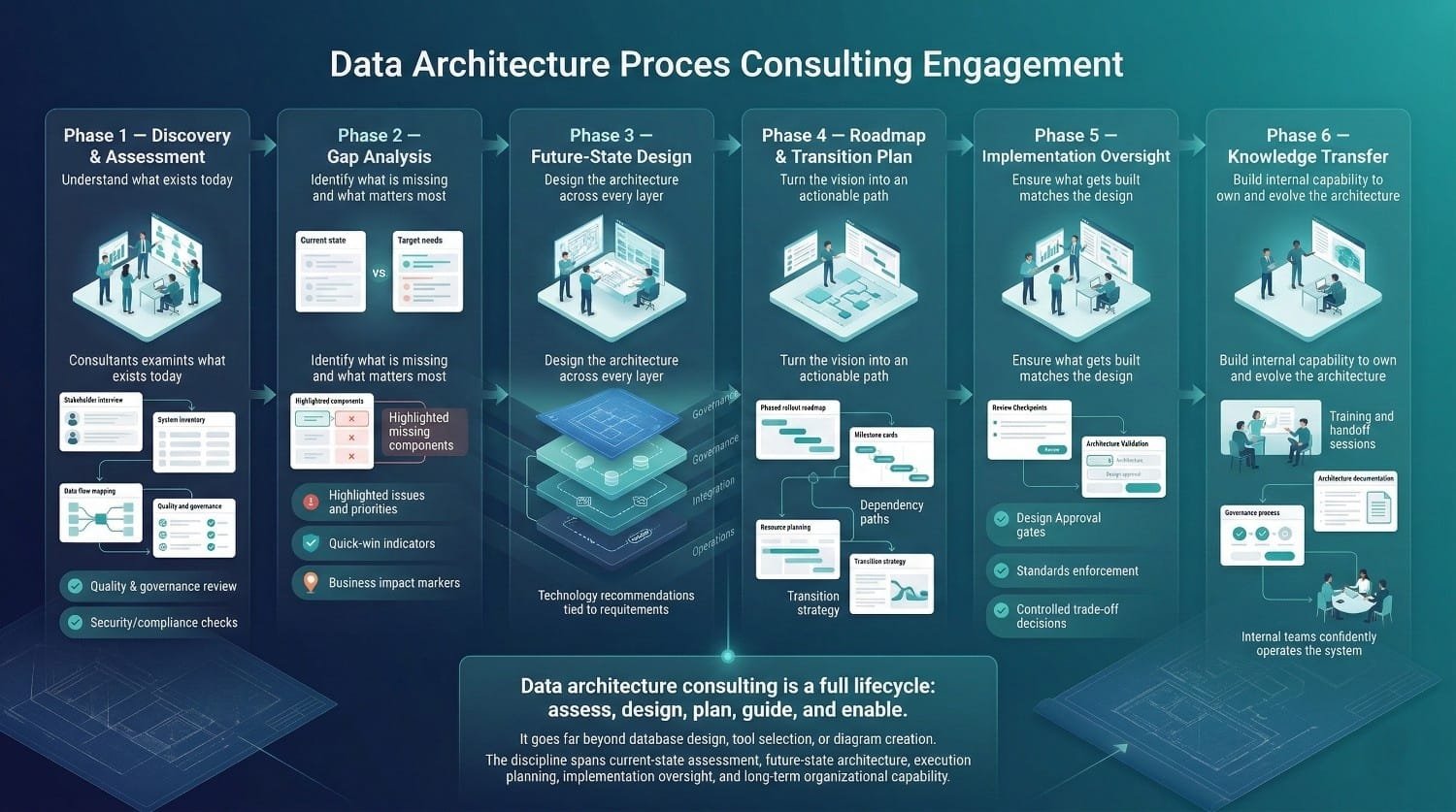

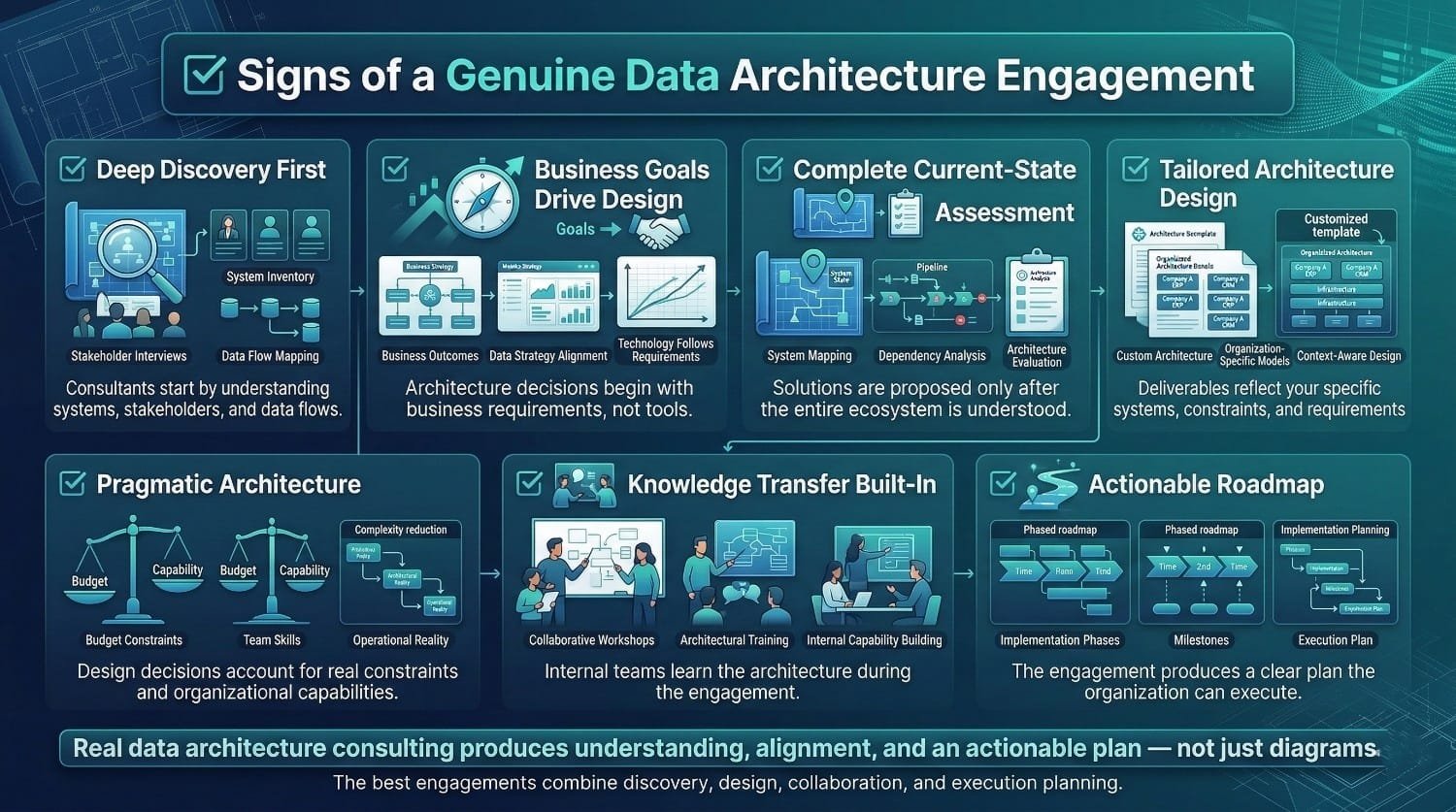

Phase 1: Discovery and Current-State Assessment

Purpose: Understand what exists today, completely, honestly, and without assumptions.

Duration: Typically 2–4 weeks

This is the most important phase. Everything that follows depends on the accuracy and completeness of what’s discovered here. Skipping or compressing this phase significantly increases the risk of engagement failure.

What actually happens:

The consulting team conducts stakeholder interviews across business and technology: not just the data team, but also business leaders, analysts, compliance officers, and executives. Each has a different view of the data landscape, different pain points, and different requirements. The architecture must serve all of them.

They perform a complete system inventory. Every database, warehouse, lake, SaaS platform, legacy system, and shadow IT data store. Every pipeline, integration, API, and manual data transfer. Every tool in the ecosystem, including BI platforms, quality monitoring, orchestration, and governance tools. Nothing is excluded. Previously undocumented systems are frequently sources of structural issues.

They map existing data flows: where data originates, how it moves, where it’s transformed, and where it’s consumed. This mapping almost always reveals undocumented dependencies, redundant data copies, and flows that nobody on the team knew existed.
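As a rough illustration of what a flow map makes possible, the sketch below treats each flow as a (source, target) pair and answers two questions: what sits downstream of a given system, and which targets are fed by more than one source. The system names and the `FLOWS` list are hypothetical examples, not taken from any real inventory.

```python
from collections import defaultdict, deque

# Hypothetical flow inventory: each tuple is (source, target).
FLOWS = [
    ("crm", "staging.customers"),
    ("billing", "staging.customers"),
    ("staging.customers", "warehouse.dim_customer"),
    ("crm", "marketing_export.csv"),
    ("warehouse.dim_customer", "bi.revenue_dashboard"),
]


def downstream_of(flows, start):
    """Return every node reachable from `start` by following flows forward."""
    children = defaultdict(set)
    for source, target in flows:
        children[source].add(target)

    seen, queue = set(), deque([start])
    while queue:
        node = queue.popleft()
        for child in children[node]:
            if child not in seen:
                seen.add(child)
                queue.append(child)
    return seen


def targets_with_multiple_sources(flows):
    """Return targets fed by more than one source, with their sorted sources."""
    sources = defaultdict(set)
    for source, target in flows:
        sources[target].add(source)
    return {t: sorted(s) for t, s in sources.items() if len(s) > 1}


crm_impact = downstream_of(FLOWS, "crm")
merge_points = targets_with_multiple_sources(FLOWS)
```

Here `crm_impact` lists everything that could be affected by a change in the CRM, and `merge_points` highlights places where data from several systems converges, which are common spots for undocumented dependencies.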

They assess data models. Are they documented? Consistent across systems? Aligned with how the business actually thinks about its data? In many organizations, the answer to all three is no, and that gap is a primary driver of data trust problems.

They profile data quality. Not by asking people whether quality is good, but by running quantitative profiling against source systems, measuring completeness, accuracy, duplication rates, and consistency. Quantitative profiling often reveals issues that were not previously visible.
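A minimal sketch of what quantitative profiling can look like, assuming the source rows have already been extracted into a list of dictionaries. The function name, field names, and sample rows are illustrative assumptions; a real engagement would typically run equivalent checks in SQL or a profiling tool against the source systems themselves.

```python
from collections import Counter


def profile_records(records, key_fields, required_fields):
    """Measure completeness per required field and the duplicate rate on a key."""
    total = len(records)
    if total == 0:
        return {"row_count": 0, "completeness": {}, "duplicate_rate": 0.0}

    # Completeness: share of rows where the field is present and non-empty.
    completeness = {}
    for field in required_fields:
        filled = sum(1 for r in records if r.get(field) not in (None, ""))
        completeness[field] = filled / total

    # Duplication: rows beyond the first occurrence of each key value.
    key_counts = Counter(tuple(r.get(f) for f in key_fields) for r in records)
    duplicate_rows = sum(count - 1 for count in key_counts.values() if count > 1)

    return {
        "row_count": total,
        "completeness": completeness,
        "duplicate_rate": duplicate_rows / total,
    }


# Hypothetical sample extracted from a customer table.
sample = [
    {"customer_id": 1, "email": "a@example.com", "country": "DE"},
    {"customer_id": 2, "email": "", "country": "US"},
    {"customer_id": 2, "email": "b@example.com", "country": None},
    {"customer_id": 3, "email": "c@example.com", "country": "FR"},
]

report = profile_records(
    sample, key_fields=["customer_id"], required_fields=["email", "country"]
)
```

For this sample, `report` shows 75% completeness for both `email` and `country` and a 25% duplicate rate on `customer_id`. Accuracy and cross-system consistency need reference data to compare against, so they are not covered by this sketch.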

They review governance practices. Is ownership defined? Are stewards active? Is lineage tracked? Are quality standards monitored? Is the data catalog maintained? In most organizations, some of these exist in theory. Few exist in practice.

They assess security and compliance posture. Data classification, encryption practices, access control consistency, and regulatory adherence. They identify where sensitive data exists that the organization didn’t know about, which happens more often than anyone wants to admit.

They identify pain points, bottlenecks, risks, and technical debt. Not just the obvious ones, but the systemic ones: the architectural root causes that produce the symptoms the organization has been treating individually.

The output is a documented current-state assessment, understandable by both technical and business stakeholders, that serves as the foundation for everything that follows. It’s the honest mirror that most organizations have never held up to their data ecosystem.

Phase 2: Gap Analysis and Opportunity Identification

Purpose: Identify what’s broken, what’s missing, and what matters most.

Duration: Typically 1–2 weeks (often overlapping with late Phase 1)

Discovery tells you what exists. Gap analysis tells you the distance between where you are and where you need to be.

What actually happens:

The consulting team compares the current state against three benchmarks: the organization’s own stated business requirements, the scalability and performance needs implied by growth projections, and industry best practices for organizations of similar scale and complexity.

They identify architectural gaps, systematically, across every layer. Missing data models. Absent governance frameworks. Integration patterns that can’t scale. Security controls that don’t cover sensitive data. Consumption interfaces that don’t serve the users who need them. Operational monitoring that doesn’t exist.

They prioritize gaps, not by technical severity alone, but by business impact, risk exposure, and effort to remediate. A gap that’s technically concerning but has low business impact gets a different priority than one that’s actively blocking a revenue-generating initiative.
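One way to make this kind of prioritization explicit is a simple weighted score. The sketch below is an assumption-laden illustration: the 1 to 5 scales, the weights, and the example gaps are all hypothetical, and a real engagement would agree on them with stakeholders rather than hard-code them.

```python
from dataclasses import dataclass


@dataclass
class Gap:
    name: str
    business_impact: int  # 1 (low) to 5 (high), hypothetical scale
    risk_exposure: int    # 1 (low) to 5 (high), hypothetical scale
    effort: int           # 1 (small) to 5 (large), hypothetical scale


def priority_score(gap, impact_weight=0.5, risk_weight=0.3, effort_weight=0.2):
    """Higher impact and risk raise the score; higher effort lowers it."""
    ease = 6 - gap.effort  # invert effort so that cheaper fixes score higher
    return (
        impact_weight * gap.business_impact
        + risk_weight * gap.risk_exposure
        + effort_weight * ease
    )


# Hypothetical gaps from a gap analysis.
gaps = [
    Gap("Inconsistent index naming", business_impact=1, risk_exposure=1, effort=1),
    Gap("Unencrypted PII in staging", business_impact=3, risk_exposure=5, effort=2),
    Gap("No canonical customer model", business_impact=5, risk_exposure=3, effort=3),
]

ranked = sorted(gaps, key=priority_score, reverse=True)
ranked_names = [gap.name for gap in ranked]
```

With these illustrative weights, the missing customer model ranks first, the unencrypted PII second, and the naming inconsistency last, even though it is the cheapest to fix. Changing the weights changes the ranking, which is exactly the conversation the score is meant to provoke.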

They identify quick wins. Not every gap requires a major architectural intervention. Some can be addressed with targeted changes that deliver immediate value, and building early momentum is strategically important for maintaining organizational support.

They benchmark against reference architectures relevant to the organization’s industry and scale. Not to copy someone else’s architecture, but to identify where the organization lags behind peers and where targeted investment would yield the highest return.

The output is a prioritized gap analysis, a map of what’s missing, what matters most, and where the highest-impact opportunities are. This becomes the input for the architecture design.

Phase 3: Future-State Architecture Design

Purpose: Design the architecture the organization should be building toward, across every layer.

Duration: Typically 3–6 weeks

This is the core creative and technical work of the engagement. It’s where the consulting team’s experience, pattern recognition, and design skills produce the most value.

What actually happens:

The consulting team designs the target-state architecture across all eight layers: conceptual, logical, physical, integration, governance, security, consumption, and operational. Not as separate documents, but as an interconnected design where every layer is consistent with every other layer.

They define architectural principles and standards that will govern ongoing decisions. These principles are what keep the architecture coherent as it evolves, even after the consulting team is gone. Principles like “single source of truth for every business entity” or “governance is embedded in the pipeline, not applied after” become the decision-making framework for every future architectural choice.

They select architectural patterns. Centralized warehouse, data lake, lakehouse, data mesh, data fabric, event-driven, streaming-first, or hybrid: the pattern is chosen based on the organization’s specific requirements, team capabilities, and growth trajectory rather than on industry trends alone.

They design data models at multiple levels. Enterprise-level conceptual models that create shared language. Domain-specific logical models that define entity structures and relationships. Physical model guidelines that translate logical design into platform-specific implementation.

They design the integration architecture. How data will flow between systems in the future state: which patterns, which protocols, which platforms, and how the integration layer relates to the storage and governance layers.

They design the governance framework. Ownership model, stewardship roles, quality standards with specific thresholds and monitoring, lineage tracking architecture, data catalog approach, and policy framework. Designed as an architectural component, not a separate initiative.

They design the security architecture. Data classification scheme, access control model, encryption strategy, masking and anonymization approach, and audit framework. Embedded in the architecture, not layered on top.

They design the consumption architecture. How business users, analysts, data scientists, and applications will access data: semantic layers, self-service interfaces, API designs, feature stores, and data product specifications.

They make technology recommendations. Vendor-neutral evaluation of platforms and tools that implement the target architecture, based on requirements analysis, not partnerships. The recommendations include rationale, alternatives considered, and trade-offs accepted.

And critically, they ensure the design is pragmatic. Every recommendation accounts for the organization’s actual budget, the team’s actual skills, the organizational culture, and realistic timelines. An architecture that is technically sound but infeasible to implement lacks practical value.

The output is a comprehensive target-state architecture, documented, explained, and designed for the organization’s specific reality.

Phase 4: Roadmap and Transition Planning

Purpose: Turn the future-state vision into an actionable, phased plan.

Duration: Typically 1–3 weeks

A target-state architecture without a practical path to get there is aspiration, not a plan. This phase bridges design and execution.

What actually happens:

The consulting team breaks the future state into phased, achievable milestones. Not a big-bang transformation, but a sequenced series of steps that each deliver measurable value while building toward the complete vision.

They prioritize phases based on three factors: business value (which phases unblock the highest-impact initiatives), risk reduction (which phases address the most dangerous gaps), and dependency management (which phases must precede others).
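The interplay of these three factors can be sketched as a dependency-aware ordering: dependencies decide which phases are eligible, and value plus risk reduction decides the order among eligible phases. The phase names and scores below are hypothetical, and the sketch uses Python’s standard `graphlib` module (available from Python 3.9).

```python
from graphlib import TopologicalSorter

# Hypothetical roadmap phases with illustrative 1-5 scores.
PHASES = {
    "canonical_customer_model": {"value": 5, "risk_reduction": 2, "depends_on": []},
    "governance_framework": {"value": 3, "risk_reduction": 3, "depends_on": []},
    "warehouse_migration": {
        "value": 4,
        "risk_reduction": 2,
        "depends_on": ["canonical_customer_model"],
    },
    "self_service_analytics": {
        "value": 5,
        "risk_reduction": 1,
        "depends_on": ["warehouse_migration", "governance_framework"],
    },
}


def sequence_phases(phases):
    """Order phases so prerequisites come first; rank ready phases by score."""
    sorter = TopologicalSorter(
        {name: spec["depends_on"] for name, spec in phases.items()}
    )
    sorter.prepare()  # raises graphlib.CycleError on circular dependencies

    def score(name):
        return phases[name]["value"] + phases[name]["risk_reduction"]

    order = []
    while sorter.is_active():
        # Among phases whose prerequisites are complete, take the highest score
        # first; the name is a tie-breaker so the result is deterministic.
        ready = sorted(sorter.get_ready(), key=lambda n: (-score(n), n))
        for name in ready:
            order.append(name)
            sorter.done(name)
    return order


roadmap_order = sequence_phases(PHASES)
```

For this example the order is the customer model, then the governance framework, then the warehouse migration, then self-service analytics. The self-service phase has a high value score but still comes last because both of its prerequisites must finish first.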

They define what “done” looks like for each phase: specific, measurable criteria that confirm a phase is complete before the next one begins. Without these, phases blur together and progress becomes unmeasurable.

They identify dependencies, prerequisites, and blockers. Which teams need to be involved. Which systems need to change first. Which organizational decisions need to be made before technical work can proceed. Which external factors (vendor timelines, regulatory deadlines) constrain the schedule.

They estimate effort, timeline, and resource requirements for each phase, giving leadership the information needed to budget, staff, and plan.

They design the transition strategy: how to move from current state to future state without disrupting ongoing operations. This is where architecture meets operational reality. The business can’t stop while the architecture is rebuilt. Coexistence strategies, parallel running, and phased cutover plans are designed to keep everything operational during the transition.

The output is an actionable roadmap, phased, prioritized, resourced, and designed for real-world execution.

Phase 5: Implementation Guidance and Oversight

Purpose: Ensure what gets built matches what was designed.

Duration: Varies, typically spans the implementation period (weeks to months)

Architecture design is one thing. Implementation is another. Without architectural oversight during the build, engineering teams inevitably make local decisions that deviate from the design, sometimes for good reasons, often for expediency. Those deviations accumulate into an implementation that looks nothing like the architecture it was supposed to follow.

What actually happens:

The consulting team provides architectural oversight during implementation, not writing code, but reviewing designs, validating decisions, and ensuring consistency with the architecture.

They review key design decisions made by engineering teams. When the implementation team faces a choice that has architectural implications (a new table structure, a different integration pattern, a deviation from the data model), the architect weighs in before the decision is implemented.

They conduct architecture reviews at critical milestones. Before each phase goes live, the architecture is reviewed against the design to confirm alignment, and to catch deviations early when they’re cheap to fix.

They resolve architectural trade-offs that arise during implementation. Reality always introduces constraints that weren’t visible during design. The architect helps navigate these trade-offs, adjusting the design where necessary while preserving architectural integrity.

They ensure standards and patterns are being followed consistently. The standards established during design (naming conventions, modeling patterns, integration standards, governance practices) need enforcement during build. Without oversight, standards can erode early in implementation.
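Some of this enforcement can be automated. As a small sketch, the check below validates table names against a naming standard. The specific rules (snake_case plus a layer prefix such as `stg_`, `dim_`, or `fact_`) are an assumed example of a standard, not one the engagement necessarily prescribes.

```python
import re

# Assumed standard: lowercase snake_case names with a layer prefix.
SNAKE_CASE = re.compile(r"^[a-z][a-z0-9]*(_[a-z0-9]+)*$")
ALLOWED_PREFIXES = ("stg_", "dim_", "fact_")


def naming_violations(table_names):
    """Return a mapping of table name to the list of rules it breaks."""
    violations = {}
    for name in table_names:
        problems = []
        if not SNAKE_CASE.match(name):
            problems.append("not snake_case")
        if not name.startswith(ALLOWED_PREFIXES):
            problems.append("missing layer prefix")
        if problems:
            violations[name] = problems
    return violations


# Hypothetical table names pulled from a schema.
found = naming_violations(
    ["dim_customer", "FactOrders", "stg_crm_accounts", "tmp_export"]
)
```

In this example `FactOrders` breaks both rules and `tmp_export` lacks a layer prefix, while the other two names pass. A check like this could run in code review or CI so that deviations are caught before they reach production.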

Phase 6: Knowledge Transfer and Organizational Enablement

Purpose: Leave the organization capable of owning and evolving the architecture independently.

Duration: Ongoing throughout the engagement, with a focused period at the end

The engagement will end. The consultants will leave. The architecture must remain, and the organization must be able to maintain, operate, and evolve it without external help.

What actually happens:

The consulting team trains internal teams on the architecture, the standards, and the decision-making frameworks. Not a single training session, but a continuous process of working alongside internal staff throughout the engagement, so the knowledge is built through participation, not just presentation.

They document the architecture in formats the organization can maintain. Not in the consultant’s preferred format, but in formats that fit the organization’s tools, practices, and team. Documentation that can’t be maintained internally may become outdated quickly.

They establish architecture governance processes. Review boards, decision logs, exception handling, and standards enforcement mechanisms: the organizational structures that keep the architecture healthy after the consulting team leaves.

They verify that the organization can operate independently. Before the engagement closes, the consulting team confirms that internal staff can make architectural decisions, onboard new components, maintain documentation, and evolve the design, without calling the consultant for every question.

They build internal architectural capability for the long term. Not just transferring knowledge about this specific architecture, but developing the skills and judgment that enable the internal team to handle architectural decisions going forward.

The output is less a document and more an organizational capability: an organization that owns its architecture and can evolve it confidently.

The Complete Picture

These six phases (discovery, gap analysis, design, roadmap, implementation guidance, and knowledge transfer) are what data architecture consulting actually involves. Not all engagements include every phase. Some organizations need only an assessment and roadmap. Others need the full lifecycle.

But understanding the complete picture is essential, because it reveals how far the discipline extends beyond the common misconceptions of “database design” or “tool selection” or “diagram creation.”

Data architecture consulting is the practice of designing the structural foundation of the data ecosystem, from conceptual model to operational monitoring, from business alignment to security architecture, from current-state assessment to knowledge transfer.

It is comprehensive, strategic, technical, and organizational in scope. And that combination is what makes it both difficult to do well and highly valuable when executed effectively.

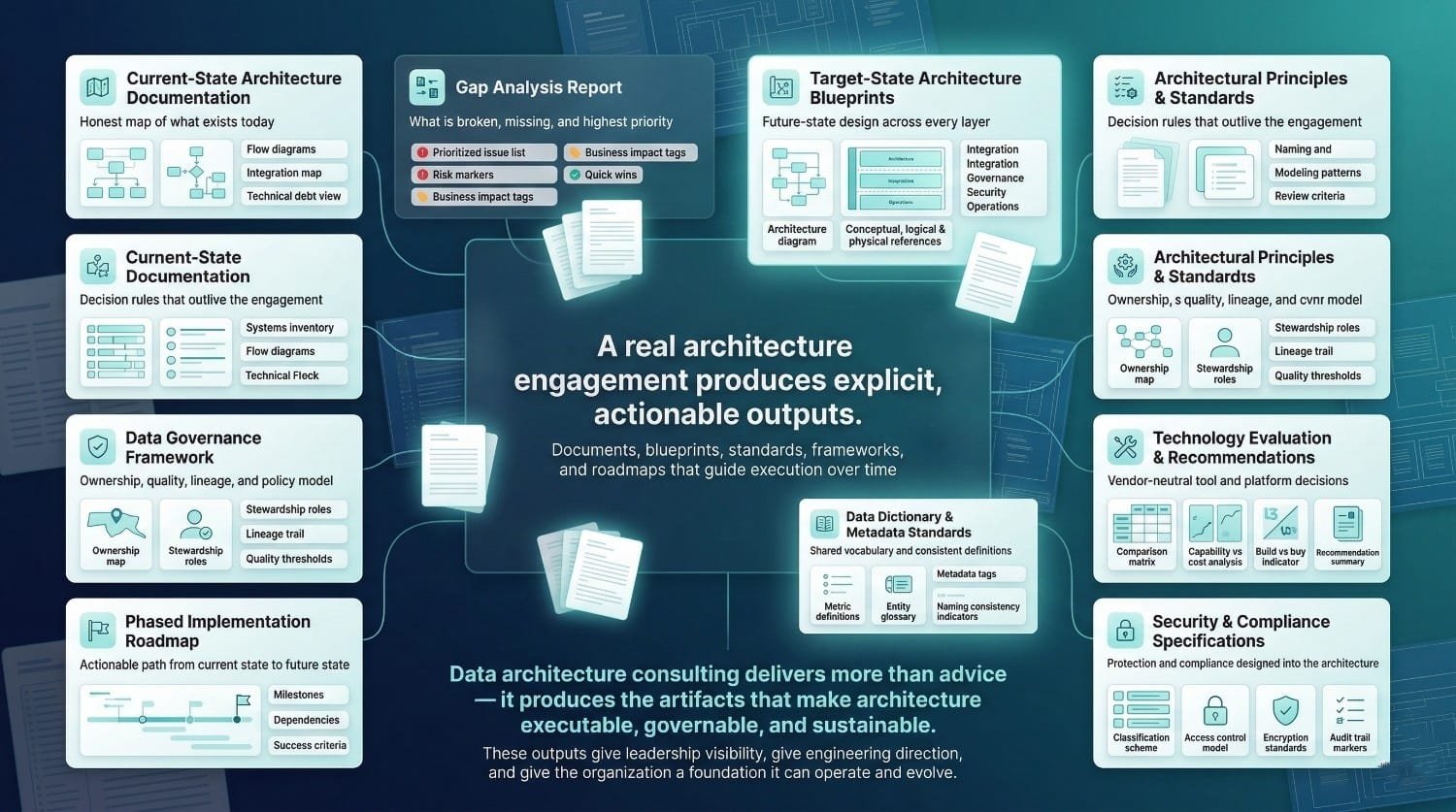

What Data Architecture Consulting Delivers (Tangible Outputs)

One of the reasons data architecture consulting is misunderstood is that its outputs are less visible than a working pipeline or a new dashboard. This section makes the deliverables explicit (every document, every decision, every organizational outcome) so you know exactly what a real engagement produces.

Documentation and Artifacts

These are the tangible deliverables, the documents, diagrams, and specifications that the engagement produces.

Current-State Architecture Documentation

The honest map of what exists today. This includes a complete systems inventory covering every database, warehouse, lake, SaaS platform, and legacy system in the ecosystem. Data flow diagrams showing how data moves from source through transformation to consumption. Integration maps documenting every connection between systems, including the undocumented ones that discovery reveals. A data model assessment evaluating what models exist, their quality, their coverage, and their alignment with business concepts. And a technical debt inventory cataloging the accumulated shortcuts, workarounds, and structural problems that need to be addressed.

Many organizations do not maintain a consolidated current-state architecture document. When it’s produced, it’s almost always the first time anyone has seen the complete picture in one place. That visibility alone frequently provides significant value during the discovery phase.

Gap Analysis Report

The prioritized assessment of what’s broken, what’s missing, and what matters most. Each gap is described with its business impact, its risk level, and an estimated effort to remediate. Quick wins are identified separately from structural improvements. The report gives leadership a clear, honest picture of the distance between where the architecture is and where it needs to be, with enough specificity to make investment decisions.

Target-State Architecture Blueprints

The core design deliverable. Layered architecture diagrams showing every component and how they relate across storage, integration, governance, security, consumption, and operations. Data models at the conceptual, logical, and physical levels. Integration pattern specifications defining how data flows in the future state. Technology stack diagrams showing which platforms implement which layers. These blueprints typically serve as a reference for engineering execution over the next several years, subject to evolution.

Architectural Principles and Standards Document

The decision-making framework that outlives the engagement. Core principles that guide every future architectural choice, things like “every business entity has a single authoritative source,” “governance is embedded in pipelines, not applied after the fact,” and “security is designed in, not bolted on.” Alongside the principles, specific standards covering naming conventions, modeling patterns, pipeline development practices, documentation requirements, and code review criteria. These standards are what prevent the architecture from reverting to organic chaos after the consultants leave.

Data Governance Framework and Operating Model

The complete governance design, not just policies, but the operational model that makes governance work. Data ownership model mapping every domain to an accountable owner. Stewardship roles with defined responsibilities. Quality standards with specific thresholds, monitoring approach, and escalation procedures. Lineage tracking requirements specifying what must be tracked and how. Data catalog strategy defining how metadata is captured, maintained, and made discoverable. Policy framework covering retention, classification, sharing, and lifecycle management.

Technology Evaluation and Recommendation Report

The vendor-neutral assessment of which tools fit the architecture. Evaluation criteria derived from architectural requirements, not vendor feature lists. Platform comparisons across capability, cost, scalability, ecosystem fit, and operational burden. Specific recommendations with documented rationale, alternatives considered, and trade-offs accepted. Build vs. buy analysis for each component of the architecture.

Phased Implementation Roadmap

The actionable plan that turns the architecture into reality. Phases sequenced by business value, risk, and dependency. Each phase defined with scope, milestones, success criteria, resource requirements, and timeline estimates. Dependencies and prerequisites mapped. Decision points and checkpoints identified. The roadmap is what leadership uses to budget, staff, and track the transformation.

Data Dictionary and Metadata Standards

The shared vocabulary that ensures consistency. Business definitions for every key entity and metric. Technical metadata standards specifying how schemas, tables, and fields are documented. Naming conventions that apply across all systems. This deliverable is what makes the data catalog useful, and what prevents the “same word, different meaning” problem that undermines data trust.

Security and Compliance Architecture Specifications

The detailed security design. Data classification scheme with handling rules for each class. Access control model specifying role-based and attribute-based permissions at every layer. Encryption standards for data at rest and in transit. Audit trail requirements defining what’s logged, where, and for how long. Compliance mapping outlining how the architecture supports relevant regulatory requirements such as GDPR, HIPAA, SOX, or CCPA where applicable.
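To make the idea of a classification scheme with handling rules concrete, here is a small sketch. The four class names, the rule fields, and the retention values are hypothetical placeholders; actual classes and rules depend on the organization’s policies and regulatory obligations.

```python
from enum import Enum


class Classification(Enum):
    # Hypothetical classes, ordered from least to most sensitive.
    PUBLIC = 1
    INTERNAL = 2
    CONFIDENTIAL = 3
    RESTRICTED = 4


# Hypothetical handling rules per class (values are placeholders).
HANDLING_RULES = {
    Classification.PUBLIC: {
        "encrypt_at_rest": False, "mask_in_non_prod": False, "audit_retention_days": 90,
    },
    Classification.INTERNAL: {
        "encrypt_at_rest": True, "mask_in_non_prod": False, "audit_retention_days": 180,
    },
    Classification.CONFIDENTIAL: {
        "encrypt_at_rest": True, "mask_in_non_prod": True, "audit_retention_days": 365,
    },
    Classification.RESTRICTED: {
        "encrypt_at_rest": True, "mask_in_non_prod": True, "audit_retention_days": 730,
    },
}


def handling_for(column_classifications):
    """Handle a dataset according to its most sensitive column."""
    strictest = max(column_classifications.values(), key=lambda c: c.value)
    return strictest, HANDLING_RULES[strictest]


# Hypothetical column-level classification of a customer table.
customer_table = {
    "customer_id": Classification.INTERNAL,
    "email": Classification.CONFIDENTIAL,
    "signup_channel": Classification.PUBLIC,
}

table_class, table_rules = handling_for(customer_table)
```

Because `email` is the most sensitive column, the whole table is treated as `CONFIDENTIAL` in this sketch. Treating a dataset by its strictest column is one common design choice; column-level enforcement is an alternative where the platform supports it.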

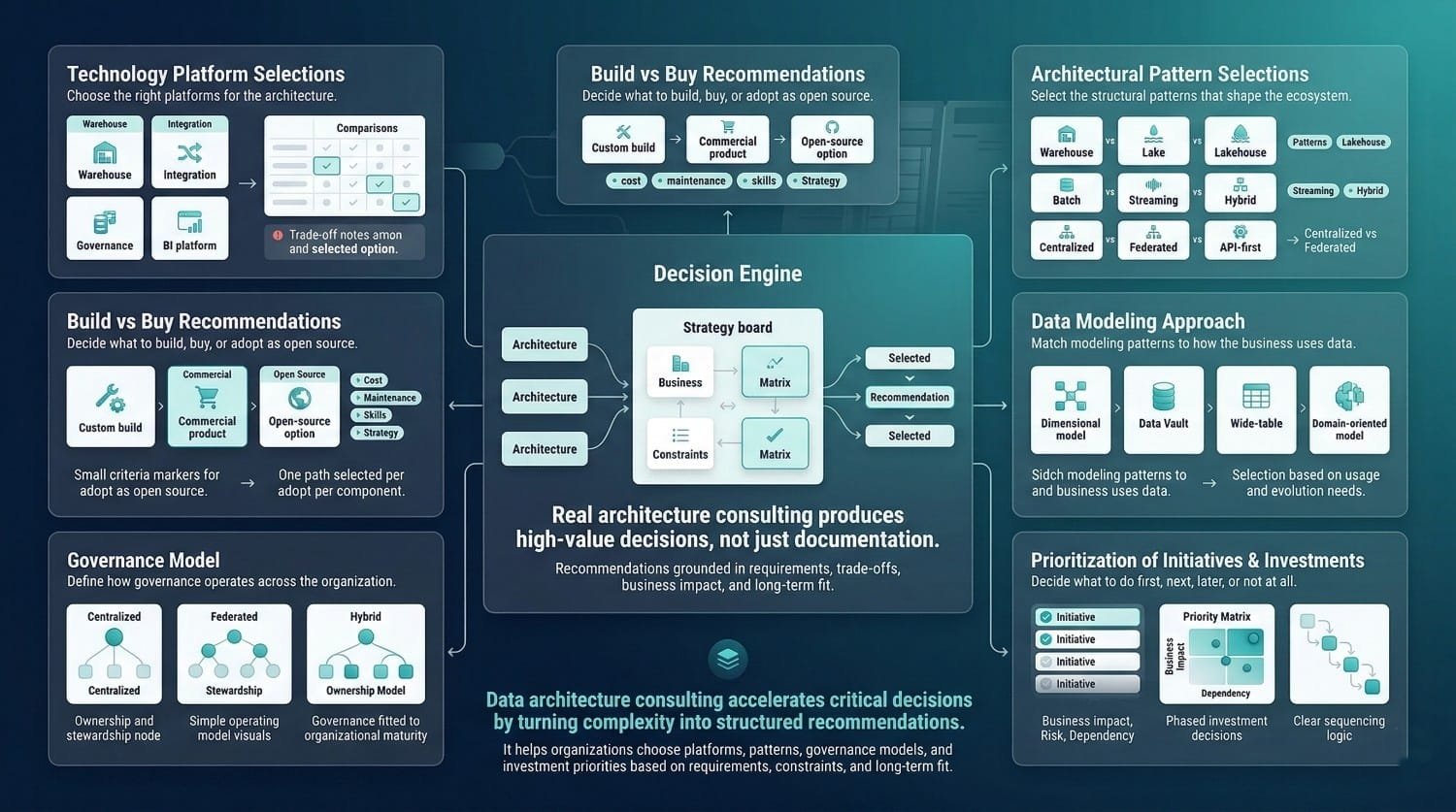

Technology Platform Selections

Which warehouse, which integration platform, which governance tool, which BI system, selected primarily based on architectural requirements rather than vendor demonstrations or analyst rankings alone. Each selection comes with documented justification explaining why this option was chosen over alternatives and what trade-offs were accepted.

Build vs. Buy Recommendations

For each component of the architecture, should the organization build a custom solution, buy a commercial product, or use an open-source option? Each recommendation is grounded in the organization’s specific context: team skills, budget, maintenance capacity, and long-term strategy.

Architectural Pattern Selections

The foundational pattern choices that shape the entire ecosystem. Warehouse vs. lake vs. lakehouse. Batch vs. streaming vs. hybrid. Centralized vs. federated vs. mesh. Hub-and-spoke vs. event-driven vs. API-first. Each selection is explained with the reasoning, the alternatives considered, and the conditions under which the choice should be revisited.

Data Modeling Approach

Dimensional modeling, Data Vault, wide-table approaches, or domain-oriented modeling patterns. The modeling approach is selected based on the organization’s query patterns, team skills, tool capabilities, and evolution requirements, not based on the consultant’s personal preference.

Governance Model

Centralized governance vs. federated vs. hybrid. The model is matched to the organizational structure, the team’s maturity, and the regulatory environment. It specifies not just who governs, but how governance operates day-to-day.

Prioritization of Initiatives and Investments

Perhaps the most pragmatically valuable decision output. Given limited budget and limited team capacity, what should be done first? Second? Third? What can wait? What should be deferred permanently? Prioritization based on business impact, risk, and architectural dependency, not on who lobbies hardest.

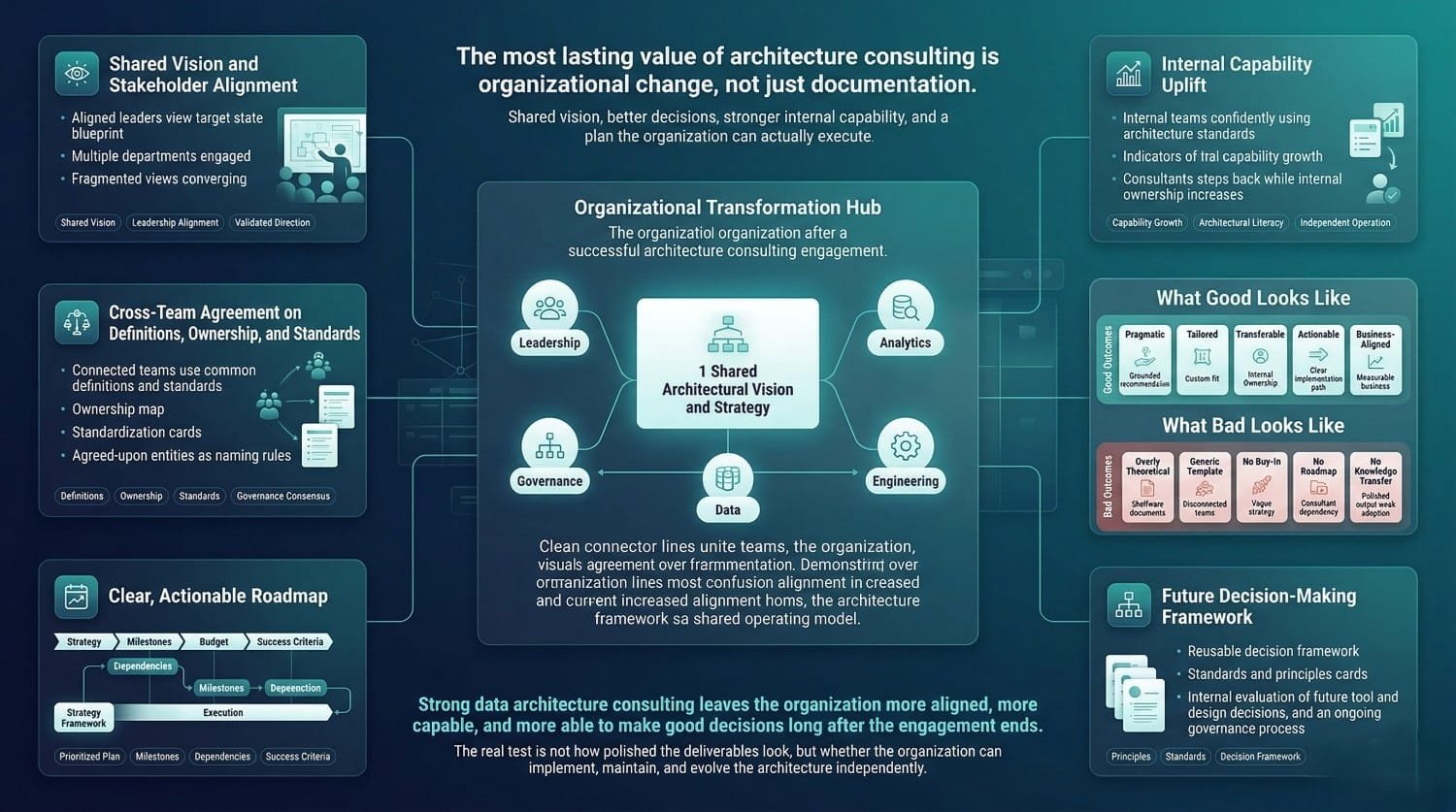

Stakeholder Alignment on a Shared Vision

Before the engagement, different leaders had different mental models of what the data ecosystem should look like. After the engagement, there is a broadly aligned architectural vision that has been documented, presented, and validated by the people who need to support it. This alignment is often the engagement’s most valuable outcome, because without it, every subsequent investment faces political resistance.

Cross-Team Agreement on Definitions, Ownership, and Standards

The workshops and facilitation that happen during the engagement produce agreements that internal teams had been unable to reach on their own. Marketing and finance agree on what “customer” means. Sales and operations agree on who owns pipeline data. Engineering and analytics agree on naming conventions. These agreements are captured in the governance framework and the data dictionary, but the real value is the organizational consensus itself.

A Clear, Actionable Plan

Leadership has something they didn’t have before: a plan they can execute. Not a vague aspiration. A phased, prioritized, resourced roadmap with milestones, dependencies, and success criteria. They can budget against it. They can staff for it. They can track progress. They can make informed trade-off decisions when priorities shift.

Internal Team Capability Uplift

The team that worked alongside the consultants throughout the engagement emerges with capabilities they didn’t have before. They develop increased architectural literacy and decision-making capability. They know how to evaluate design decisions against principles. They can maintain the documentation, evolve the standards, and make architectural choices within the framework the engagement established. This is the capability that sustains the architecture long after the consultants leave.

A Decision-Making Framework for the Future

Perhaps the most underappreciated outcome. The principles, standards, and governance processes established during the engagement give the organization a framework for making every future architectural decision. When a new data source needs to be integrated, the patterns exist. When a new tool is evaluated, the criteria exist. When a design conflict arises, the principles provide resolution. The organization doesn’t need the consultant for every decision, because the framework enables confident internal decision-making.

What "Good" Looks Like vs. What "Bad" Looks Like

Not all data architecture consulting deliverables are created equal. Here’s how to tell the difference.

What Good Looks Like

Pragmatic. Every recommendation accounts for the organization’s actual budget, team skills, timeline, and culture. The architecture is designed for the organization as it is, not as the consultant wishes it were. Trade-offs are documented honestly. Constraints are respected, not ignored.

Tailored. The architecture is designed for this specific organization, these specific systems, these specific requirements. It doesn’t look like a template with the company name swapped in. The design decisions are justified with reasoning specific to this context, not generic best practices.

Documented for the audience. Technical specifications are precise enough for engineers to implement. Business descriptions are clear enough for executives to understand. Governance frameworks are operational enough for stewards to follow. Each deliverable speaks to the people who’ll use it.

Transferable. The internal team understands the architecture deeply, because they helped build it. Documentation is in formats the organization can maintain. Decision rationale is captured so future team members understand not just what was decided, but why. The organization can operate independently.

Actionable. Every recommendation connects to a specific step in the roadmap. Nothing is left as a vague “consider in the future.” The path from design to implementation is clear, phased, and resourced.

Aligned with business reality. The architecture serves measurable business outcomes. Every design choice can be traced to a business requirement. The ROI is articulable in terms leadership understands.

What Bad Looks Like

Overly theoretical. The architecture is elegant on paper but impossible to implement with the organization’s actual resources. It describes an ideal state with no practical path to reach it. The consultant optimized for design beauty rather than organizational reality.

Generic templates rebranded as custom work. The deliverables look suspiciously like they could apply to any organization. The same architectural patterns, the same tool recommendations, the same governance framework, regardless of whether they fit the specific context. The company name is the only thing that’s customized.

No stakeholder engagement. The consulting team worked in isolation, emerging periodically with finished deliverables. The internal team didn’t participate in discovery, wasn’t involved in design decisions, and doesn’t fully understand the architecture. The documents are comprehensive. The organizational buy-in is zero.

No implementation path. The architecture is beautifully designed with no roadmap, no phasing, no resource estimates, and no transition strategy. The organization has a vision of where it should go but no plan for how to get there. The gap between design and execution is left as someone else’s problem.

No knowledge transfer. The consulting team holds all the architectural knowledge. The internal team can’t explain the design decisions, can’t maintain the documentation, and can’t make architectural choices without calling the consultant. The engagement created dependency, not capability.

The difference between strong and weak data architecture consulting shows up in implementation success, sustainability, and organizational capability: the organization’s ability to implement, maintain, and evolve the architecture independently after the engagement ends.

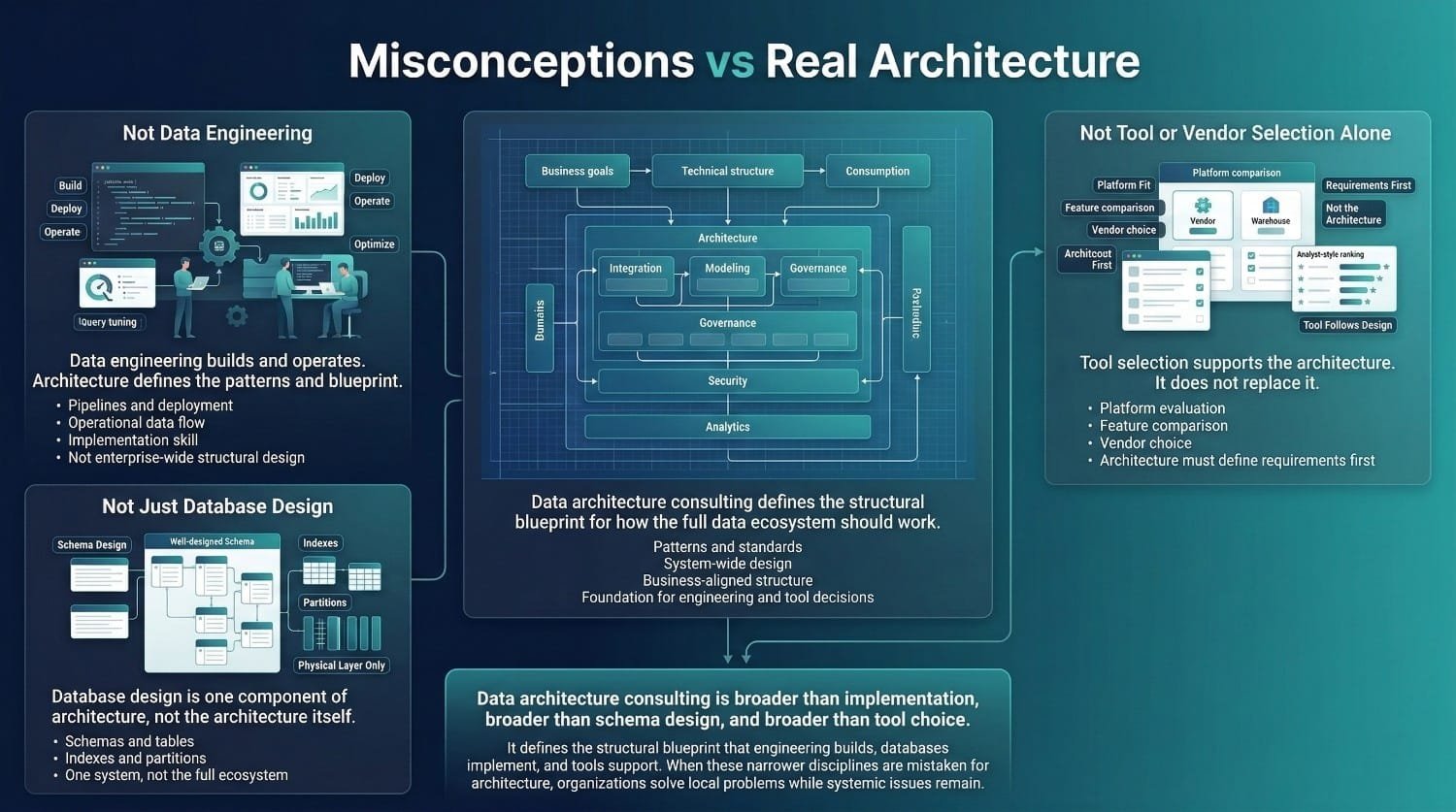

It's Not Data Engineering

This is the most frequent confusion, and the one that causes the most damage when the wrong discipline is engaged for the problem.

The Distinction

Data engineering builds, deploys, and operates. Engineers write pipeline code, configure platforms, optimize queries, manage infrastructure, and keep data flowing day to day.

Data architecture designs the blueprint. Architects define the patterns, models, standards, and structural decisions that engineering builds within.

An engineer asks: “How should I build this pipeline?” An architect asks: “What pipeline patterns should all pipelines follow, and how does this pipeline fit into the broader ecosystem?”

An engineer asks: “What’s the best index for this query?” An architect asks: “What data model design ensures that the most common query patterns perform well across the entire warehouse?”

The Relationship

They’re complementary and interdependent. Architecture without engineering is a plan that never gets implemented, beautiful diagrams that sit in a shared drive. Engineering without architecture is building without a plan, solutions that work individually but don’t fit together, creating the organic chaos that eventually requires architectural intervention to untangle.

The best outcomes happen when both work together. Architecture provides the blueprint, the standards, and the guardrails. Engineering provides the implementation expertise, the operational knowledge, and the feedback on what’s practically buildable.

When You Need Which

You need engineering when the blueprint exists and is sound, and you need skilled people to execute it. The patterns are defined, the models are designed, and the standards are established. What’s missing is capacity and implementation skill.

You need architecture when the blueprint doesn’t exist, is fundamentally flawed, or can’t support what the business needs. Strong engineering execution cannot fully compensate for structural design deficiencies. Accelerating development within a flawed architecture often amplifies existing structural issues.

You need both when you’re undertaking a major transformation, building a new platform, migrating to the cloud, consolidating post-acquisition, or modernizing a legacy environment. Architecture designs the target state. Engineering builds it. Without both, you get either an unimplemented plan or an unplanned implementation.

It's Not Just Database Design

The Distinction

Database design (schema structure, table definitions, indexing strategies, partitioning approaches) is one component of the physical layer of data architecture. It’s important. It’s also roughly 5–10% of the total architectural scope.

Data architecture consulting encompasses the entire ecosystem: how dozens or hundreds of systems work together. How data flows between them. How it’s modeled at the conceptual and logical levels. How it’s governed. How it’s secured. How it’s consumed. How it evolves.

Designing a great schema for one database while ignoring how that database relates to the warehouse, the lake, the BI platform, the integration layer, and the governance framework is like designing a great room while ignoring the rest of the building. The room might be excellent. The building might be structurally unsound.

The Consequence of Confusion

When organizations engage “architecture consulting” expecting ecosystem-level design but receive database-level design, the engagement solves a narrow problem while the systemic issues remain untouched. The database is well-designed. The architecture is still broken. And the organization has spent its architecture budget on something that doesn’t address the architectural problem.

It's Not Tool or Vendor Selection Alone

The Distinction

Selecting Snowflake vs. Databricks vs. BigQuery is a technology decision. It’s an important decision. But it’s not an architecture.

Architecture defines what you need from a technology. Tool selection determines which technology meets those needs. The architecture says: “We need a storage layer that supports semi-structured data, handles 5TB with 30% annual growth, serves both batch analytical queries and near-real-time dashboards, integrates with our existing BI platform, and fits within a $150K annual budget.” The tool selection process evaluates which platform best meets those requirements.
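To make the sequence concrete, here is a minimal sketch of how a requirements statement like the one above could be turned into a checklist that candidate platforms are screened against. The figures in `Requirements` come from the example statement; the two candidate profiles, the three-year planning horizon, and all names are hypothetical and purely illustrative.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Requirements:
    """Architectural requirements taken from the example statement above."""
    initial_tb: float = 5.0
    annual_growth: float = 0.30
    annual_budget_usd: int = 150_000
    needs_semi_structured: bool = True
    needs_near_real_time: bool = True


@dataclass(frozen=True)
class Candidate:
    """A hypothetical platform profile; every value here is made up."""
    name: str
    max_tb: float
    annual_cost_usd: int
    semi_structured: bool
    near_real_time: bool


def projected_tb(req: Requirements, years: int) -> float:
    """Compound the starting volume by the annual growth rate."""
    return req.initial_tb * (1 + req.annual_growth) ** years


def gaps(req: Requirements, cand: Candidate, horizon_years: int = 3) -> list:
    """Return the requirements the candidate fails; an empty list means it fits."""
    failed = []
    if cand.max_tb < projected_tb(req, horizon_years):
        failed.append("capacity")
    if cand.annual_cost_usd > req.annual_budget_usd:
        failed.append("budget")
    if req.needs_semi_structured and not cand.semi_structured:
        failed.append("semi-structured data")
    if req.needs_near_real_time and not cand.near_real_time:
        failed.append("near-real-time serving")
    return failed


req = Requirements()
candidates = [
    Candidate("platform_a", max_tb=50, annual_cost_usd=120_000,
              semi_structured=True, near_real_time=True),
    Candidate("platform_b", max_tb=8, annual_cost_usd=90_000,
              semi_structured=True, near_real_time=False),
]
for cand in candidates:
    print(cand.name, gaps(req, cand) or "fits")
```

At 30% annual growth, 5TB compounds to roughly 11TB after three years, so the cheaper hypothetical platform fails on capacity as well as on near-real-time serving. The point is the ordering: the requirements exist before any platform is scored.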

When organizations skip architecture and go straight to tool selection, they’re choosing a solution before defining the problem. The result is a platform purchased for the wrong reasons (the demo was impressive, a competitor uses it, an analyst report ranked it highly) that may or may not fit the actual architectural requirements.

The Consequence of Confusion

Tool selection without architectural context is a common source of costly misalignment in data infrastructure. The organization commits to a platform, often with a multi-year contract and significant migration effort, before understanding whether it’s the right fit. When the misfit becomes apparent, the organization faces a painful choice: force the architecture to fit the tool (suboptimal) or re-platform (expensive).

Data architecture consulting prevents this by defining the architecture first and selecting the tool second. The tool serves the architecture. The architecture serves the business. The sequence is important.

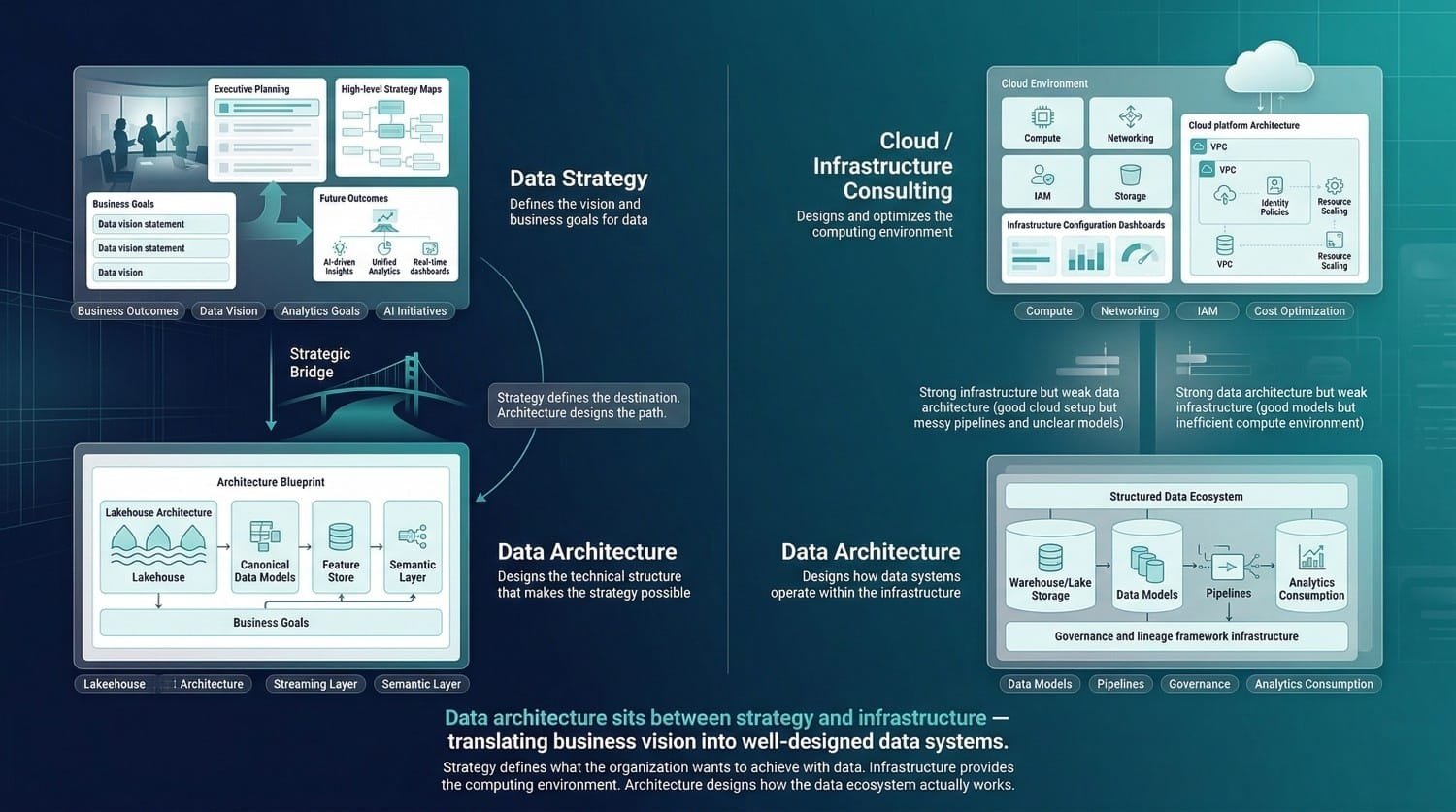

It's Not Data Strategy

The Distinction

Data strategy answers: “What do we want to achieve with data? What’s our vision? How does data support our business objectives?”

Data architecture answers: “How do we design our data systems to make that vision technically possible?”

Strategy says: “We need to become a data-driven organization with unified customer analytics, AI-powered personalization, and real-time operational dashboards.” Architecture says: “To achieve that, we need a lakehouse architecture with a canonical customer model, a real-time streaming layer, a feature store, and a semantic layer, implemented in these specific technologies, governed by this framework, and built in these phases.”

Strategy defines the destination. Architecture designs the structural path to reach it.

The Relationship

Strategy without architecture is aspiration without a plan. The organization knows where it wants to go but has no structural design for getting there. Goals are set. Budgets are allocated. But the technical foundation to support those goals doesn’t exist and nobody has designed it.

Architecture without strategy is design without direction. The technical blueprint is sound, but it’s not aligned with business priorities. The architecture supports capabilities the business doesn’t need while missing capabilities it does.

They’re complementary disciplines, often delivered together. Many data architecture consulting engagements include a strategy component, ensuring that architectural decisions are grounded in business objectives. But they’re distinct, and understanding the boundary prevents the mismatched expectation where someone buys strategy and expects architecture, or vice versa.

It's Not Cloud Consulting or Infrastructure Architecture

The Distinction

Cloud consulting focuses on the infrastructure layer: compute resources, networking configuration, identity and access management, storage provisioning, cost optimization, and cloud platform configuration.

Data architecture consulting focuses on the data layer: how data is modeled, stored, integrated, governed, secured, and consumed. These concerns exist regardless of whether the infrastructure is cloud, on-premises, or hybrid.

A cloud consultant ensures that your AWS environment is well-configured, secure, and cost-efficient. A data architect ensures that the data systems running in that environment are well-designed, well-modeled, and well-governed.

Why the Distinction Matters

It is possible to have a well-configured cloud environment alongside weak data architecture practices. The VPCs are configured correctly. The IAM policies are tight. The instances are right-sized. But the data warehouse has no coherent model. The pipelines are point-to-point spaghetti. There’s no governance. Nobody can trace a metric from report to source. The infrastructure is excellent. The data architecture is broken. No amount of cloud optimization fixes a data modeling problem.

The reverse is also true. You can have brilliant data architecture on poorly configured infrastructure. The models are elegant. The governance is mature. But the cloud environment is overprovisioned, the networking adds latency, and the security configuration has gaps. Both layers need to be right. They require different expertise. Data architecture consulting addresses the data layer. Cloud consulting addresses the infrastructure layer. Major transformations often require both, coordinated so that the data architecture defines what the infrastructure needs to support.

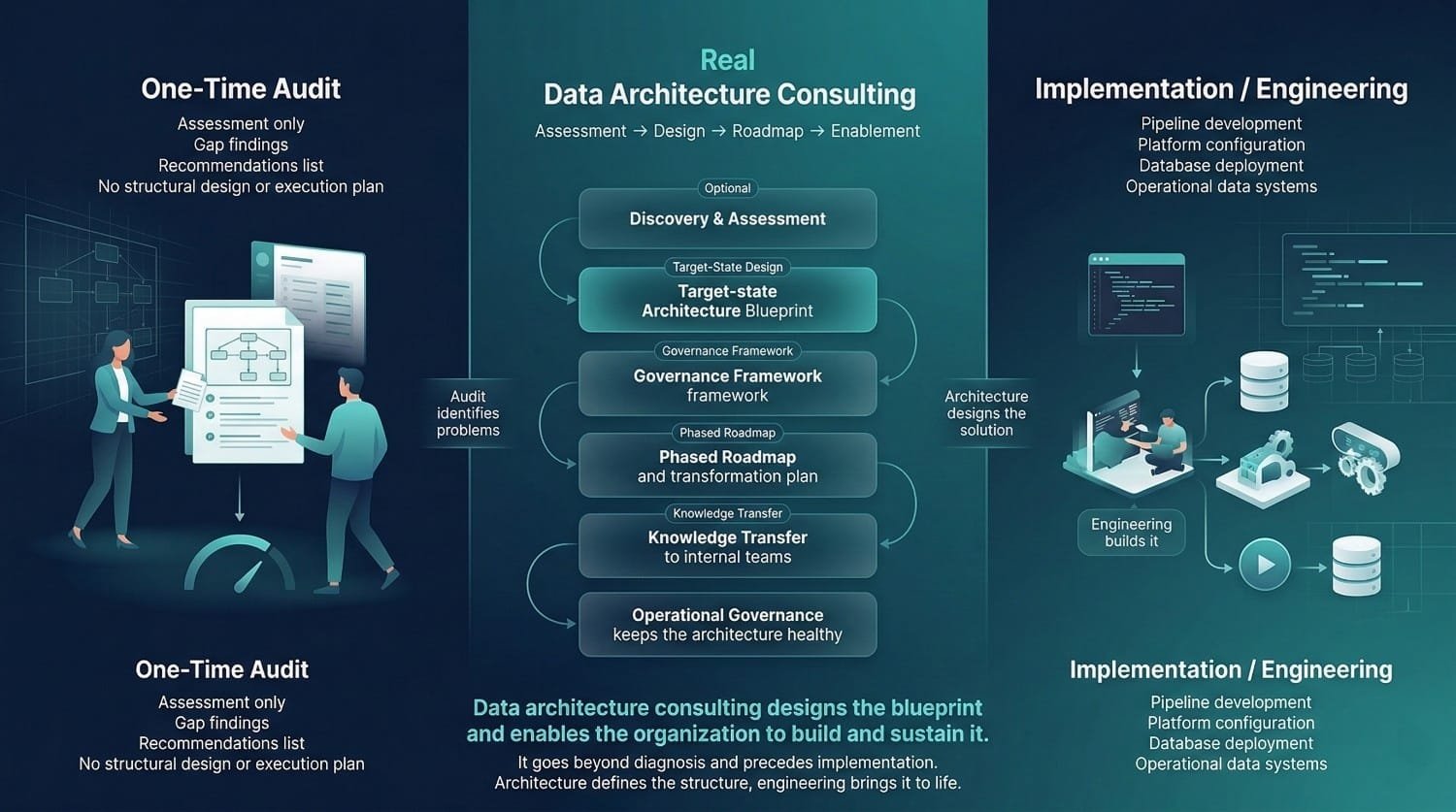

It's Not a One-Time Audit

The Distinction

An audit or assessment produces findings. Data architecture consulting typically produces findings along with a design, a plan, and organizational capability development. If the engagement delivers a PDF documenting the current state and a list of recommendations, and then the consulting team disappears, you’ve received an assessment, not architecture consulting. The assessment is a valuable component, but it’s Phase 1 of a multi-phase engagement. Stopping there is like receiving a diagnosis without a treatment plan.

What Full Architecture Consulting Includes Beyond an Audit

A target-state design that translates findings into structural solutions. A governance framework that operationalizes the recommendations. Standards and patterns that prevent the problems from recurring. A phased roadmap that turns the design into an executable plan. Knowledge transfer that enables the organization to own and evolve the architecture. Governance processes that keep the architecture healthy over time.

An audit primarily identifies gaps and risks. Architecture consulting tells you what’s wrong, designs what “right” looks like, creates a plan to get there, and ensures your team can sustain it.

The Test

If the consulting firm’s engagement ends with a deliverable handoff and no mechanism for ensuring the organization can implement, maintain, and evolve what was designed, the engagement was an audit with a label upgrade. Comprehensive data architecture consulting extends beyond diagnosis to include design, planning, knowledge transfer, and enablement.

It's Not Implementation

The Distinction

Architecture consulting designs the blueprint and guides the build. It typically doesn’t involve writing production code, deploying infrastructure, configuring platforms, or operating pipelines. Those are engineering activities, distinct from architecture, though informed by it.

The architect designs the data model. The engineer creates the tables. The architect defines the pipeline patterns. The engineer writes the pipeline code. The architect specifies the governance framework. The engineer implements the quality monitoring.

The Nuance

Some consulting firms offer both architecture and implementation services. That’s fine, even advantageous, when the same team that designed the architecture helps build it. But the two should be scoped and understood as distinct activities with different deliverables, different skill sets, and different cost structures.

When architecture and implementation are conflated, two problems arise. First, the architecture phase gets rushed to get to the “real work” of building, producing a shallow design that causes problems during implementation. Second, implementation decisions start driving architectural choices: the team designs what’s easiest to build rather than what’s structurally sound.

The Right Relationship

Architecture should precede and inform implementation. Architecture provides the blueprint, the standards, and the guardrails. Implementation provides the build, with architectural oversight ensuring alignment. They work together, but the architectural direction should be established before major implementation begins, not discovered along the way.

The Summary Principle

Data architecture consulting occupies a specific, distinct position in the data landscape. It’s not engineering (which builds), not database design (which is one component), not tool selection (which is one decision), not strategy (which defines the vision), not cloud consulting (which optimizes infrastructure), not a one-time audit (which only diagnoses), and not implementation (which executes the design).

It’s the discipline that designs the complete structural foundation, across every layer, for every stakeholder, aligned to business outcomes, and built to evolve.

When you engage it, you should know exactly what you’re getting. And when someone offers something else under the same label, you should know the difference.

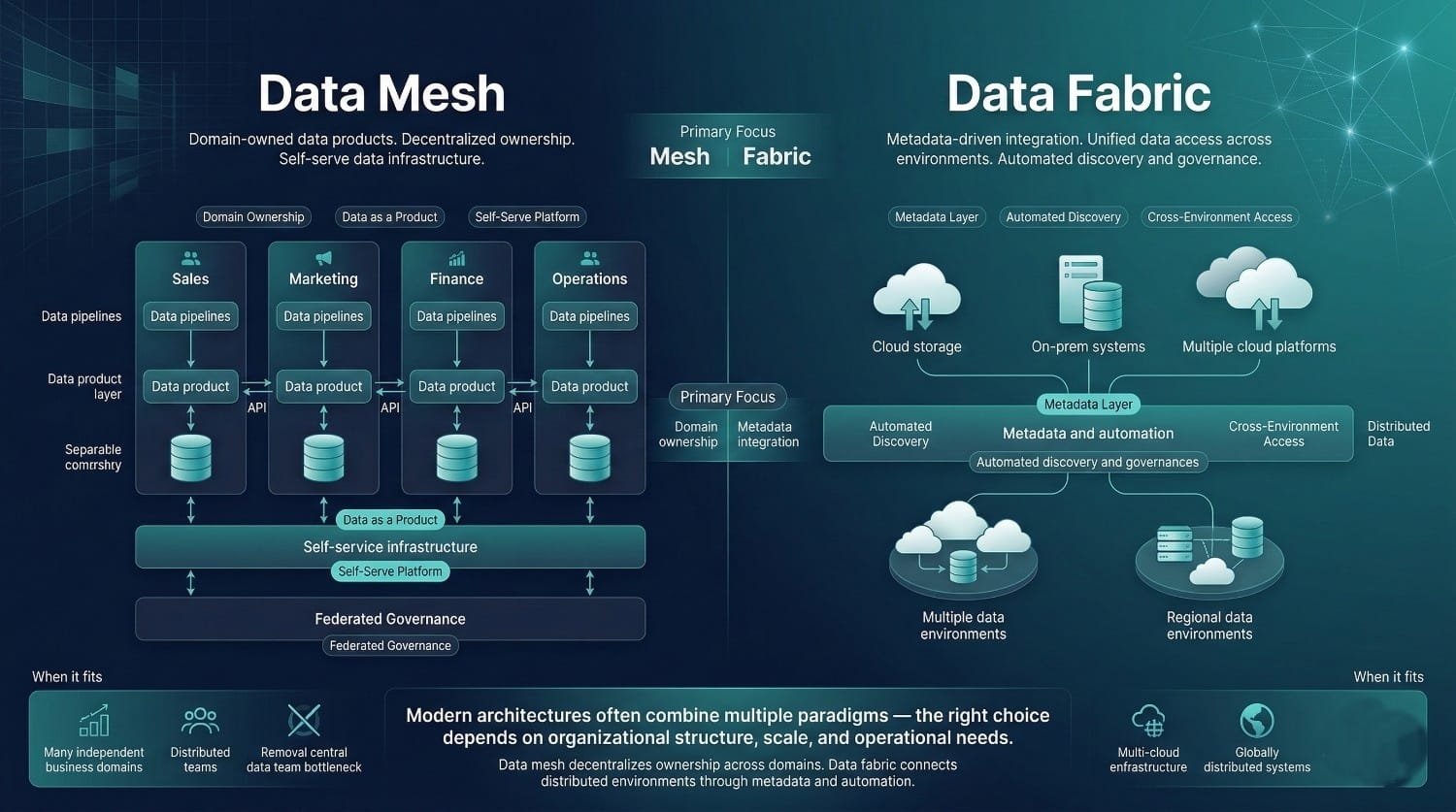

Common Architectural Paradigms a Consultant Might Recommend (And Why)

A significant portion of what data architecture consulting delivers is the selection and design of the right architectural paradigm: the foundational pattern that shapes how the entire data ecosystem is structured.

This isn’t a technology choice. It’s a structural design choice that determines how data is stored, how it moves, who owns it, and how it’s consumed. Getting it right sets the organization up for years of productive data work. Getting it wrong can create structural constraints that are difficult and costly to overcome through engineering alone. Here are the paradigms a consultant might recommend, with honest assessments of when each fits and where each falls short.

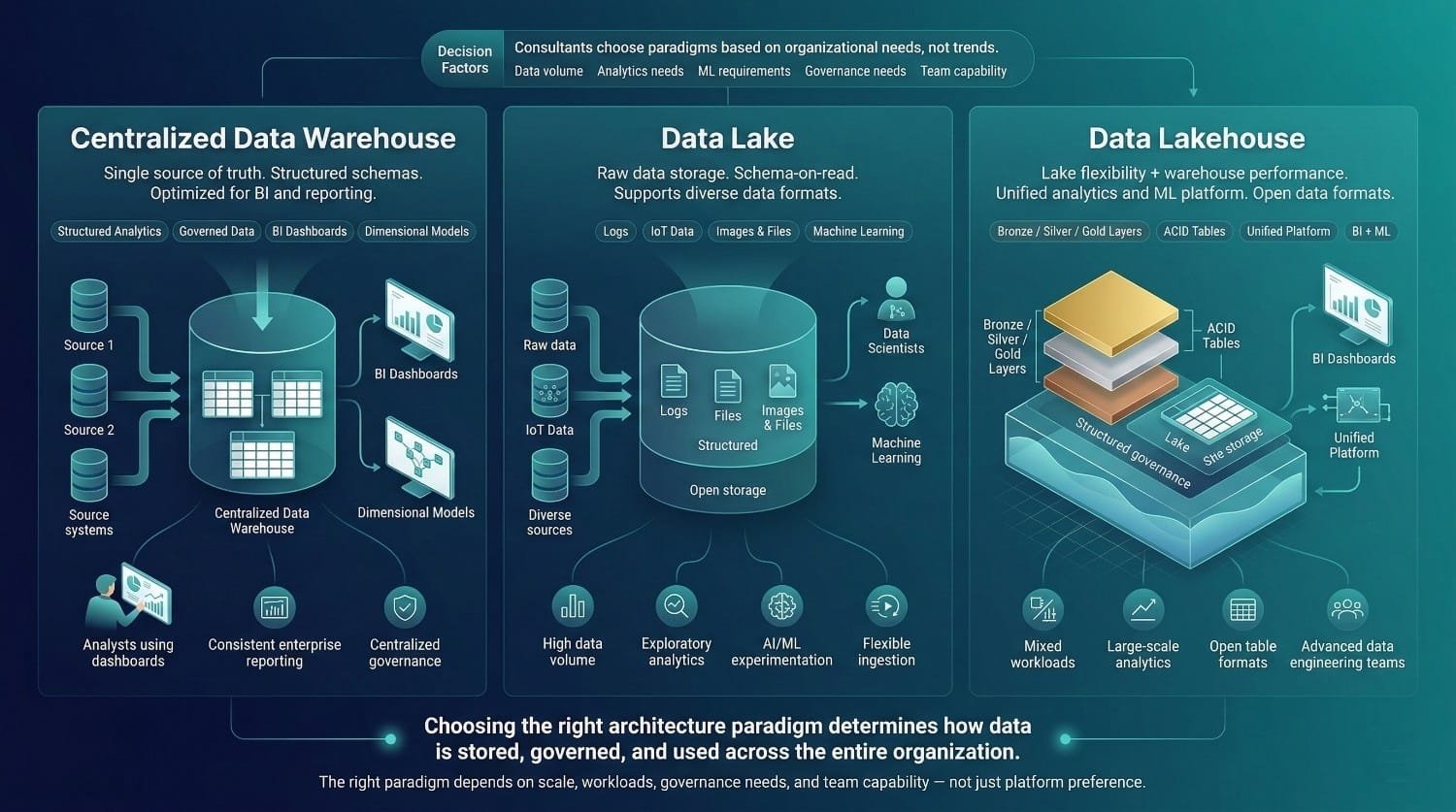

Centralized Data Warehouse

What It Is

A single, structured repository optimized for analytical queries and business reporting. Data is loaded from source systems, transformed into well-defined schemas (typically dimensional models or similar structured approaches), and served to analysts and BI tools through a governed, consistent interface.

This is one of the most established and widely adopted paradigms in analytical data architecture. It’s been the default analytical architecture for decades, and for good reason.

When It Fits

A centralized warehouse is often the right choice when the organization has a strong BI and reporting culture with well-defined analytical needs. When data volumes are moderate (terabytes, not petabytes). When consistency, governance, and a single source of truth are top priorities. When the primary consumers are business analysts and executives using structured queries and dashboards. And when the organization values reliability and simplicity over flexibility.

Popular Platforms

Snowflake, Google BigQuery, Amazon Redshift, Azure Synapse, and Teradata (legacy but still widely deployed).

Trade-Offs

Warehouses can become bottlenecks when a single team controls all data access and every request goes through a central queue. Without additional extensions, they are generally less optimized for large volumes of unstructured data such as documents, images, logs, and streaming events. At extreme scale, cost and performance can become challenging, though modern cloud warehouses have pushed these limits significantly. And the structured, schema-on-write approach requires modeling decisions to be made upfront, before data is loaded, which doesn’t always align with exploratory or AI/ML use cases.

Data Lake

What It Is

A large-scale storage layer that accepts data in any format (structured, semi-structured, and unstructured) without requiring schema definition upfront. Data is stored in its raw form and structured at the point of consumption (schema-on-read).
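The difference between the warehouse's schema-on-write and the lake's schema-on-read can be sketched in a few lines. This is a toy illustration using in-memory lists, not any platform's actual API; `ORDERS_SCHEMA` and the function names are hypothetical.

```python
import json

# Hypothetical schema for a warehouse table: column name -> required type.
ORDERS_SCHEMA = {"order_id": int, "amount": float}


def write_to_warehouse(table: list, record: dict) -> None:
    """Schema-on-write: reject a record at load time if it breaks the schema."""
    for column, expected in ORDERS_SCHEMA.items():
        if not isinstance(record.get(column), expected):
            raise ValueError(f"{column!r} must be {expected.__name__}")
    table.append({column: record[column] for column in ORDERS_SCHEMA})


def write_to_lake(lake: list, raw: str) -> None:
    """Schema-on-read: store the raw payload untouched, with no validation."""
    lake.append(raw)


def read_amounts(lake: list) -> list:
    """The consumer applies structure at read time and handles bad records."""
    amounts = []
    for raw in lake:
        try:
            amounts.append(float(json.loads(raw)["amount"]))
        except (ValueError, KeyError, TypeError):
            continue  # malformed or incomplete payload: skipped by this reader
    return amounts


warehouse, lake = [], []
write_to_warehouse(warehouse, {"order_id": 1, "amount": 19.99})
for payload in ('{"order_id": 2, "amount": "5.50"}', '{"order_id": 3}', "not json"):
    write_to_lake(lake, payload)  # all three are accepted as-is

print(warehouse)
print(read_amounts(lake))
```

The lake accepts all three payloads, but only one yields a usable amount, and it is the reader that has to discover this. The warehouse path pushes the same work to load time, which is why it demands modeling decisions earlier.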

The data lake emerged as a response to the warehouse’s limitations, offering flexibility, scale, and the ability to store data before deciding exactly how it would be used.

When It Fits

Lakes are well-suited when the organization deals with high data volume and variety: logs, IoT streams, documents, images, and video alongside traditional structured data. When AI/ML workloads require access to raw, unprocessed data for feature engineering and model training. When the organization needs to store data before fully defining its analytical use cases. And when cost-effective storage of large volumes is a priority.

Popular Platforms

Amazon S3 with Athena or EMR, Azure Data Lake Storage with Synapse or Databricks, and Google Cloud Storage with BigQuery or Dataproc.

Trade-Offs

The lake’s greatest strength is also its greatest risk. The flexibility that allows any data in any format also allows ungoverned, undocumented, low-quality data to accumulate indefinitely. Without active governance, a data lake risks becoming a vast, expensive storage layer that is difficult to navigate, where data goes in but useful insights rarely come out. Query performance on raw lake data is typically slower than on a well-modeled warehouse. And unless a semantic layer is introduced, the schema-on-read approach shifts complexity to the downstream consumers who need to make sense of the data, which often means the complexity isn’t managed, just redistributed.

Data Lakehouse

What It Is

A hybrid architecture that combines the flexibility and scale of a data lake with the structure, performance, and governance capabilities of a data warehouse. Data is stored in open formats on lake storage, but a metadata and transaction layer provides warehouse-like features: ACID transactions, schema enforcement, time travel, and optimized query performance. The lakehouse emerged as organizations realized they needed both the lake’s flexibility and the warehouse’s reliability, and didn’t want to maintain two separate systems.
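As a rough illustration of what a metadata and transaction layer adds, the toy class below keeps an append-only list of table versions. It sketches three of the features named above (schema enforcement, all-or-nothing commits, and time travel) in memory only. It is not how any real lakehouse table format is implemented, and `VersionedTable` and its methods are hypothetical names.

```python
class VersionedTable:
    """Toy in-memory table with versioned snapshots (illustrative only)."""

    def __init__(self, schema: dict):
        self.schema = schema       # column name -> required Python type
        self._versions = [[]]      # version 0 is the empty table

    def commit(self, rows: list) -> int:
        """Validate every row, then publish a new version all-or-nothing."""
        for row in rows:
            if set(row) != set(self.schema):
                raise ValueError(f"columns {sorted(row)} do not match schema")
            for column, expected in self.schema.items():
                if not isinstance(row[column], expected):
                    raise ValueError(f"{column!r} must be {expected.__name__}")
        # Nothing is visible until this single append, so a failed batch
        # leaves the table exactly as it was.
        self._versions.append(self._versions[-1] + [dict(r) for r in rows])
        return len(self._versions) - 1

    def read(self, version=None) -> list:
        """Read the latest snapshot, or an earlier one ("time travel")."""
        snapshot = self._versions[-1 if version is None else version]
        return [dict(r) for r in snapshot]


events = VersionedTable({"user_id": int, "event": str})
v1 = events.commit([{"user_id": 1, "event": "login"}])
v2 = events.commit([{"user_id": 2, "event": "purchase"}])
try:
    # The second row violates the schema, so the whole batch is rejected.
    events.commit([{"user_id": 3, "event": "view"}, {"user_id": "x", "event": "view"}])
except ValueError:
    pass

print(len(events.read()))    # rows in the latest version
print(len(events.read(v1)))  # rows as of the first commit
```

Real table formats achieve this with transaction logs and immutable data files on object storage rather than Python lists, but the contract a consumer sees is similar: readers get consistent snapshots, and bad writes never become partially visible.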

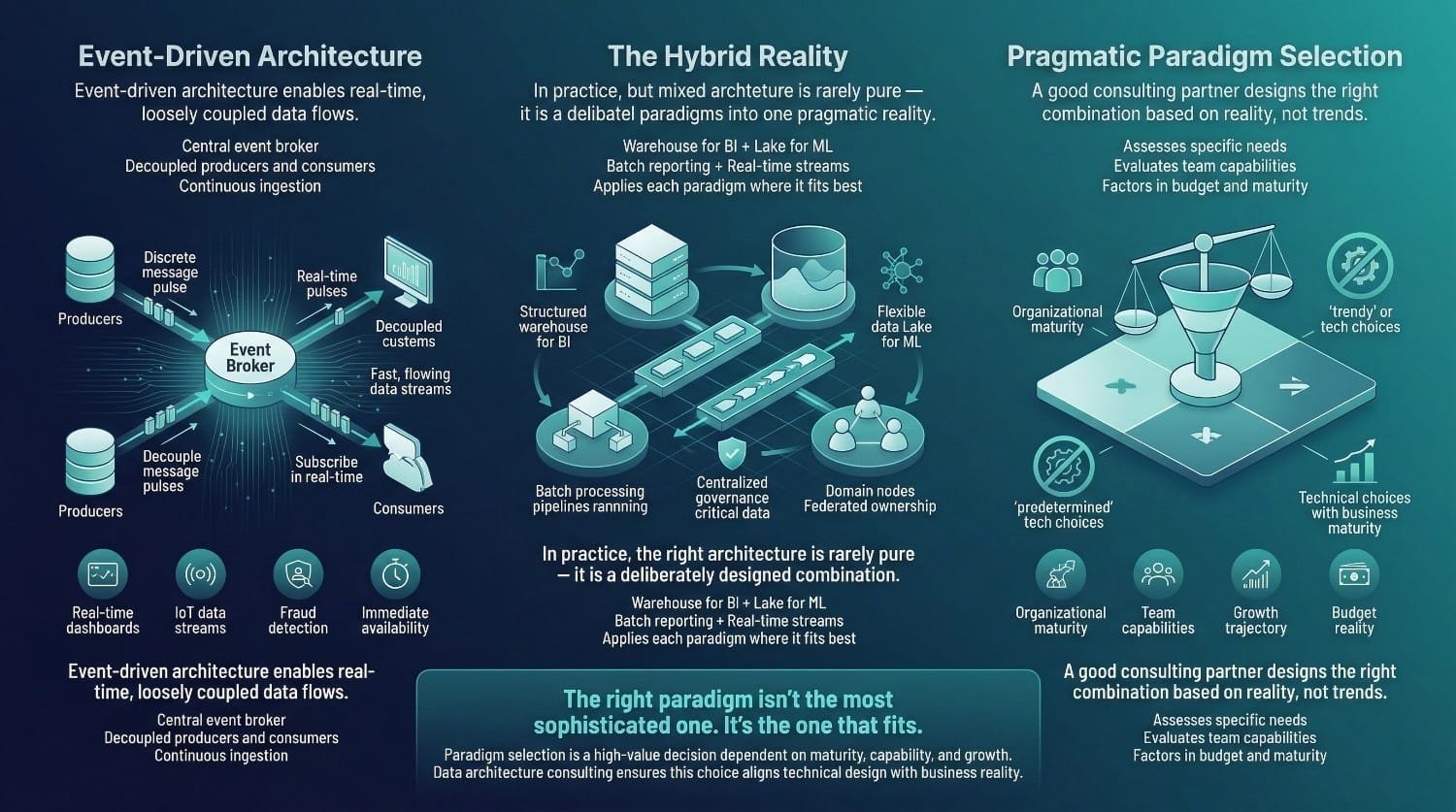

When It Fits