Introduction

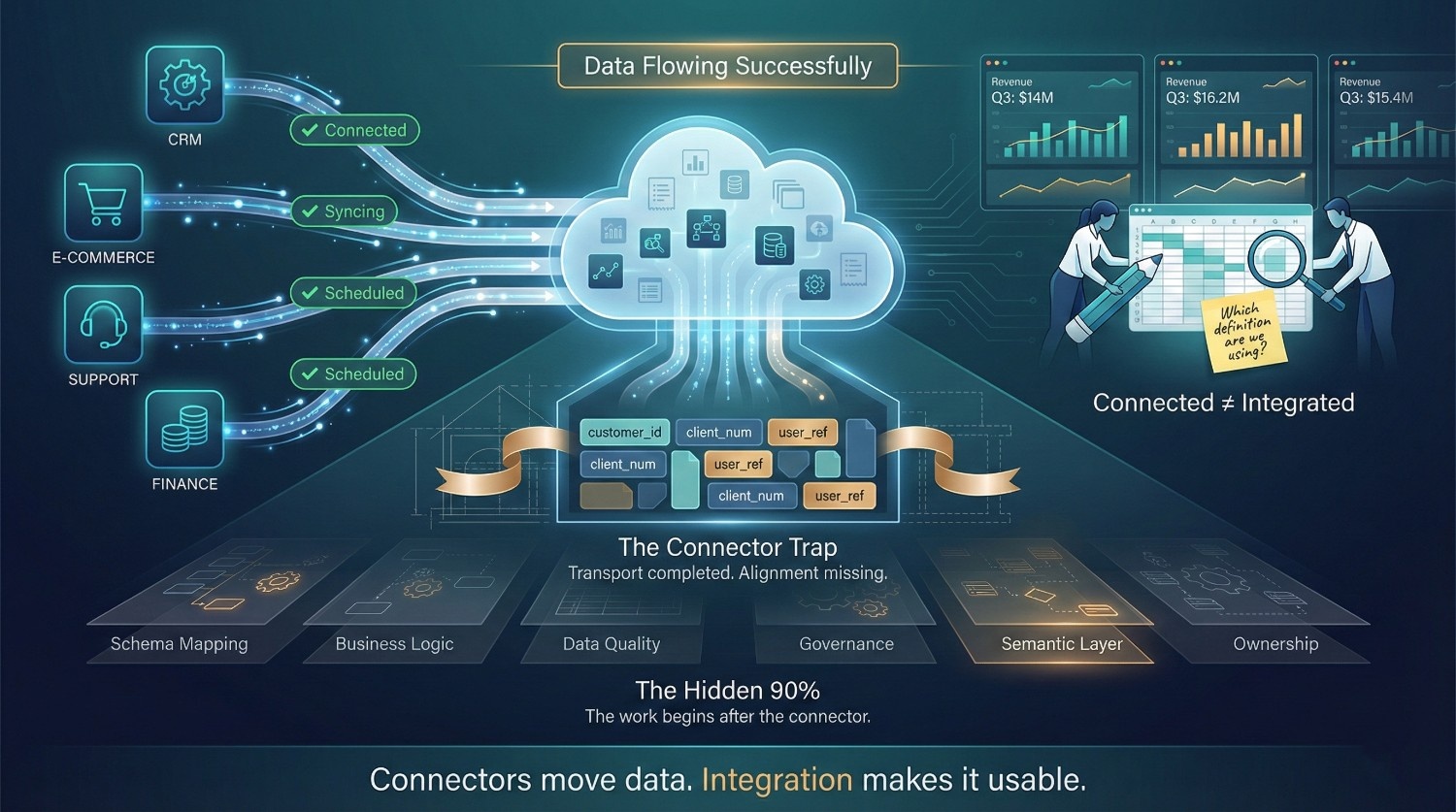

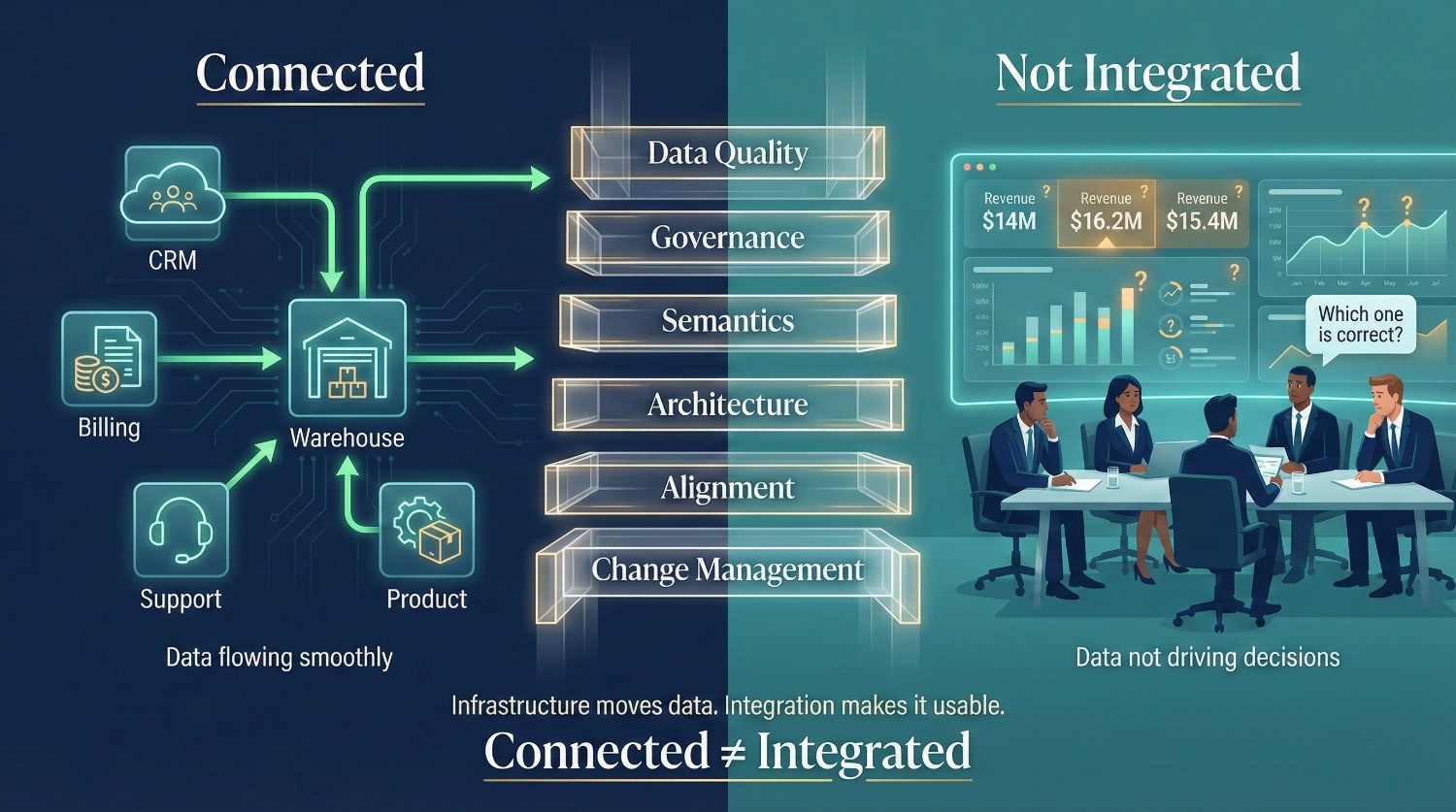

The Connector Trap

There’s a moment in almost every data integration project that feels like a win:

“The connectors are live. Data is flowing. We’re good.”

Salesforce is syncing to Snowflake. Shopify data is landing in BigQuery. HubSpot, Zendesk, NetSuite, all connected, all pushing data on schedule. Green lights across the board. And then, nothing useful happens.

- Reports still don’t match across departments

- Analysts are still spending days cleaning data manually

- The “unified customer view” is still three different versions of the truth

- Leadership still doesn’t trust the numbers

The connectors work perfectly. The project is still failing.

Why This Keeps Happening

Here’s the uncomfortable truth that most tool vendors won’t tell you:

Technical connectivity is a relatively small portion of a successful data integration initiative.

Getting data to flow between systems is typically one of the more straightforward parts compared to aligning definitions, models, and governance, though it can still be complex in legacy or regulated environments. It’s what happens after the data lands that determines whether the project delivers value or becomes an expensive exercise in moving garbage from one place to another.

The rest of the work includes:

- Data quality, is the incoming data clean, complete, and consistent?

- Schema mapping, do fields from different systems actually mean the same thing?

- Business logic, are calculations, hierarchies, and definitions aligned across sources?

- Governance, who owns the data? Who can access it? Where did it come from?

- Organizational alignment, do stakeholders agree on what “success” looks like?

- Architecture, is the data landing in the right place, in the right structure, for the right use case?

None of these are connector problems. All of them are integration problems.

The Hidden Layers Connectors Don't Solve

Working connectors create a dangerous illusion of progress. They make it look like the integration is done when it’s barely started.

What connectors do:

- Extract data from source systems

- Handle API authentication and pagination

- Deliver raw data to a target on a schedule

What connectors don’t do:

- Reconcile conflicting definitions of “customer” across five systems

- Resolve duplicate records created by different departments

- Apply business rules that turn raw data into meaningful metrics

- Ensure data quality or flag anomalies

- Build the semantic layer that makes data trustworthy and queryable

- Align stakeholders on what the integrated data should actually enable

The gap between what connectors deliver and what the business expects is a common failure point in data integration projects, especially when semantic alignment and governance are under-scoped.

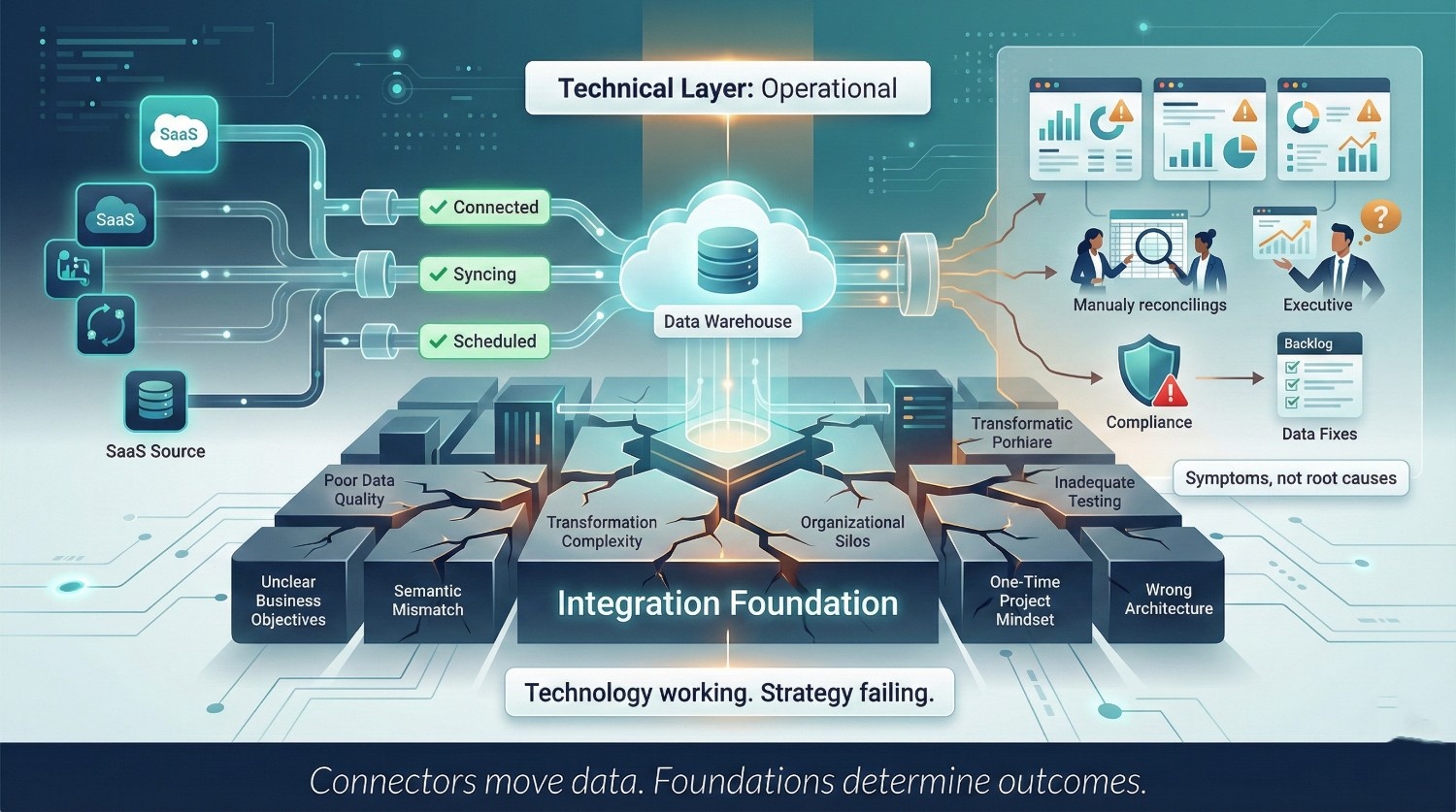

The Real Thesis

Many data integration failures stem less from technical connectivity issues and more from strategic, organizational, and architectural misalignment, though technical debt can amplify these risks. The technology works. The strategy doesn’t. And that disconnect is exactly why experienced data integration consulting exists, to close the gap between “data is flowing” and “data is driving decisions.”

Organizations that treat connectors as the finish line end up with:

- Expensive infrastructure that nobody trusts

- Dashboards that leadership ignores

- Data teams drowning in support tickets instead of delivering insights

- A growing backlog of “data fixes” that never gets smaller

Organizations that treat connectors as the starting line, and invest in the strategic, architectural, and organizational layers beyond them, are the ones that actually get ROI from their data.

What You'll Learn in This Post

By the end of this article, you’ll understand:

- The specific reasons data integration projects fail even when the technical layer is working

- Why problems like data quality, governance, and organizational misalignment kill more projects than bad connectors ever will

- How to recognize the warning signs early, before a failing project burns through its budget

- What a strategic approach to data integration looks like versus a tool-first approach

- Why experienced data integration consulting can materially increase the likelihood that a project delivers business value rather than simply moving data between systems.

This isn’t a vendor comparison or a tool tutorial. It’s a post about why smart organizations still fail at integration, and what to do differently.

Let’s get into it.

The Connector Illusion: Why "Connected" Doesn't Mean "Integrated"

What Connectors Actually Do

Let’s give connectors their due credit. They have significantly reduced the custom engineering effort that used to take weeks or months in many common integration scenarios.

What Modern Connectors Handle

- Authentication, OAuth, API keys, token refresh, all managed automatically

- API communication, handling rate limits, pagination, retries, and endpoint versioning

- Data extraction, pulling data from source systems on a schedule or via CDC

- Schema detection, reading source schemas and creating corresponding tables in the target

- Delivery, landing raw data in a warehouse, data lake, or staging environment

The Platforms Making This Easy

| Platform | What It Offers |
|---|---|
| Fivetran | 300+ pre-built connectors, fully managed ELT, automatic schema handling |
| Airbyte | Open-source connector framework with a rapidly growing library |
| MuleSoft | API-led connectivity for both application and data integration |
| Stitch | Lightweight, developer-friendly ingestion pipelines |
| Hevo | No-code data pipeline platform with real-time sync |

These tools have generally made the mechanical act of connecting systems faster, cheaper, and more reliable for standardized SaaS integrations, though complex or legacy systems can still require substantial custom work.

Why the Plug-and-Play Promise Is Seductive

- Set up a connector in 15 minutes

- See data flowing into your warehouse within the hour

- Demo a working pipeline to leadership by end of day

It feels like the hard part is done. That’s the trap.

What Connectors Don't Do

Here’s everything that happens after the connector is live, and that the connector has absolutely no opinion about.

They Don't Understand Your Business Logic

- Your company calculates “revenue” differently than the default in your ERP

- “Active customer” means one thing in marketing and something else in customer success

- Connectors move the raw fields. They don’t know, or care, what those fields mean to your business.

They Don't Resolve Schema Conflicts

- Salesforce calls it AccountName. Shopify calls it customer_name. Your billing system calls it client_label.

- All three refer to the same entity. The connector doesn’t know that.

- Without explicit mapping, you end up with three disconnected columns in your warehouse, not one unified field.
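To make “explicit mapping” concrete, here is a minimal sketch in Python. The source names, the `CANONICAL_FIELDS` table, and the `to_canonical` helper are all hypothetical, invented for illustration; in a real project the mapping would come out of conversations with the people who own each system.

```python
# Hypothetical mapping from (source system, source column) to one canonical field.
# The column names come from the example above; everything else is illustrative.
CANONICAL_FIELDS = {
    ("salesforce", "AccountName"): "customer_name",
    ("shopify", "customer_name"): "customer_name",
    ("billing", "client_label"): "customer_name",
}


def to_canonical(source: str, record: dict) -> dict:
    """Rename mapped columns to their canonical name; prefix unmapped ones."""
    result = {}
    for column, value in record.items():
        canonical = CANONICAL_FIELDS.get((source, column))
        # Unmapped columns keep a source prefix so nothing is silently dropped.
        result[canonical or f"{source}__{column}"] = value
    return result


rows = [
    to_canonical("salesforce", {"AccountName": "Acme Corp"}),
    to_canonical("shopify", {"customer_name": "Acme Corp", "tags": "vip"}),
    to_canonical("billing", {"client_label": "Acme Corp"}),
]
```

After this step all three records expose the same `customer_name` field, and anything the mapping doesn’t cover stays visible under a prefixed name rather than disappearing.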

They Don't Handle Data Quality

Connectors primarily replicate what the source provides, often with minimal transformation, including:

- Duplicate records created by different teams entering the same customer manually

- Missing values that were never required in the source system

- Stale records that haven’t been updated in years

- Formatting inconsistencies, USA vs. US vs. United States vs. U.S.A.

If the source data is messy, the connector delivers messy data. Perfectly. On schedule.
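A quick profiling pass makes these issues visible before anyone builds on top of them. The sketch below is illustrative only: the alias table, the field names (`name`, `country`, `email`), and the matching rule (same name and country after normalization) are assumptions, and real deduplication usually needs much fuzzier matching.

```python
# Assumed alias table for the country variants mentioned above.
COUNTRY_ALIASES = {"usa": "US", "us": "US", "united states": "US", "u.s.a.": "US"}


def normalize_country(raw):
    """Map known spellings to one code; leave unknown values untouched."""
    if raw is None:
        return None
    cleaned = raw.strip()
    return COUNTRY_ALIASES.get(cleaned.lower(), cleaned)


def profile(records):
    """Count records with no email and records that repeat a (name, country) pair."""
    seen = set()
    report = {"missing_email": 0, "duplicates": 0}
    for rec in records:
        if not rec.get("email"):
            report["missing_email"] += 1
        key = (rec["name"].strip().lower(), normalize_country(rec.get("country")))
        if key in seen:
            report["duplicates"] += 1
        seen.add(key)
    return report


records = [
    {"name": "Acme Corp", "country": "USA", "email": "ops@acme.example"},
    {"name": "acme corp ", "country": "U.S.A.", "email": None},
    {"name": "Globex", "country": "United States", "email": ""},
]
report = profile(records)
```

On this sample, `report` shows two records without an email and one duplicate, which a row count alone would never reveal.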

They Don't Define Transformation or Enrichment Rules

- How should these 47 raw tables be modeled for analytics?

- Which fields need to be normalized? Which need to be derived?

- What business rules should be applied during transformation?

- What enrichment is needed, geocoding, currency conversion, segmentation?

These are integration decisions. Connectors are typically not responsible for making these decisions; they focus on transport rather than semantic modeling or business rule enforcement.

They Don't Align Data with Business Outcomes

- Which KPIs should this data support?

- What questions should the integrated dataset be able to answer?

- Who are the consumers, analysts, executives, ML engineers, compliance teams?

- What decisions will be made based on this data?

Connectors generally operate without awareness of downstream business context; their primary responsibility is reliable data movement. This is the fundamental gap that data integration consulting is designed to fill, connecting the technical plumbing to actual business outcomes.

The Dangerous Gap

The Illusion of Progress

There’s a predictable pattern in failed integration projects:

- Week 1, Connectors are configured. Data starts flowing. The team celebrates.

- Week 4, Analysts start querying the warehouse. The data is raw, inconsistent, and confusing.

- Week 8, Stakeholders ask why the dashboards still don’t work. The data team scrambles.

- Week 12, Everyone realizes the “integration” was just ingestion. The real work hasn’t started.

- Month 6, Budget is burned. Trust is eroded. Someone suggests starting over.

The root cause is always the same: the organization mistook connectivity for integration.

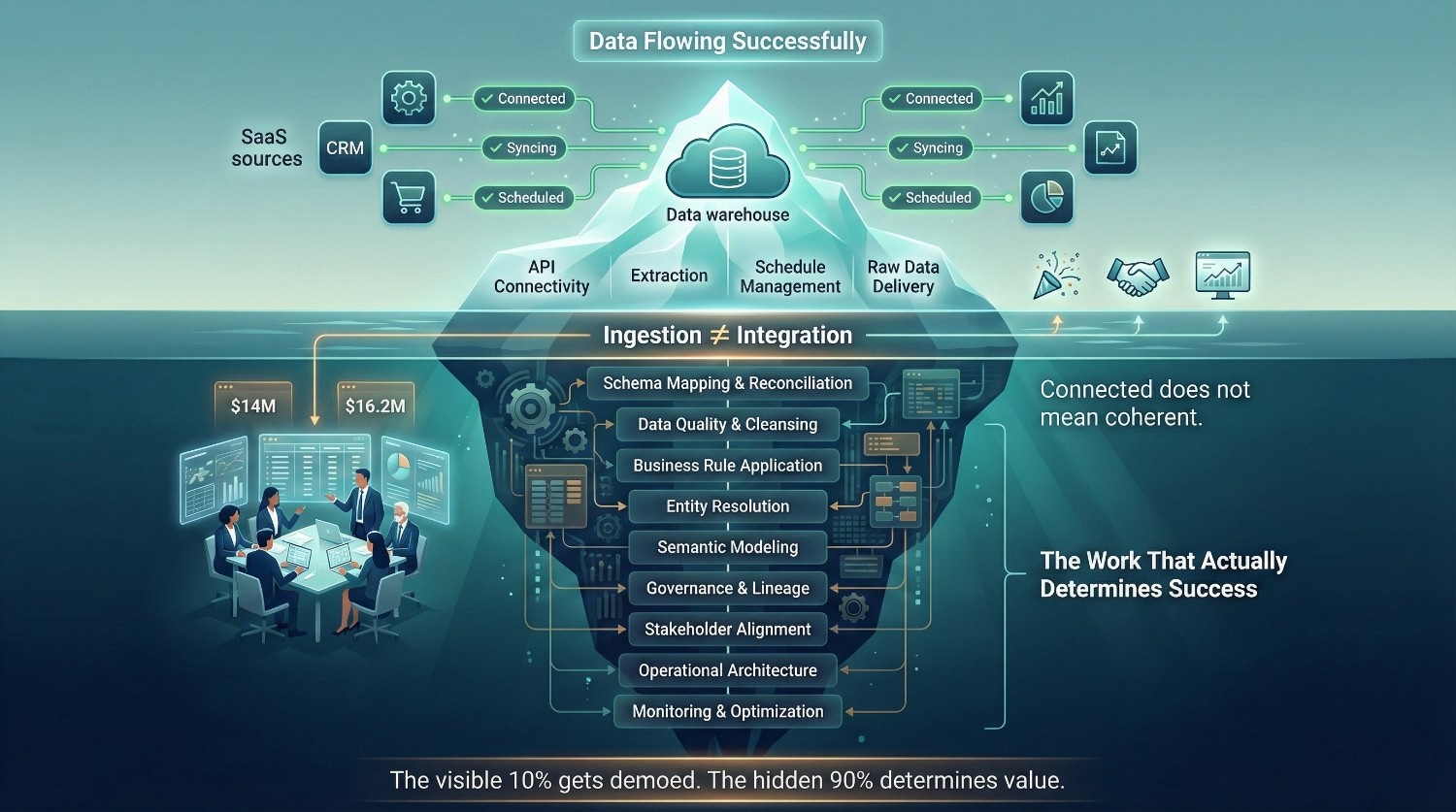

The Iceberg Analogy

Connectors are often the visible portion of the effort, while the majority of complexity lies in modeling, governance, and alignment beneath the surface. What’s beneath the surface:

| Visible (Connectors) | Hidden (Everything Else) |
|---|---|
| API connectivity | Schema mapping and reconciliation |
| Data extraction | Data quality and cleansing |
| Schedule management | Business rule application |
| Schema detection | Entity resolution and deduplication |
| Raw data delivery | Governance, lineage, and access control |
| Consumption / Modeling | Semantic modeling and metric definitions |
| Organizational governance | Stakeholder alignment and change management |
| Operational architecture | Ongoing monitoring and optimization |

The visible part is what gets demoed. The hidden part is what determines whether the project actually delivers value.

Why Stakeholders Get Fooled

- Connectors produce tangible, demonstrable output quickly, data in tables, rows being synced

- The harder work, mapping, cleansing, modeling, governing, is invisible and incremental

- Leadership naturally equates “data is flowing” with “data is ready”

- Nobody wants to hear that the project is 10% done when money has already been spent

This perception gap is one of the most common triggers for bringing in data integration consulting, often after the initial “connectors-only” approach has already stalled. Engaging experienced expertise before large-scale ingestion begins can reduce rework and architectural drift; engaging later is still possible but typically more expensive to correct.

The Real Reasons Data Integration Projects Fail

Connectors working. Data flowing. Project still failing. If technology isn’t the problem, what is?

Across many failed and recovered integration initiatives, consistent patterns tend to emerge. These failures frequently trace back to strategic, organizational, and architectural gaps, rather than the operational status of a specific connector.

This is the section that explains why, and it’s the core of what data integration consulting is built to address.

Lack of Clear Business Objectives

The Root Problem

“We need to integrate our systems.”

That’s not a goal. That’s a technology activity disguised as a strategy. Compare it to:

“We need a unified customer view across CRM, support, and billing so we can reduce churn by 15% within 12 months.”

That’s a goal. It has a measurable outcome, a defined scope, and clear success criteria.

What Happens Without Clear Objectives

- Scope creep, without a defined target, everything becomes a priority and nothing gets finished

- Misaligned priorities, engineering builds what’s technically interesting; the business needs something different entirely

- No way to measure success, the project runs for 18 months and nobody can say whether it worked

- Abandoned projects, leadership loses patience because they can’t see ROI

The Fix

Start with outcomes, not tools. Every integration initiative should begin with:

- What business decisions will this data support?

- What questions should the integrated dataset answer?

- What does success look like, in numbers, not vague descriptions?

- Who are the consumers of this data and what do they need?

Establishing measurable business objectives is a foundational step in mature data integration consulting engagements, because architectural and modeling decisions depend on it. Get the objectives wrong and it doesn’t matter how good your architecture is.

Poor Data Quality Across Source Systems

The Root Problem

Most connectors prioritize replication over cleansing or validation, though some offer limited transformation or basic data handling features. If your source systems are full of garbage, your connector will deliver that garbage to your warehouse, on time, every time.

Common Quality Issues That Kill Integration

| Issue | Example | Impact on Integration |
|---|---|---|
| Duplicate records | Same customer entered manually in two CRMs | Entity resolution becomes impossible without deduplication |
| Inconsistent formats | Dates as MM/DD/YYYY, YYYY-MM-DD, and DD-Mon-YY across systems | Joins and comparisons fail silently |
| Orphaned foreign keys | Orders referencing customer IDs that no longer exist | Referential integrity breaks in the unified model |
| Missing mandatory fields | Email blank in 30% of CRM records | Customer matching and identity resolution lose accuracy |
| Stale records | Addresses last updated in 2019 | Downstream analytics based on outdated information |

The Compounding Effect

Here’s what makes data quality especially dangerous in integration:

- Bad data from one source doesn’t just affect that source’s data, it corrupts the entire unified view

- A duplicate customer record from Salesforce creates a false match with a Zendesk ticket, which inflates support metrics, which skews the churn model

- One bad input cascades across every downstream system that consumes the integrated data

Why Quality Problems Surface Late

- Source systems often tolerate bad data, they have workarounds, manual processes, and tribal knowledge that compensate

- It’s only when data from multiple systems is combined that conflicts, gaps, and inconsistencies become visible

- By then, the project is already past the planning stage and into expensive debugging

The Fix

Data profiling and quality assessment should ideally begin before large-scale integration design and continue iteratively throughout the project lifecycle. This is a fundamental principle of data integration consulting: assess the state of your data before designing the architecture that depends on it.

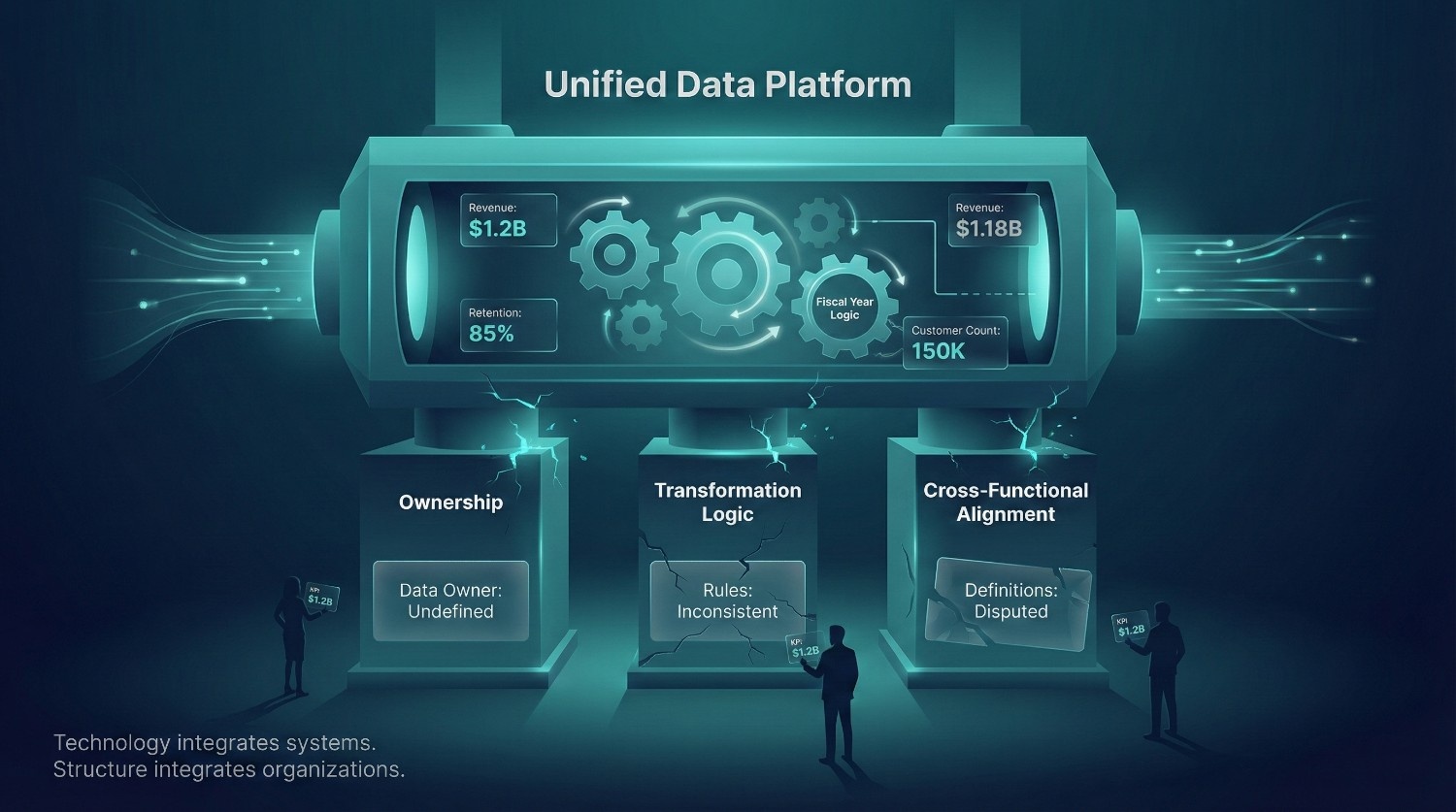

Schema and Semantic Mismatches

The Root Problem

Different systems model the world differently. That’s not a bug, it’s a fundamental reality of enterprise software. But when you try to integrate data from these systems, every difference becomes a conflict that must be resolved.

The Two Core Mismatch Types

Same field, different meaning:

The revenue field in your sales system includes tax. The revenue field in your finance system excludes tax. Both are technically correct. Neither can be used as-is in an integrated view without explicit business rule application.

Different field, same meaning:

Salesforce has client_id. Shopify has customer_number. Your billing platform has account_id. All three refer to the same real-world entity, but the connector doesn’t know that.

Other Common Schema Conflicts

- Hierarchical vs. flat structures, your ERP models customers in a parent-child hierarchy; your CRM stores them flat

- Date/time formats, 2025-01-15, 01/15/2025, 15-Jan-2025, and timestamps with or without timezone offsets

- Currency codes, some systems store amounts in local currency, others in USD, some don’t store the currency code at all

- Units of measure, kilograms vs. pounds, liters vs. gallons, different rounding conventions
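The date-format conflict is the easiest of these to illustrate. The following sketch normalizes the three formats listed above to a single `date` value. The `KNOWN_FORMATS` list and the `parse_date` helper are hypothetical, and the code assumes slash-separated dates are month-first and that month abbreviations are in English; both are decisions someone has to confirm per source, not something the code can infer.

```python
from datetime import date, datetime

# Formats assumed to appear in the sources: 2025-01-15, 01/15/2025, 15-Jan-2025.
# Slash dates are treated as month-first, which must be verified for each source.
KNOWN_FORMATS = ("%Y-%m-%d", "%m/%d/%Y", "%d-%b-%Y")


def parse_date(raw: str) -> date:
    """Try each known format in order; fail loudly instead of guessing."""
    for fmt in KNOWN_FORMATS:
        try:
            return datetime.strptime(raw.strip(), fmt).date()
        except ValueError:
            continue
    raise ValueError(f"Unrecognized date format: {raw!r}")


parsed = [parse_date(s) for s in ("2025-01-15", "01/15/2025", "15-Jan-2025")]
```

Raising on an unknown format is a deliberate choice here: a rejected record gets investigated, while a silently misparsed date does not.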

Why Automation Helps But Doesn't Solve It

AI-powered schema matching tools are getting better at suggesting mappings. But they can’t:

- Know that your company’s definition of “active customer” changed last quarter

- Understand that revenue means different things in different departments

- Resolve a conflict where both systems are “correct” according to their own business logic

Human domain expertise remains critical in resolving semantic conflicts, even as automated schema matching tools continue to improve. This is one of the highest-value activities in any data integration consulting engagement, sitting with business stakeholders and making the mapping decisions that no tool can automate.

Missing Data Governance and Ownership

The Root Problem

“Who owns this data?”

Silence. Without clearly defined ownership, accountability for accuracy, completeness, and trustworthiness often becomes fragmented. And when nobody is accountable, everybody builds their own workaround.

What Governance Gaps Look Like

- No data stewards or data owners, nobody is responsible for resolving conflicts or maintaining quality

- No golden record logic, when Salesforce says the customer’s address is X and the billing system says Y, there’s no rule for which one wins

- No lineage tracking, a number appears in an executive dashboard and nobody can trace where it came from or how it was calculated

- No access controls, sensitive data lands in the warehouse with the same permissions as everything else

The Downstream Consequences

- Shadow systems, teams build their own spreadsheets and databases because they don’t trust the “official” integrated data

- Conflicting metrics, three departments report three different numbers for the same KPI

- Compliance risk, regulators ask for data provenance and you can’t provide it

- Decision paralysis, leadership stops using data for decisions because they don’t know which numbers to trust

The Fix

Governance isn’t a nice-to-have that you add later. It’s structural infrastructure that needs to be designed alongside the integration architecture:

- Define data owners for every key domain (customer, product, financial, operational)

- Establish golden record rules before building transformation logic

- Implement lineage tracking from source through every transformation to final consumption

- Set access controls based on data classification, not convenience

Mature data integration consulting engagements typically formalize ownership models, conflict resolution rules, and accountability structures to operationalize governance.
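Golden record rules can be as simple as a written priority order per field. Here is a minimal sketch, assuming a hypothetical `SOURCE_PRIORITY` table in which billing wins for addresses and Salesforce wins for email. The priorities are invented for illustration; the real ones are a business decision made by the data owners.

```python
# Hypothetical survivorship rules: for each field, sources from most to least trusted.
SOURCE_PRIORITY = {
    "address": ["billing", "salesforce"],
    "email": ["salesforce", "billing"],
}


def golden_record(candidates: dict) -> dict:
    """Merge per-source records and note which source supplied each value.

    `candidates` maps a source name to that source's record for one customer.
    """
    values, lineage = {}, {}
    for field, sources in SOURCE_PRIORITY.items():
        for source in sources:
            value = candidates.get(source, {}).get(field)
            if value:  # skip missing or empty values and fall through to the next source
                values[field] = value
                lineage[field] = source
                break
    return {"values": values, "lineage": lineage}


result = golden_record({
    "salesforce": {"address": "1 Old Road", "email": "pat@example.com"},
    "billing": {"address": "9 New Street", "email": ""},
})
```

Keeping the `lineage` alongside the merged values means that when someone asks where a number came from, there is an answer.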

Underestimating Transformation Complexity

The Root Problem

“We’ll just load the raw data and let analysts figure it out.”

This sounds pragmatic. In practice, it transfers the hardest part of integration onto the people least equipped to handle it, and guarantees inconsistency because every analyst will “figure it out” differently.

What Transformation Actually Involves

It’s not just renaming fields and changing date formats. Real-world transformation includes:

- Fiscal year definitions, your company’s fiscal year starts in April, not January. Every date-based metric needs adjustment.

- Territory mappings, assigning customers to sales territories based on address, region, or account hierarchy

- Customer segmentation logic, defining enterprise vs. mid-market vs. SMB based on revenue, employee count, or contract value

- Calculated metrics, ARR, MRR, net retention, LTV, none of which exist as raw fields in any source system

- Currency conversion, historical exchange rates applied to transaction data for accurate cross-region reporting
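Even the first item on that list hides decisions. The sketch below derives a fiscal year and quarter for a fiscal year that starts in April. Labeling the fiscal year by the calendar year in which it ends is an assumed convention (some companies label by the starting year), and the function names are illustrative.

```python
from datetime import date

FISCAL_YEAR_START_MONTH = 4  # April, as in the example above


def fiscal_year(d: date) -> int:
    """Fiscal year label, assuming the year is named after the calendar year it ends in."""
    return d.year + 1 if d.month >= FISCAL_YEAR_START_MONTH else d.year


def fiscal_quarter(d: date) -> int:
    """Quarter 1 covers the first three months of the fiscal year (April to June here)."""
    return ((d.month - FISCAL_YEAR_START_MONTH) % 12) // 3 + 1


last_day = date(2025, 3, 31)
first_day = date(2025, 4, 1)
labels = [(fiscal_year(d), fiscal_quarter(d)) for d in (last_day, first_day)]
```

Under these assumptions, 31 March 2025 falls in Q4 of fiscal 2025 and 1 April 2025 falls in Q1 of fiscal 2026. If every analyst writes this logic separately, some of them will pick the other convention.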

The Long Tail of Edge Cases

In practice, a disproportionate amount of effort is often spent handling edge cases and exceptions in transformation logic:

- What happens when a customer has addresses in two countries?

- How do you handle a record with a negative revenue value?

- What if a field that’s supposed to be mandatory is null in 12% of records?

- How do you treat a customer who exists in the CRM but has never transacted?

Every edge case that isn’t handled explicitly becomes a silent error in production.

The Fix

Transformation logic should be:

- Documented, business rules written down, reviewed, and approved by stakeholders

- Version-controlled, managed in tools like dbt, not buried in stored procedures

- Tested, automated tests for every business rule and edge case

- Iterative, built incrementally, validated with real data, and refined over time

This is often the most labor-intensive phase of any data integration consulting project, and the phase where cutting corners creates the most long-term damage.

Organizational Silos and Political Resistance

The Root Problem

Data integration is a people problem as much as a technology problem. And people don’t always want to integrate.

What Political Resistance Looks Like

- Departments that won’t share data, “That’s our data, we don’t want other teams seeing it”

- Conflicting metric definitions, Marketing defines “lead” as anyone who filled out a form. Sales defines “lead” as someone with budget authority. Neither will budge.

- Turf wars over source of truth, Finance insists their system is authoritative for revenue. Sales insists theirs is. Both escalate.

- Passive resistance, teams agree to the integration project in meetings but continue using their old processes and spreadsheets

Why This Kills Projects

- Integration requires agreement on shared definitions, shared standards, and shared ownership

- When departments won’t align, you end up building integration logic based on compromises that satisfy nobody

- Users revert to old workflows because the integrated system doesn’t match how they actually work

- The “unified” system becomes just another data source that nobody trusts

The Fix

- Executive sponsorship, integration projects need top-down mandate, not just bottom-up engineering effort

- Cross-functional governance, representatives from every consuming department involved in defining standards and resolving conflicts

- Change management, training, communication, and feedback loops so teams actually adopt the integrated system

- Quick wins, demonstrate value early to build organizational buy-in before tackling the harder political battles

Experienced data integration consulting engagements typically treat organizational alignment as equally important to technical design and plan for both workstreams from the outset.

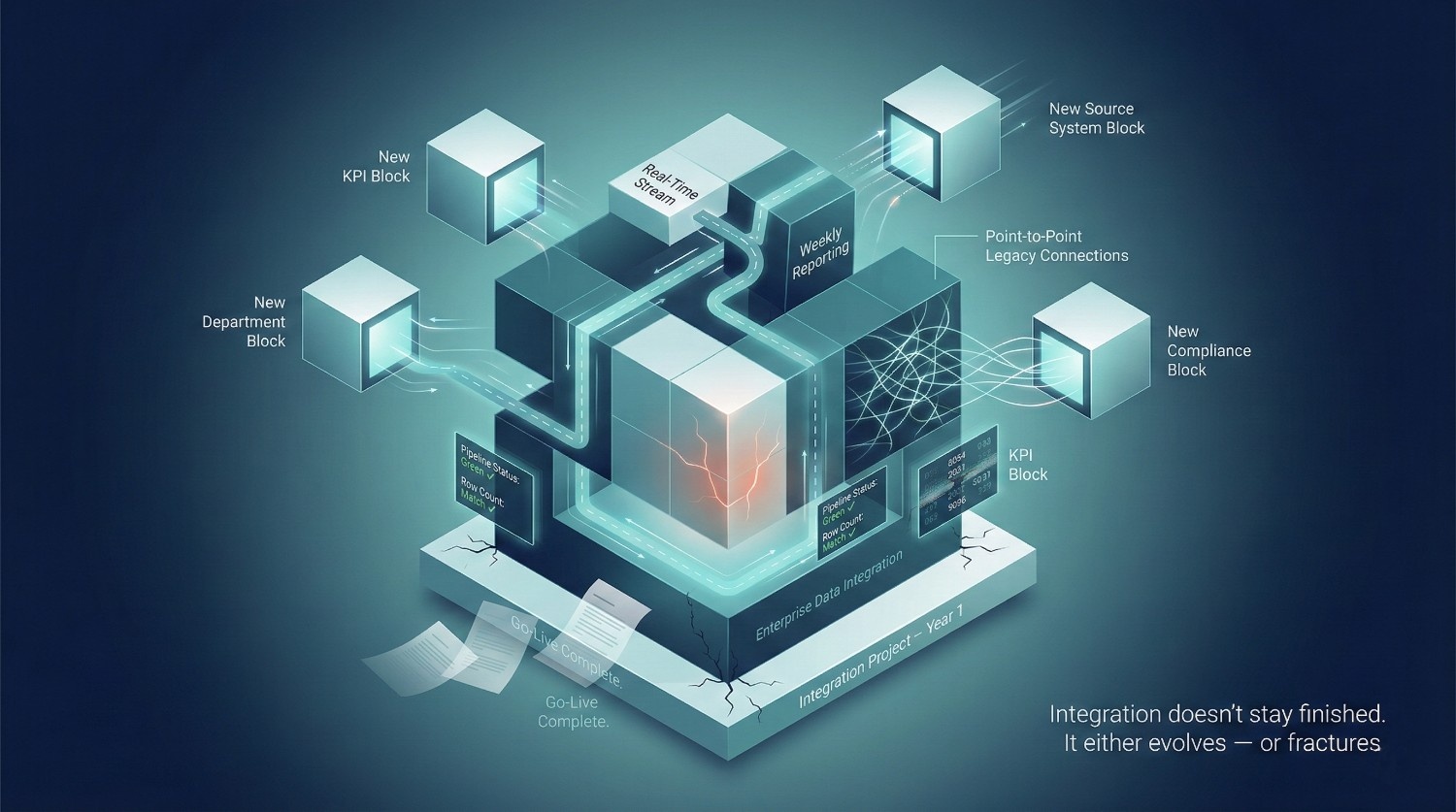

Treating Integration as a One-Time Project

The Root Problem

“We finished the integration project last year. We’re good.”

You may have been aligned last year, but systems, requirements, and business context evolve continuously.

What Changes After Go-Live

- Source systems evolve, APIs get deprecated, schemas add new fields, vendors change their data models

- Business requirements shift, new KPIs, new segments, new products, new regulations

- New data sources appear, the company adopts a new CRM, acquires another business, launches a new channel

- Data quality degrades, without active monitoring, quality issues creep back in

The Concept of Data Debt

Just like technical debt in software, data debt accumulates silently:

- Transformation logic that’s no longer aligned with current business rules

- Connectors syncing tables that nobody uses anymore

- Governance policies that haven’t been updated in 18 months

- Documentation that’s outdated the moment someone changes a pipeline

Data debt tends to accumulate over time, and remediation becomes more complex and costly the longer misalignments persist.

The Fix

Treat integration as a program, not a project:

| Project Mindset | Program Mindset |
|---|---|
| Has a start and end date | Continuous and evolving |
| Success = go-live | Success = sustained business value |
| Team disbands after launch | Dedicated team maintains and evolves |
| Budget is one-time | Budget is ongoing (and planned for) |
| Testing happens once | Monitoring and validation are continuous |

Data integration consulting isn’t just about building the initial architecture. The best engagements include operationalization, setting up the monitoring, maintenance, and evolution frameworks that keep integration alive and valuable long after the consultants leave.

Inadequate Testing and Validation

The Root Problem

Most integration pipelines are tested for one thing: did the pipeline run successfully?

That is operational validation, not data testing. It confirms that the pipeline ran, but it tells you nothing about whether the data it delivered is correct.

What Inadequate Testing Looks Like

- Pipeline ran without errors → tested

- Row counts match between source and target → not tested

- Transformed values are accurate → not tested

- Business rules were applied correctly → not tested

- Edge cases handled properly → not tested

- Business users validated the output → not tested

Common Testing Gaps

| Gap | Example | Consequence |
|---|---|---|
| No source-to-target reconciliation | 100,000 records in source, 98,743 in target, nobody notices | Silent data loss |
| No edge case testing | Null values in a required field cause downstream joins to silently drop records | Understated metrics |
| No timezone validation | Events recorded in UTC but displayed in local time without conversion | Inaccurate time-series reporting |
| No business user validation | Finance never reviewed the revenue calculations before go-live | Wrong numbers hit the board deck |
| No high-volume testing | Pipeline works with 10,000 rows but fails at 10 million | Production crash on first real load |
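The timezone gap is easy to underestimate, so here is a small illustration of how one event lands on two different reporting days. The fixed UTC-5 offset and the `reporting_day` helper are assumptions made for the example; a production pipeline would normally use a named timezone so that daylight saving changes are handled.

```python
from datetime import datetime, timedelta, timezone

# Assumed reporting timezone: a fixed UTC-5 offset, chosen only for illustration.
REPORTING_TZ = timezone(timedelta(hours=-5))


def reporting_day(event_utc: datetime) -> str:
    """Return the calendar day the event belongs to in the reporting timezone."""
    if event_utc.tzinfo is None:
        # A timestamp without an offset is ambiguous; refuse to guess.
        raise ValueError("timestamp has no timezone information")
    return event_utc.astimezone(REPORTING_TZ).date().isoformat()


event = datetime(2025, 1, 16, 2, 30, tzinfo=timezone.utc)
utc_day = event.date().isoformat()   # day bucket if the UTC value is used as-is
local_day = reporting_day(event)     # day bucket after conversion
```

The same event counts toward 16 January when bucketed in UTC and 15 January after conversion, which is enough to shift a daily trend line.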

The Trust Problem

Here’s the real cost of inadequate testing:

The first time a stakeholder finds a wrong number in an integrated dashboard, trust is broken. Rebuilding trust in data can take significantly longer than establishing it initially, particularly in executive environments.

The Fix

Testing should cover three layers:

- Technical validation, pipeline success, row counts, schema conformance, performance benchmarks

- Data accuracy validation, source-to-target reconciliation, transformation rule verification, edge case coverage

- Business validation, stakeholders review output data against their domain knowledge before go-live

Data integration consulting engagements build testing frameworks as a core deliverable, not an afterthought. A pipeline that runs successfully but produces incorrect data can create more long-term damage than one that fails visibly and triggers investigation.
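As a starting point for the data accuracy layer, source-to-target reconciliation can be sketched in a few lines. The `reconcile` function, the `id` key, and the `amount_cents` field are hypothetical; the example also assumes keys are unique within each side, since building a dictionary per side would hide duplicates.

```python
def reconcile(source_rows, target_rows, key="id", field="amount_cents"):
    """Compare two row sets by key and report counts, gaps, and value differences."""
    # Assumes `key` is unique per side; duplicate keys would collapse here.
    source = {row[key]: row for row in source_rows}
    target = {row[key]: row for row in target_rows}
    shared = source.keys() & target.keys()
    return {
        "source_count": len(source),
        "target_count": len(target),
        "missing_in_target": sorted(source.keys() - target.keys()),
        "unexpected_in_target": sorted(target.keys() - source.keys()),
        "value_mismatches": sorted(
            k for k in shared if source[k][field] != target[k][field]
        ),
    }


source_rows = [
    {"id": 1, "amount_cents": 1000},
    {"id": 2, "amount_cents": 2500},
    {"id": 3, "amount_cents": 400},
]
target_rows = [
    {"id": 1, "amount_cents": 1000},
    {"id": 2, "amount_cents": 2050},
]
report = reconcile(source_rows, target_rows)
```

Here the pipeline “succeeded”, yet the report shows one record missing from the target and one amount that changed in flight. A check like this can run after every load and fail the job when any list is non-empty.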

Wrong Architecture Choices

The Root Problem

Architecture decisions are often made early, and their consequences can last for years, making later changes costly and disruptive.

Common Architecture Mistakes

Batch when real-time was needed:

- The business needs up-to-the-minute inventory data. The architecture refreshes nightly.

- By the time data arrives, it’s already stale, and decisions based on it are already wrong.

Real-time when batch was sufficient:

- The team built a Kafka-based streaming architecture for a reporting use case that only runs weekly.

- Massive over-investment in infrastructure, complexity, and maintenance, for no additional business value.

Point-to-point spaghetti:

- Every source wired directly to every target: 15 sources × 15 targets = 225 potential connections

- Adding one new source means building connections to every existing target

- A maintenance nightmare that becomes untouchable within 12 months
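The arithmetic behind that list is worth spelling out. The sketch below compares point-to-point wiring with routing everything through one shared layer, such as a central warehouse. The shared-layer alternative is an assumption added here for comparison, not something this article prescribes, and the function names are illustrative.

```python
def point_to_point_links(sources: int, targets: int) -> int:
    """Every source wired directly to every target."""
    return sources * targets


def shared_layer_links(sources: int, targets: int) -> int:
    """Each source and each target connects once to a shared layer (assumed alternative)."""
    return sources + targets


current = point_to_point_links(15, 15)
cost_of_new_source_p2p = point_to_point_links(16, 15) - current
cost_of_new_source_shared = shared_layer_links(16, 15) - shared_layer_links(15, 15)
```

With 15 sources and 15 targets, point-to-point wiring means 225 links and every new source adds 15 more, while the shared-layer layout adds one. The gap widens with every system you add.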

Tool that fits today but can’t scale:

- Chose a lightweight tool for three data sources. Now there are thirty.

- The tool can’t handle the volume, the complexity, or the governance requirements, but everything depends on it.

Over-engineering a simple problem:

- Built a full data mesh architecture for a 50-person company with three data sources

- The overhead of maintaining the architecture exceeds the value it delivers

Under-engineering a complex problem:

- Treated a multi-source, multi-department, compliance-heavy integration as a “quick ETL project”

- Realized six months in that the foundation can’t support the requirements

The Fix

Architecture decisions should be driven by:

- Current requirements, what do you need today?

- Foreseeable scale, where will you be in 2-3 years?

- Organizational maturity, do you have the team to operate this architecture?

- Use case requirements, does this need real-time, near real-time, or batch?

- Governance needs, what compliance and security requirements must the architecture support?

Early architectural decisions are among the highest-leverage elements of data integration consulting because they shape scalability, governance, and long-term maintainability. A good architecture makes everything easier. A bad one makes everything harder. And by the time you realize it’s bad, you’ve already built on top of it.

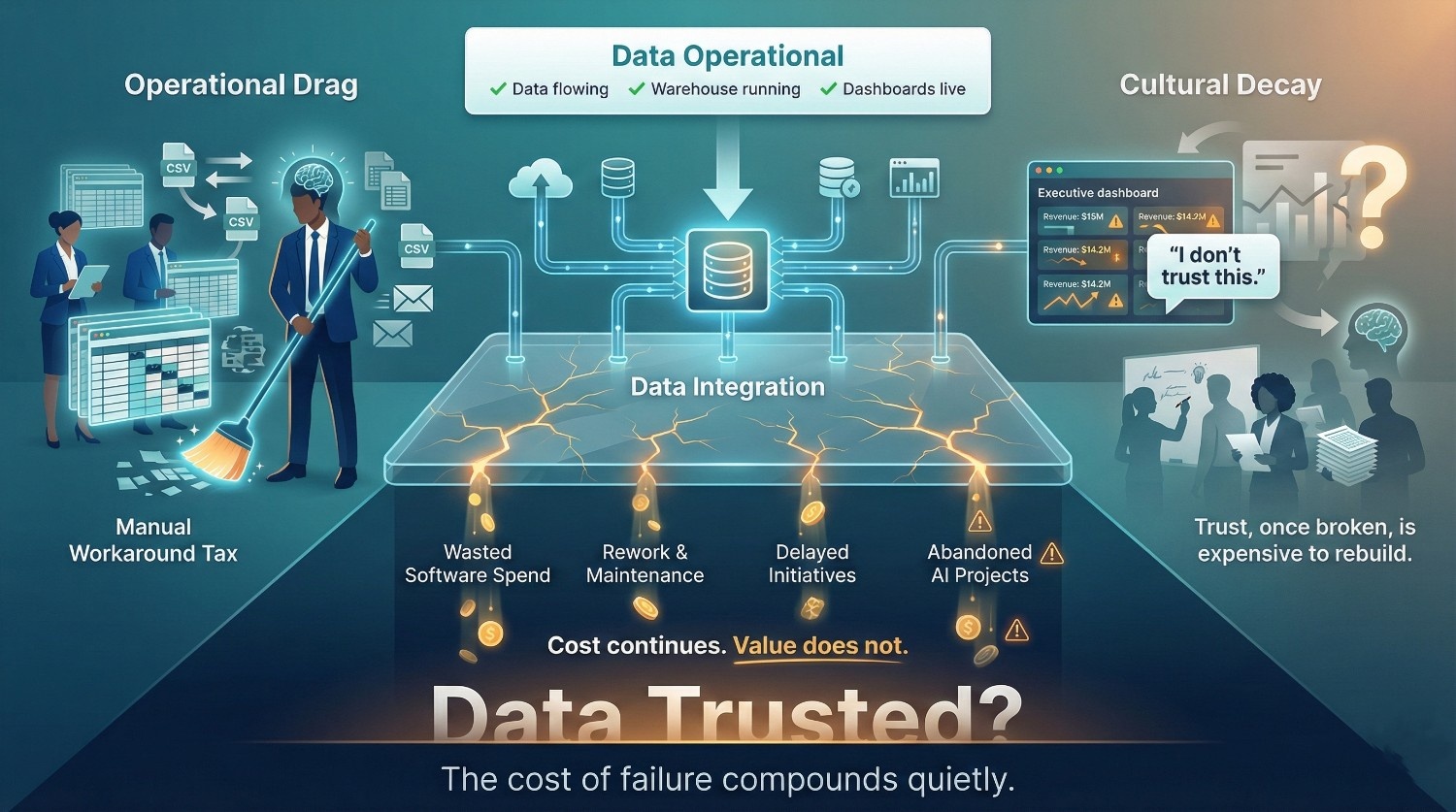

The Hidden Cost of Failed Data Integration

Failed data integration rarely presents as a single catastrophic event. More often, it erodes value gradually through wasted spend, manual workarounds, suboptimal decisions, and declining trust. By the time leadership realizes the integration project isn’t delivering, the damage is already deep.

This is the section most organizations don’t want to confront, but it’s the one that makes the business case for doing integration right the first time.

Financial Impact

The dollar cost of failed data integration is staggering, and most of it is invisible on any single line item.

Where the Money Goes

Wasted Software and Infrastructure Spend

- Licenses for connector platforms, warehouse compute, orchestration tools, all running, all billing, none delivering business value

- Many enterprises pay for multiple overlapping data tools that were never integrated into a coherent architecture

- Infrastructure costs don’t pause just because the project isn’t working

Engineering Hours Burned on Rework

- Data engineers rebuilding broken pipelines, debugging transformation logic, and manually reconciling data across systems

- Organizations average 897 applications, but only 29% are integrated (ref: Integrate.io); every disconnected system creates ongoing engineering overhead

- Engineers spending the majority of their time on maintenance rather than new capabilities represent an opportunity cost that materially reduces innovation capacity.

Delayed Business Initiatives

- Analytics projects stalled because the underlying data isn’t trustworthy

- AI/ML initiatives abandoned because training data isn’t clean or unified; Gartner predicts that through 2026, organizations will abandon 60% of AI projects unsupported by AI-ready data

- Personalization engines that can’t personalize because the customer data is fragmented

- Every month of delay is a month of missed revenue, missed efficiency, and competitive disadvantage

The Numbers That Should Concern You

| Stat | Source |
|---|---|
| Poor data quality costs organizations an average of $12.9 million per year | Gartner |
| Poor data quality costs US businesses an estimated $3.1 trillion annually | IBM |
| Up to 85% of big data projects fail to deliver successful outcomes | Gartner |
| At least 30% of GenAI projects will be abandoned after proof of concept due to poor data quality and unclear business value | Gartner |
| 80% of data and analytics governance initiatives will fail by 2027 | Gartner |

These aren’t edge cases. They’re the statistical norm. And at the root of most of these failures is the same issue: data was moved but never properly integrated. Data integration consulting exists to prevent exactly this, aligning spend with outcomes before the budget is committed, not after it’s burned.

Operational Impact

Even when failed integration doesn’t blow up visibly, it slows daily operations in ways that compound over time.

Decisions Made on Bad Data

- Marketing allocates budget based on campaign attribution data that’s incomplete because web analytics and CRM data were never reconciled

- Supply chain orders inventory based on sales forecasts built from inconsistent regional data

- Executives make strategic bets based on dashboards that look authoritative but are built on unreconciled source data

A significant risk is that teams may not realize the data is incorrect until decisions have already been made. They trust the dashboard, make the decision, and only discover the problem months later, if ever.

The Manual Workaround Tax

When integration fails, people find workarounds. And those workarounds are expensive:

- Spreadsheet hell, analysts maintaining their own Excel files because the warehouse data can’t be trusted

- Email-based data sharing, departments sending CSV exports back and forth because systems aren’t synced

- Copy-paste processes, finance teams manually pulling numbers from three systems to build a single report

- Shadow databases, teams spinning up their own data stores because the “official” data doesn’t meet their needs

Every workaround is a hidden cost, in hours, in errors, and in the organizational dysfunction it normalizes.

Slower Time-to-Insight and Time-to-Market

- Questions that could be answered quickly in a well-integrated environment can take days when analysts must manually reconcile multiple sources.

- Product launches delayed because the data needed to validate market fit is scattered across disconnected systems

- Competitive intelligence stale by the time it’s assembled from multiple unintegrated sources

Mature consulting engagements often quantify operational inefficiencies to build a data-driven business case for remediation.

Trust and Cultural Impact

This is the damage that doesn’t show up in any financial report, but arguably costs the most in the long run.

"I Don't Trust the Data"

Five words that kill a data-driven culture overnight. Once leadership hears conflicting numbers from different teams, and it only takes once or twice, the default response becomes:

“Let’s not rely on the data. What does your gut say?”

At that point, every dollar spent on data infrastructure, tools, and engineering is effectively wasted. The organization has the data. It has the dashboards. Nobody uses them.

Analysts Become Data Janitors

- Data scientists and analysts spend up to 80% of their time cleaning and preparing data (ref: Optimus Ai Labs) instead of doing the analysis they were hired for

- Every hour spent fixing formatting errors or merging inconsistent datasets is an hour not spent building predictive models, discovering insights, or developing solutions that could generate revenue (ref: Optimus Ai Labs)

- Sustained misalignment between role expectations and daily tasks can increase burnout risk and attrition among analytics talent.

- You hired data scientists to find insights. They’re spending their days fixing date formats.

Leadership Abandons Data-Driven Initiatives

The cultural cascade looks like this:

- Integration project delivers data, but it’s inconsistent and unreconciled

- Dashboards show wrong numbers, stakeholders catch discrepancies

- Trust erodes, teams stop using the dashboards

- Workarounds emerge, every department builds its own “source of truth”

- Leadership loses confidence, data initiatives get deprioritized or defunded

- The organization regresses, decisions go back to gut instinct, spreadsheets, and whoever shouts loudest in the meeting

This cultural damage is the hardest to reverse. Rebuilding trust in data after it’s been broken takes years, far longer than the technical fix itself.

The Real Cost

The hidden cost of failed data integration isn’t just money or time. It’s the organizational belief that data can drive better decisions. Once that belief is gone, it doesn’t matter how good your tools are or how much you spend on infrastructure. The organization won’t use it.

This reinforces the case for treating data integration as a strategic initiative, where consulting support may help protect and strengthen a data-driven culture. Getting integration right the first time isn’t just about pipelines. It’s about preserving the organization’s confidence that its data is worth trusting.

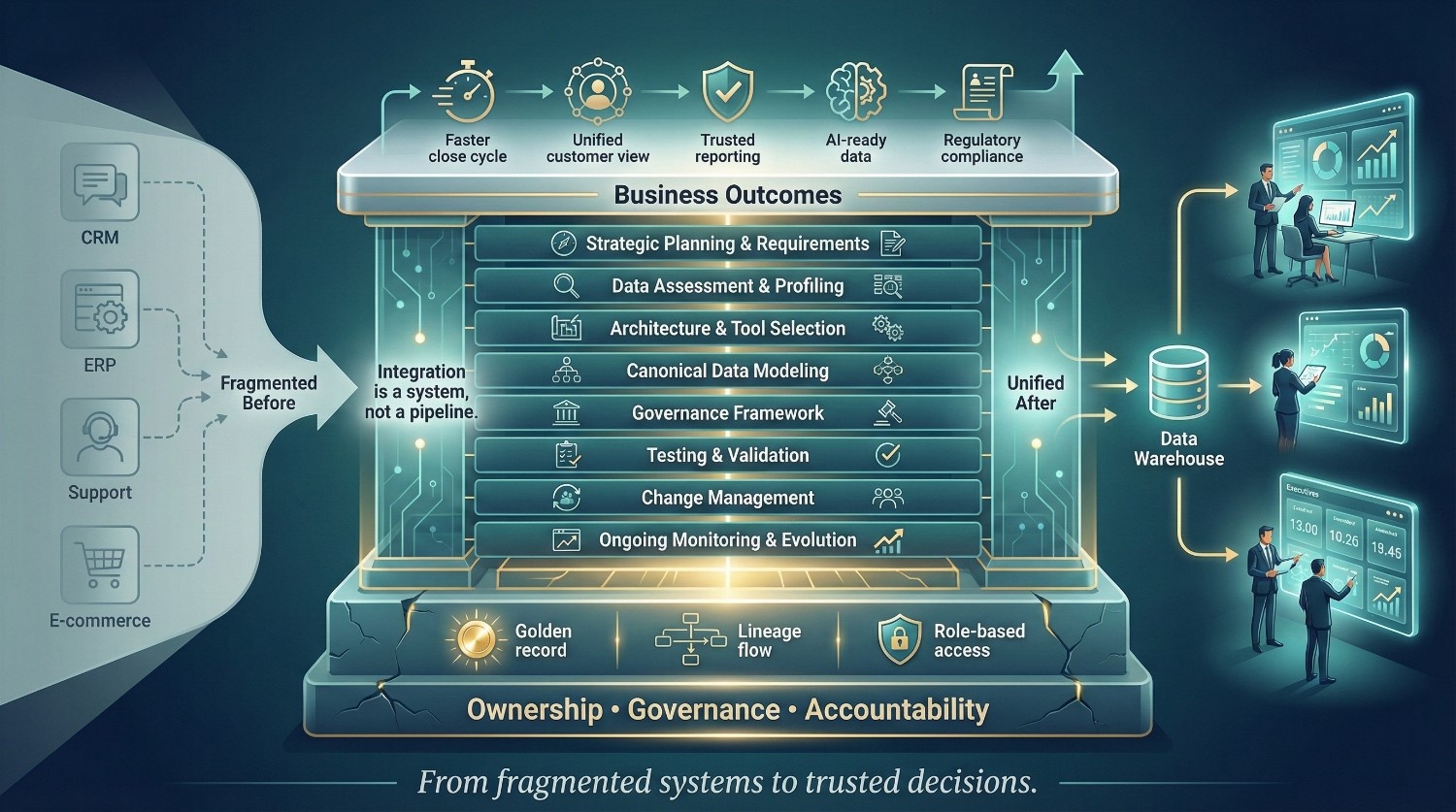

Where Data Integration Consulting Makes the Difference

If the previous sections described how integration projects fail, this one describes how they succeed.

The pattern is consistent: organizations that get integration right don’t just have better tools or smarter engineers. They have a structured approach that addresses every layer, strategy, architecture, data quality, governance, transformation, change management, and ongoing operations.

High-quality data integration consulting engagements are designed to deliver exactly that: not just technical implementation, but the full strategic and operational framework that turns data from a liability into an asset.

Here’s what that looks like in practice.

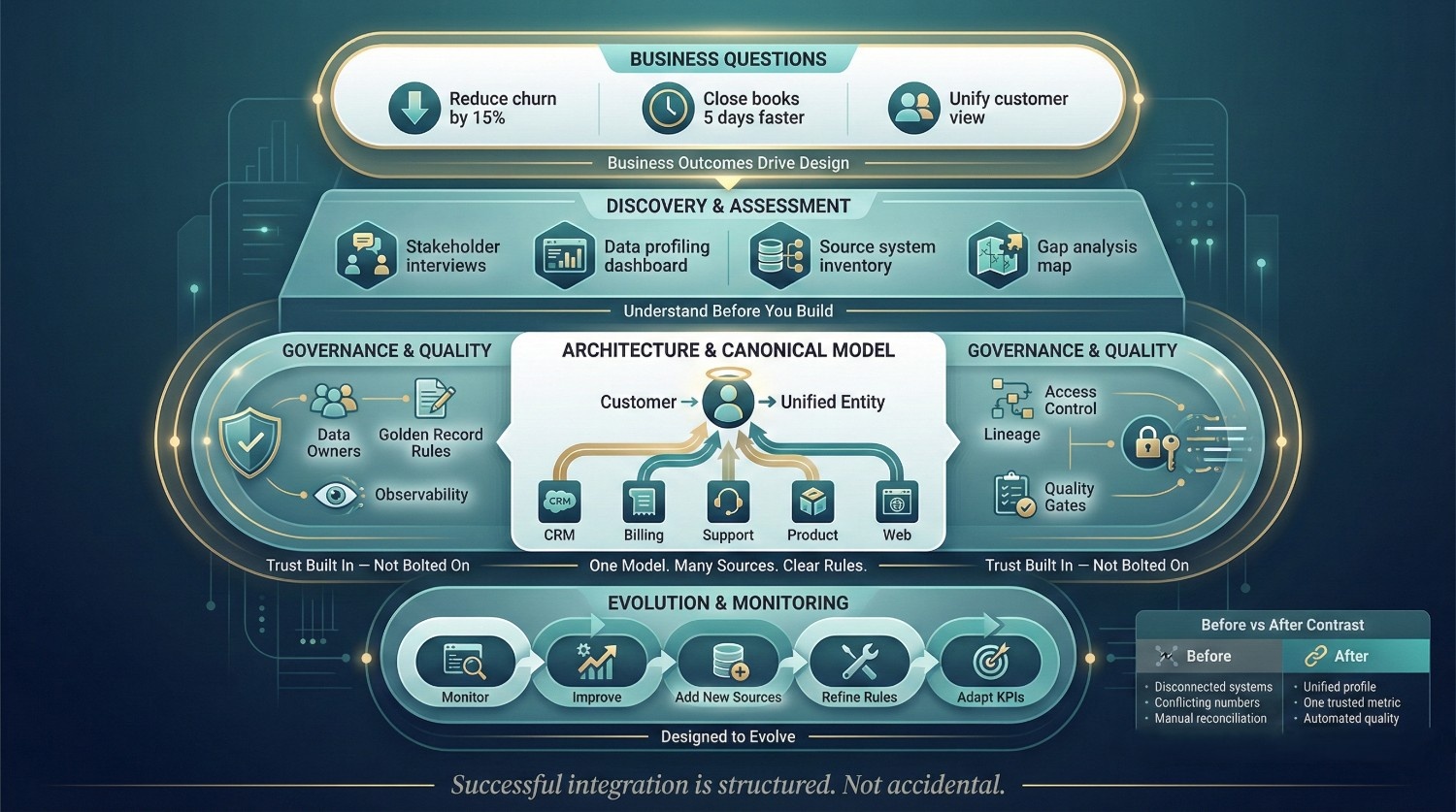

Strategic Planning and Requirements Gathering

Why It Matters

Every failed integration project shares the same origin story: someone started building before defining what success looks like.

What Data Integration Consulting Brings

Business-Outcome Alignment

- Integration goals are tied directly to business outcomes from day one

- Not “integrate Salesforce and NetSuite”, but “create a unified revenue view that enables finance to close the books 5 days faster”

- Every technical decision is evaluated against whether it moves the needle on a measurable outcome

Thorough Discovery

- Stakeholder interviews across every consuming department, not just IT

- Data landscape assessments, what systems exist, what data they hold, how it flows today

- Current-state documentation, mapping existing pipelines, workarounds, and pain points

- Gap analysis, what’s missing between where you are and where you need to be

Defined Success Criteria

- Measurable KPIs for the integration project itself:

- Data freshness targets (e.g., warehouse updated within 15 minutes of source change)

- Quality thresholds (e.g., duplicate rate below 2%)

- Adoption metrics (e.g., 80% of analysts using the integrated dataset within 90 days)

- Without defined criteria, you can’t measure progress, and you can’t prove ROI
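The example targets above can be turned into an automated check. The following is a minimal sketch, assuming the measurements are already collected somewhere; the `IntegrationSnapshot` class and its field names are hypothetical, and the thresholds are simply the illustrative figures from the list (15 minutes, 2%, 80%).

```python
from dataclasses import dataclass
from datetime import datetime, timedelta, timezone


@dataclass
class IntegrationSnapshot:
    """Hypothetical measurements for one reporting period (e.g. day 90)."""
    last_source_change: datetime
    last_warehouse_update: datetime
    total_records: int
    duplicate_records: int
    analysts_total: int
    analysts_using_dataset: int


def evaluate_kpis(s: IntegrationSnapshot) -> dict[str, bool]:
    """Return pass/fail per KPI using the example targets from the text."""
    lag = s.last_warehouse_update - s.last_source_change
    duplicate_rate = s.duplicate_records / s.total_records if s.total_records else 0.0
    adoption = s.analysts_using_dataset / s.analysts_total if s.analysts_total else 0.0
    return {
        # Warehouse updated within 15 minutes of the source change.
        "freshness": timedelta(0) <= lag <= timedelta(minutes=15),
        # Duplicate rate below 2%.
        "duplicate_rate": duplicate_rate < 0.02,
        # At least 80% of analysts using the integrated dataset.
        "adoption": adoption >= 0.80,
    }


now = datetime(2024, 1, 1, 12, 0, tzinfo=timezone.utc)
snapshot = IntegrationSnapshot(now, now + timedelta(minutes=10), 1000, 15, 50, 41)
print(evaluate_kpis(snapshot))
```

Reporting each KPI as a separate pass/fail value, rather than a single score, keeps it obvious which target is being missed.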

Phased Roadmap

- Large, big-bang integration approaches carry higher risk; phased rollouts often improve change management and delivery confidence.

- Start with the highest-value, lowest-complexity data domain

- Deliver value early, build organizational confidence, then expand

- Each phase has its own scope, timeline, deliverables, and success criteria

Data Assessment and Profiling

Why It Matters

You can’t integrate what you don’t understand. And you can’t build a quality target on a foundation you haven’t measured.

What Data Integration Consulting Brings

Source System Audits

- Profile every source system for completeness, accuracy, consistency, and freshness

- Identify fields with high null rates, inconsistent formats, or questionable values

- Flag data domains that pose the highest risk to integration success
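As an illustration of what field-level profiling can look like, here is a small sketch in plain Python. It assumes source records have already been extracted into a list of dictionaries; the `profile_field` function and the sample data are hypothetical, and a real audit would typically cover many more dimensions (format validity, freshness, value ranges).

```python
from collections import Counter


def profile_field(records: list[dict], field: str) -> dict:
    """Compute simple completeness and uniqueness figures for one field."""
    total = len(records)
    values = [r.get(field) for r in records]
    # Treat None and empty or whitespace-only strings as missing.
    present = [v for v in values if v is not None and str(v).strip() != ""]
    counts = Counter(present)
    # Number of non-missing values that share their value with another record.
    duplicated = sum(c for c in counts.values() if c > 1)
    return {
        "null_rate": (total - len(present)) / total if total else 0.0,
        "distinct": len(counts),
        "duplicate_rate": duplicated / len(present) if present else 0.0,
    }


crm_rows = [
    {"customer_id": "A"},
    {"customer_id": "A"},
    {"customer_id": "B"},
    {"customer_id": None},
    {"customer_id": ""},
]
print(profile_field(crm_rows, "customer_id"))
```

Running the same function against every key field in every source system gives a comparable set of numbers for ranking which domains carry the most risk.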

Risk Prioritization

- Not all data problems are equal. Consulting helps you focus on the ones that matter most:

| Risk Level | Example | Priority |
|---|---|---|
| Critical | Customer ID duplicated across 40% of CRM records | Fix before integration begins |
| High | Revenue field calculated differently across three systems | Reconcile during transformation design |
| Medium | Address formatting inconsistent but parseable | Standardize during cleansing phase |
| Low | Legacy fields no longer used by any downstream consumer | Exclude from integration scope |

Quality Baseline

- Establish measurable benchmarks before integration starts

- Track improvement against these benchmarks throughout the project

- Without a baseline, you can’t prove the integration improved anything

This assessment phase is where data integration consulting often delivers its fastest ROI, by identifying problems that would have caused weeks of debugging if discovered later in the project.

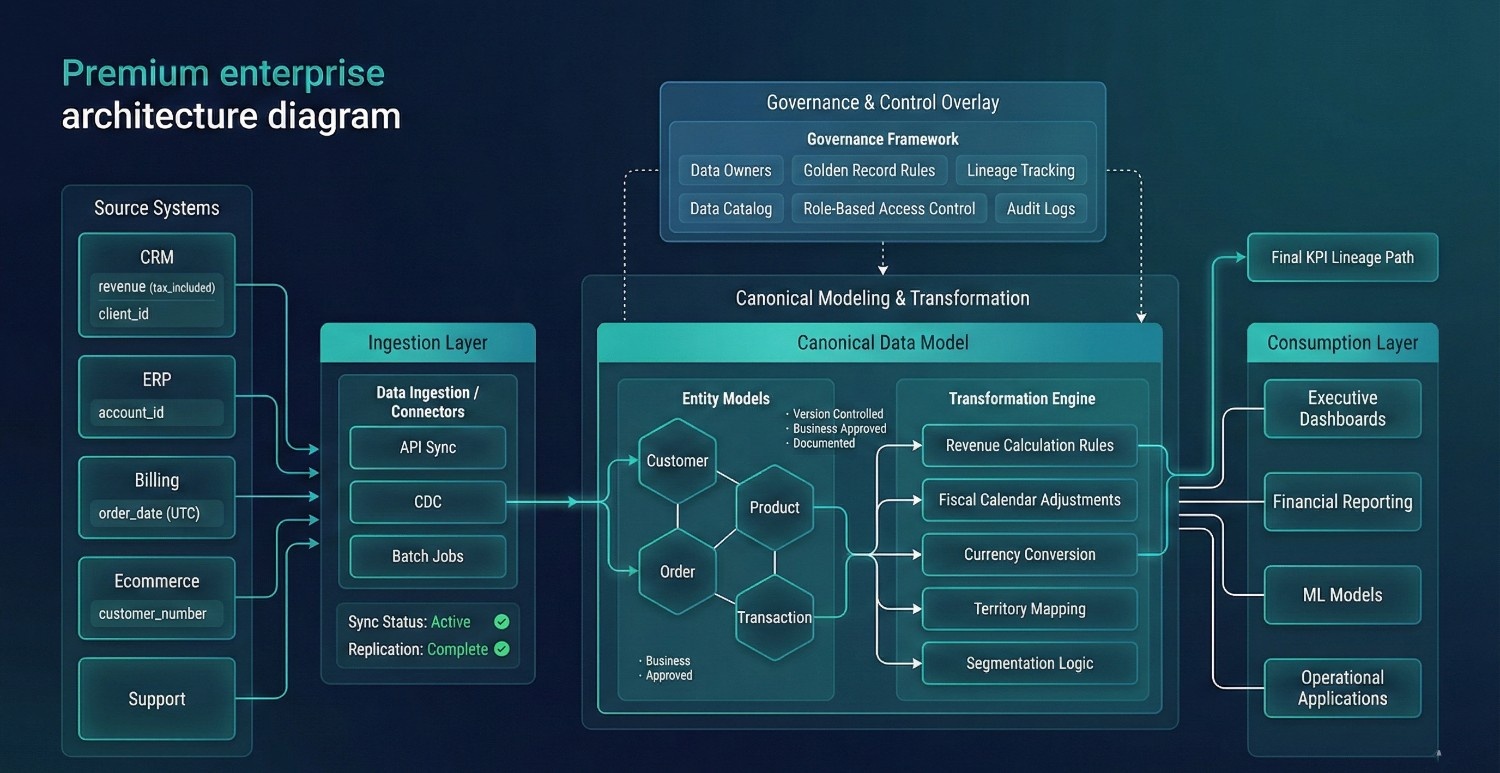

Architecture and Technology Selection

Why It Matters

Architecture decisions made in week one determine whether the project is maintainable in year three. Incorrect architectural decisions can be among the most expensive and disruptive issues to reverse later.

What Data Integration Consulting Brings

Architecture Design Tailored to Your Reality

- Not a one-size-fits-all reference architecture, a design that fits your specific scale, complexity, team maturity, and future direction

- Considerations include:

- Number of source systems and their connectivity options

- Data volume and velocity requirements

- Compliance and governance mandates

- Team capabilities and operational capacity

Evaluating Key Architecture Decisions

| Decision | Options | Depends On |
|---|---|---|
| Build vs. Buy | Custom pipelines vs. managed platforms | Team size, complexity, budget, control requirements |
| Batch vs. Real-Time | Scheduled loads vs. streaming | Latency requirements, use case criticality |
| Centralized vs. Federated | Single warehouse vs. domain-owned data products | Organizational structure, team maturity |
| ETL vs. ELT | Transform before loading vs. transform in the warehouse | Compute costs, transformation complexity, tool preferences |

Tool Selection Based on Requirements

- Not based on vendor demos, blog posts, or what a peer company uses

- Selection criteria tied directly to the architecture and requirements defined earlier

- Proof-of-concept testing with your actual data, not sample datasets

Future-Proofing

- The architecture should support current requirements while remaining adaptable to foreseeable growth and additional use cases.

- Scalability, observability, and maintainability are designed in from the start, not bolted on later

Data Modeling and Transformation Logic

Why It Matters

This is where raw data becomes a business asset. And it is frequently underestimated in scope and effort by teams new to large-scale integration.

What Data Integration Consulting Brings

Canonical Data Model

- A single, unified data model that serves as the common language across all source systems

- Defines how every key entity, customer, product, order, transaction, is represented in the integrated environment

- All source systems map into this model, not into each other
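To make the "map into the model, not into each other" idea concrete, here is a minimal sketch. The `CanonicalCustomer` class, the two mapping functions, and all source field names (`AccountId`, `cust_no`, and so on) are hypothetical stand-ins for whatever the real CRM and billing exports contain.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class CanonicalCustomer:
    """The single agreed representation of a customer."""
    customer_id: str
    email: str
    full_name: str


def from_crm(row: dict) -> CanonicalCustomer:
    # Hypothetical CRM export with separate first and last name fields.
    return CanonicalCustomer(
        customer_id=str(row["AccountId"]),
        email=row["Email"].strip().lower(),
        full_name=f'{row["FirstName"]} {row["LastName"]}'.strip(),
    )


def from_billing(row: dict) -> CanonicalCustomer:
    # Hypothetical billing export with a single name field.
    return CanonicalCustomer(
        customer_id=str(row["cust_no"]),
        email=row["contact_email"].strip().lower(),
        full_name=row["name"].strip(),
    )


crm = from_crm({"AccountId": 42, "Email": " Ada@Example.com ",
                "FirstName": "Ada", "LastName": "Lovelace"})
billing = from_billing({"cust_no": "42", "contact_email": "ada@example.com",
                        "name": "Ada Lovelace "})
print(crm == billing)
```

With N source systems this design needs N mappings into the canonical model, rather than a mapping for every pair of systems.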

Collaborative Transformation Rules

- Transformation logic isn’t defined by engineers in isolation

- Business stakeholders and domain experts contribute to:

- How “revenue” is calculated and which source is authoritative

- How customer segments are defined and applied

- How fiscal periods, territories, and hierarchies are modeled

- Rules are documented, reviewed, and approved, not buried in code

Explicit Business Logic Documentation

- Every transformation rule is documented:

- What it does

- Why it exists

- Who approved it

- What source data it depends on

- This documentation is critical for auditability, compliance, and maintainability

- When someone asks “where does this number come from?”, there’s a clear, traceable answer

Edge Case Handling

- The 80/20 problem: 80% of transformation logic is straightforward. The remaining 20% handles:

- Null values in fields that downstream logic depends on

- Records that exist in one system but not another

- Historical data with different schemas than current data

- Currency conversions, timezone handling, and unit mismatches

- Experienced consulting teams typically encounter recurring edge cases across engagements, accelerating resolution compared to first-time internal efforts.
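Two of the edge cases listed above, timezone handling and null values, can be sketched with the Python standard library alone. This is an illustrative example under stated assumptions: the function names `to_utc` and `to_usd` are hypothetical, timestamps are assumed to arrive as ISO 8601 strings, and the exchange rate is assumed to be supplied by the caller.

```python
from datetime import datetime, timezone
from decimal import Decimal
from typing import Optional


def to_utc(ts: str) -> datetime:
    """Parse an ISO 8601 timestamp with an explicit offset and convert it to UTC."""
    parsed = datetime.fromisoformat(ts)
    if parsed.tzinfo is None:
        # Refuse to guess the timezone of a naive timestamp.
        raise ValueError(f"timestamp has no UTC offset: {ts!r}")
    return parsed.astimezone(timezone.utc)


def to_usd(amount: Optional[str], rate_to_usd: Decimal) -> Optional[Decimal]:
    """Convert an amount to USD; keep missing amounts as None rather than 0."""
    if amount is None or amount.strip() == "":
        return None
    # Decimal avoids binary floating-point error; round to whole cents.
    return (Decimal(amount) * rate_to_usd).quantize(Decimal("0.01"))


print(to_utc("2024-03-01T09:00:00+05:30"))
print(to_usd("100.00", Decimal("1.10")))
print(to_usd(None, Decimal("1.10")))
```

The key design choice is to fail loudly or propagate `None` instead of silently substituting a default, since a missing amount that becomes 0 looks like valid data downstream.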

Governance Framework Implementation

Why It Matters

Without governance, integration creates a bigger mess, not a cleaner one. More data in one place without ownership, lineage, or access controls is a liability, not an asset.

What Data Integration Consulting Brings

Ownership and Stewardship Model

- Every data domain gets a defined owner, someone accountable for quality, accuracy, and availability

- Data stewards are assigned to handle day-to-day quality issues and escalations

- Clear escalation paths when systems disagree or data quality falls below thresholds

Golden Record Rules

- When two systems have conflicting data for the same entity, which one wins?

- Rules are defined by the business, not by engineers guessing:

| Conflict | Resolution Rule | Rationale |
|---|---|---|
| Customer address differs between CRM and billing | Billing system is authoritative | Most recently verified for invoicing |
| Revenue amount differs between sales and finance | Finance system is authoritative | Subject to audit and reconciliation |
| Product name differs between e-commerce and inventory | E-commerce is authoritative | Customer-facing, most frequently updated |
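Once the business has agreed on rules like the examples above, they can be encoded as data rather than scattered through pipeline code. The sketch below is one possible shape, not a prescribed implementation; the `AUTHORITATIVE_SOURCE` mapping, the system names, and the `resolve` function are all hypothetical.

```python
# Field -> authoritative system, mirroring the example rules above.
AUTHORITATIVE_SOURCE = {
    "address": "billing",
    "revenue": "finance",
    "product_name": "ecommerce",
}


def resolve(field: str, candidates: dict[str, object]) -> object:
    """Pick the value from the authoritative system for this field.

    `candidates` maps system name -> value. If the authoritative system has
    no value, raise instead of silently falling back to another system.
    """
    source = AUTHORITATIVE_SOURCE[field]
    value = candidates.get(source)
    if value is None:
        raise LookupError(
            f"no value for {field!r} from authoritative source {source!r}"
        )
    return value


print(resolve("address", {"crm": "1 Old St", "billing": "2 New Ave"}))
```

Keeping the rules in a single mapping means a change of authoritative system is a reviewed one-line edit, and the table in the governance documentation and the code can be checked against each other.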

Lineage and Cataloging

- End-to-end tracking of where every data point originated and how it was transformed

- Data catalog implementation so teams can discover, understand, and trust available datasets

- Critical for compliance (GDPR, HIPAA, SOX) and for internal credibility

Access Controls and Security

- Role-based access, not everyone needs to see everything

- PII and sensitive data classified and protected throughout the integration pipeline

- Audit trails for who accessed what and when

Change Management and Stakeholder Alignment

Why It Matters

Even well-designed integration architectures underperform if adoption and stakeholder alignment are not addressed. And nobody uses an integrated dataset if they weren’t involved in building it, don’t trust it, or don’t understand it.

What Data Integration Consulting Brings

Early Buy-In Across Departments

- Stakeholder engagement starts in discovery, not after go-live

- Every department that consumes or produces data has a voice in the design

- Concerns, requirements, and definitions are surfaced and resolved before they become blockers

Training and Enablement

- Hands-on training for analysts, engineers, and business users on:

- The new data model and where to find what they need

- How to query integrated datasets correctly

- How to report data quality issues and request changes

- Training isn’t a one-time event, it’s ongoing as the integration evolves

Shared Vocabulary

- One of the most underrated deliverables in any data integration consulting engagement:

- Agreed-upon definitions for every key metric and entity

- Published in a business glossary accessible to everyone

- Eliminates the “my lead count doesn’t match your lead count” problem at the root

Expectation Management

- Integration is iterative and benefits from clear expectation setting regarding phased delivery.

- Stakeholders understand the phased roadmap, what’s coming when, and what to expect at each stage

- No surprises. No “I thought this would be done by now.” No trust erosion from misaligned timelines.

Testing, Validation, and Go-Live

Why It Matters

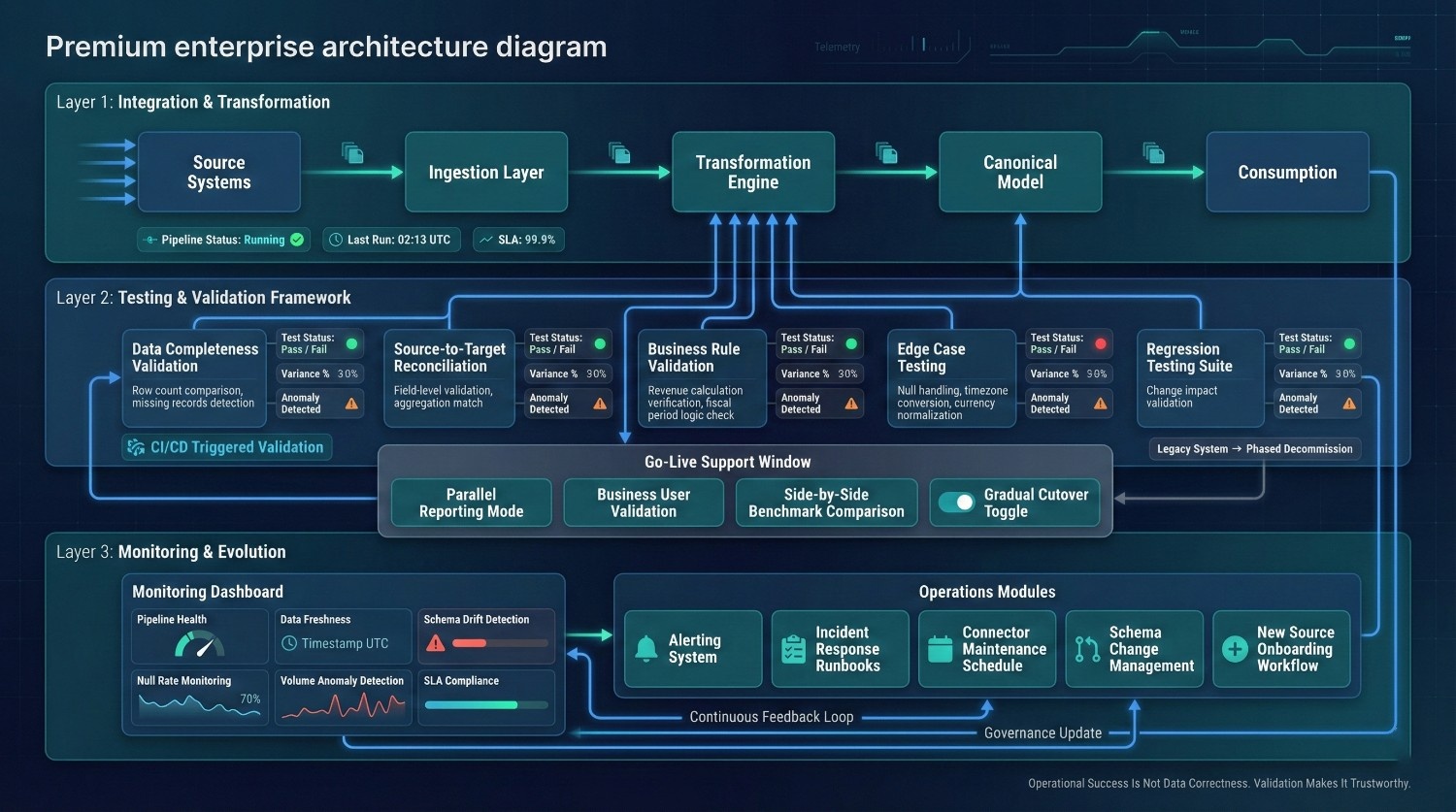

Operational success of a pipeline does not guarantee correctness of the underlying data. Testing is where you prove, with evidence, that the integrated data is accurate, complete, and trustworthy.

What Data Integration Consulting Brings

Comprehensive Test Plans

| Test Type | What It Validates |
|---|---|
| Data completeness | All expected records arrived, no silent drops |
| Data accuracy | Transformed values match expected results based on business rules |
| Data timeliness | Data freshness meets SLA requirements |
| Edge case coverage | Null handling, special characters, timezone conversions, high-volume loads |
| Regression testing | New changes don't break existing logic |

Parallel Testing with Business Users

- Business stakeholders validate integrated data against their own domain knowledge

- Side-by-side comparison with existing reports and known benchmarks

- This is the trust-building step, if finance confirms the revenue numbers match, adoption follows

Automated Reconciliation and Monitoring

- Source-to-target row count validation on every pipeline run

- Automated alerts for anomalies, unexpected volume changes, null rate spikes, schema drift

- Not just “did the pipeline run?” but “is the data still right?”
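The checks described above can be sketched as a single function that runs after each pipeline execution. This is an illustrative example only: `check_run`, its parameters, and the 5-percentage-point threshold for a null rate spike are assumptions, and in practice the inputs would come from queries against the source and target systems.

```python
def check_run(
    source_rows: int,
    target_rows: int,
    expected_columns: set[str],
    actual_columns: set[str],
    null_rate: float,
    baseline_null_rate: float,
    max_null_rate_increase: float = 0.05,
) -> list[str]:
    """Return human-readable issues for one pipeline run (empty list means OK)."""
    issues = []
    # Source-to-target row count validation.
    if source_rows != target_rows:
        issues.append(
            f"row count mismatch: source={source_rows}, target={target_rows}"
        )
    # Schema drift: columns that disappeared or appeared unexpectedly.
    missing = expected_columns - actual_columns
    added = actual_columns - expected_columns
    if missing or added:
        issues.append(
            f"schema drift: missing={sorted(missing)}, unexpected={sorted(added)}"
        )
    # Null rate spike relative to the established baseline.
    if null_rate - baseline_null_rate > max_null_rate_increase:
        issues.append(
            f"null rate spike: {null_rate:.1%} vs baseline {baseline_null_rate:.1%}"
        )
    return issues


for issue in check_run(1000, 990, {"id", "email"}, {"id", "mail"}, 0.20, 0.01):
    print(issue)
```

A pipeline can report success while every one of these checks fails, which is why they are evaluated on the data itself rather than on the job status.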

Go-Live Support

- Consultants on hand during the transition period to troubleshoot, adjust, and stabilize

- Rapid response for issues that surface when real users hit real data at real scale

- Gradual cutover from old processes to new, not a risky overnight switch

Ongoing Support and Evolution

Why It Matters

Integration doesn’t end at go-live. It evolves, or it decays.

What Data Integration Consulting Brings

Monitoring and Alerting

- Dashboards tracking pipeline health, data quality scores, freshness, and error rates

- Automated alerts for failures, anomalies, and SLA breaches

- The team knows about problems before stakeholders do, not after

Maintenance Planning

- Source systems change. APIs deprecate. Schemas evolve. New fields appear.

- A maintenance plan ensures these changes are handled proactively, not reactively after pipelines break

- Scheduled reviews of all connectors, transformation logic, and governance rules

Runbooks for Common Scenarios

- What to do when a connector fails

- How to handle a schema change in a source system

- How to onboard a new data source

- How to respond to a data quality alert

- Documented, tested, and accessible, so the team isn’t debugging from scratch every time

Planning for What’s Next

- New data sources, the company acquires a business, launches a product, adopts a new tool

- New requirements, a new KPI, a new compliance mandate, a new analytics use case

- New regulations, GDPR evolves, new data residency requirements emerge

- Effective consulting engagements establish monitoring, governance, and operational processes that enable sustainable evolution beyond initial implementation.

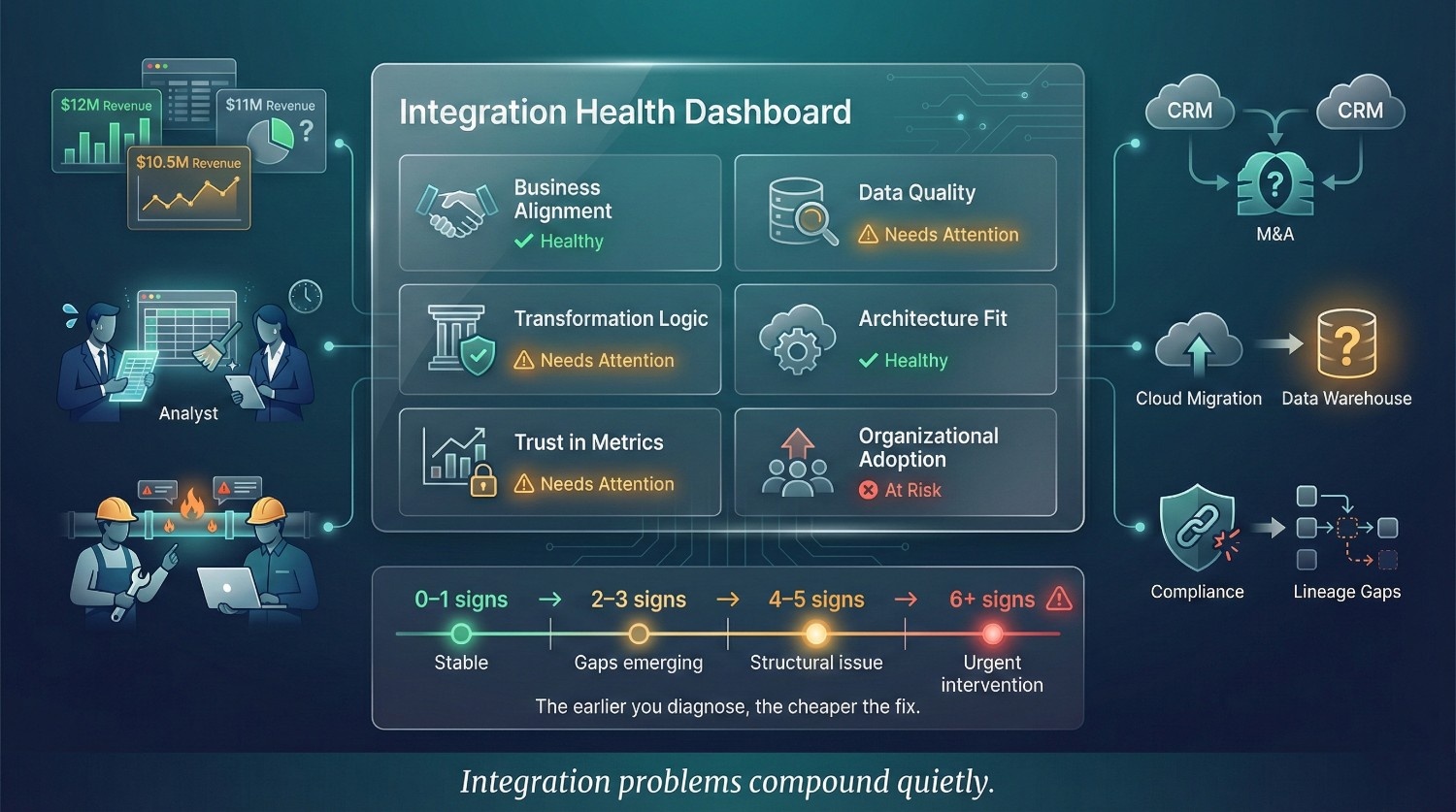

Signs Your Organization Needs Data Integration Consulting

Not sure whether your data challenges warrant outside help? Here’s an honest checklist. If even two or three of these sound familiar, you are likely past the point where tooling alone can resolve the underlying integration issues, and into territory where data integration consulting delivers the highest ROI.

You’ve Invested in Connectors and Tools, But Aren’t Seeing Business Value

The symptoms:

- Fivetran, Airbyte, or another connector platform is running. Data is flowing. Green lights everywhere.

- But nobody is using the data for actual decisions

- The dashboards exist but leadership doesn’t reference them

- The warehouse has hundreds of tables that nobody queries

What this means: You solved the movement problem. The integration problem, transformation, quality, modeling, governance, was never addressed.

Different Departments Report Different Numbers for the Same Metrics

The symptoms:

- Finance says Q3 revenue was $22M. Sales says $24.5M. Marketing has a third number.

- Customer count differs depending on which team you ask

- Churn rate, conversion rate, pipeline value, every metric has multiple “versions of the truth”

What this means: Data from multiple systems was never reconciled against shared definitions. There’s no canonical model, no golden record logic, and no agreed-upon business rules.

Your Data Team Spends More Time Fixing Pipelines Than Building Insights

The symptoms:

- Engineers are in firefighting mode, debugging failures, patching pipelines, manually reconciling data

- Analysts spend days cleaning and preparing data before they can analyze anything

- The backlog of “data fixes” keeps growing. The backlog of “new capabilities” never shrinks.

- Your most expensive technical talent is doing janitorial work

What this means: The integration architecture may not have been designed with sufficient maintainability, observability, or resilience. The team is paying the tax of an under-engineered foundation, every single day.

A Recent Merger or Acquisition Has Left You with Duplicate Systems

The symptoms:

- Two (or more) CRMs, ERPs, HR systems, and finance platforms, all with overlapping data

- “Customer” is defined differently in each system. So is “employee,” “product,” and “revenue.”

- Nobody has reconciled which system is authoritative for what

- Both organizations are still running on their pre-merger processes

What this means: Post-acquisition data consolidation is one of the most complex integration challenges that exist. It requires entity resolution, schema mapping, governance alignment, and organizational change management, all at once. This is a common scenario where structured data integration consulting can add significant value.

You're Planning a Cloud Migration and Need to Rethink Integration

The symptoms:

- You’re moving from on-prem to Snowflake, BigQuery, Databricks, or another cloud platform

- The migration plan covers moving the data, but not how it will be modeled, governed, or consumed in the new environment

- There’s an assumption that “once we’re in the cloud, everything will work better”

What this means: Cloud migration without an integration strategy can relocate existing problems into a new environment without resolving root causes. The move is the easy part. Designing the integration architecture for the new environment is where the value lives.

Compliance or Regulatory Pressure Requires Consolidated Data

The symptoms:

- Auditors are asking for data lineage and you can’t provide it

- GDPR, HIPAA, SOX, or CCPA compliance requires you to know exactly where sensitive data lives, how it flows, and who has access

- Regulatory reports require reconciled data from multiple systems, and producing them is a manual, error-prone scramble every time

What this means: Compliance isn’t a data engineering problem, it’s a governance and integration problem. You need consolidated, auditable data with clear lineage, access controls, and documented business rules.

You've Attempted Integration Internally and It Stalled or Failed

The symptoms:

- The project started strong, connectors configured, initial data flowing

- Then it hit the hard parts: transformation logic, schema conflicts, data quality, stakeholder disagreements

- Momentum slowed. Scope expanded. The budget ran over. The project quietly stalled.

- Nobody officially killed it, it just stopped making progress

What this means: Internal teams often have the technical skills but lack the methodology, cross-functional facilitation, and battle-tested frameworks that come from doing integration across dozens of organizations. Data integration consulting doesn’t replace your team, it accelerates them past the parts where projects typically stall.

Leadership Is Losing Trust in the Organization's Data

The symptoms:

- Executives have stopped referencing dashboards in decision meetings

- The phrase “I don’t trust the data” has become common

- Strategic decisions are made on gut instinct and anecdotal evidence

- Data team morale is dropping because their work isn’t being used

What this means: This is one of the most serious warning signs, because once trust is gone, it takes years to rebuild. Every additional month of operating with untrustworthy data deepens the cultural damage and makes recovery harder.

The Honest Assessment

| Number of Signs That Apply | What It Suggests |
|---|---|
| 0–1 | You're likely in good shape. Keep monitoring. |
| 2–3 | You have integration gaps that are costing you, start scoping a strategy |
| 4–5 | You need data integration consulting, the problem is too layered for tooling or internal effort alone |
| 6+ | This is urgent. The longer you wait, the more expensive the fix becomes, and the more trust you lose |

What to Do Next

If several of these signs resonated, the worst thing you can do is buy more tools without addressing the structural integration issues; that rarely resolves the root causes. The second worst thing is to keep doing nothing while the costs compound silently.

The best next step is an honest assessment, either internal or with a data integration consulting partner, that answers three questions:

- Where are we today? What’s working, what’s broken, and what’s the real state of our data?

- Where do we need to be? What business outcomes should our data support?

- What’s the fastest path between the two? What’s the right architecture, approach, and sequence?

Those three questions are the starting point of every successful integration initiative. And they’re the starting point of every data integration consulting engagement worth its fee.

How to Choose the Right Data Integration Consulting Partner

Data integration consulting capabilities vary significantly across providers. The difference between a great consulting partner and a mediocre one isn’t just technical skill, it’s methodology, business acumen, and the ability to navigate the organizational complexity that kills most integration projects.

Here’s how to evaluate, filter, and select the right partner, and how to spot the ones you should walk away from.

What to Look For

Deep Experience Across Industries and Integration Patterns

- They’ve done this across multiple industries, retail, healthcare, finance, SaaS, manufacturing, not just one vertical

- They’ve worked with different integration patterns: warehouse consolidation, real-time streaming, data virtualization, post-acquisition unification, API-led integration

- They demonstrate tool-agnostic evaluation grounded in business and architectural requirements.

- They’ve seen the edge cases, the political landmines, and the unexpected complications, because they’ve encountered them dozens of times before

A Methodology That Starts with Business Outcomes

- Their first question isn’t “what tools are you using?”, it’s “what business problem are you trying to solve?”

- They have a structured discovery process, stakeholder interviews, data landscape assessment, current-state mapping

- They define success criteria and KPIs before recommending architecture or tools

- If they jump straight to technology without understanding your business, they’re implementers, not consultants

Proven Ability to Handle Technical and Organizational Complexity

The best data integration consulting partners operate across both dimensions:

| Technical Complexity | Organizational Complexity |
|---|---|
| Schema mapping across dozens of sources | Stakeholder alignment across departments |
| Complex transformation logic with business rules | Change management and adoption planning |
| Architecture design for scale and resilience | Governance framework and ownership models |
| Data quality assessment and remediation | Training and knowledge transfer |

If they’re strong technically but ignore the people side, or vice versa, the project will stall at the layer they can’t address.

References and Case Studies with Measurable Results

- Not just “we integrated their systems”, but “we reduced reporting time by 70%, eliminated data discrepancies across three departments, and delivered a unified customer view that improved retention by 12%”

- Ask for references you can actually speak with, not just logos on a slide deck

- Look for case studies that match your situation: similar industry, similar complexity, similar scale

Flexibility in Engagement Model

Different organizations need different things. The right partner offers multiple engagement models:

| Model | When It Fits |
|---|---|
| Advisory / Strategy | You have a capable team but need architecture guidance and a strategic roadmap |
| Full Implementation | You need end-to-end delivery, from discovery through go-live and beyond |
| Team Augmentation | You have the strategy but need specialized skills to execute, data modeling, governance design, transformation engineering |
| Assessment Only | You're not sure what you need yet, start with a diagnostic to understand the current state and define next steps |

A good partner meets you where you are, not where their sales model needs you to be.

Red Flags to Watch For

Leading with a Specific Tool Before Understanding Your Needs

“We recommend Informatica for this.” And you haven’t even described your problem yet.

If the consultant’s first instinct is to recommend a platform, especially one they’re certified in or have a resale agreement with, they may be prioritizing product alignment over objective solution design.

The right partner defines the problem before prescribing the solution.

No Emphasis on Data Quality, Governance, or Change Management

If their proposal focuses entirely on:

- Connector setup

- Pipeline development

- Dashboard delivery

But doesn’t mention:

- Data profiling and quality assessment

- Governance framework

- Stakeholder alignment

- Change management

They’re building the technical layer without the strategic foundation. You may receive technical deliverables without achieving measurable business outcomes.

Promising a Quick Fix or One-Size-Fits-All Solution

Warning phrases to listen for:

- “We can have this done in 6 weeks”, for a complex, multi-source integration? No.

- “Our platform handles all of this automatically”, it doesn’t. No platform does.

- “We’ve done this exact same thing for another client”, every integration is unique. Copy-paste approaches fail.

- “You won’t need to involve business stakeholders much”, this guarantees failure

Data integration consulting that promises simplicity is usually delivering shortcuts that create long-term debt.

No Plan for Ongoing Support or Knowledge Transfer

If the engagement ends at go-live with no transition plan, you’ll be:

- Dependent on the consultant for every change and fix

- Unable to onboard new data sources without bringing them back

- Left with undocumented transformation logic that nobody internal understands

A strong partner emphasizes capability building and knowledge transfer rather than long-term dependency.

Can't Explain Their Approach in Business Terms

If every answer is deeply technical with no connection to business outcomes:

- “We’ll set up a Kafka cluster with Avro serialization and Schema Registry…”

- But can’t answer: “How will this help us close the books faster?”

Technical depth is essential. But if a consultant can’t translate their approach into language that a CFO, CMO, or CEO can understand, they won’t be able to align stakeholders, and the project will lose executive support.

Questions to Ask Prospective Partners

Before signing anything, have a real conversation. These questions will tell you everything you need to know about whether a data integration consulting partner is the right fit.

On Discovery and Planning

“How do you approach requirements gathering and stakeholder alignment?”

What you want to hear:

- Structured discovery process, stakeholder interviews, data landscape mapping, current-state assessment

- Business outcome definition before technical design

- Cross-functional engagement, not just IT, but finance, marketing, ops, compliance

Red flag answer:

- “We’ll review your systems and put together an architecture.”, no clear mention of stakeholder engagement or defined business objectives.

On Data Quality

“What’s your methodology for data quality assessment?”

What you want to hear:

- Source-level profiling before integration design begins

- Quantitative quality metrics, null rates, duplicate percentages, format consistency scores

- Risk prioritization framework, which quality issues are critical vs. cosmetic

- Baseline establishment so improvement can be measured

Red flag answer:

- “We handle data quality during the transformation phase.”, Ideally, data quality assessment should begin before architecture and transformation design.

On Conflict Resolution

“How do you handle schema conflicts and business rule disagreements?”

What you want to hear:

- Collaborative workshops with business and technical stakeholders

- Documented decisions with clear rationale and approval

- Golden record rules defined by the business, not assumed by engineers

- A process for handling exceptions and edge cases

Red flag answer:

- “Our engineers will figure out the best mapping.” Engineers shouldn’t be making business logic decisions in isolation.

On Testing and Validation

“What does your testing and validation process look like?”

What you want to hear:

- Multi-layer testing, technical, data accuracy, and business validation

- Source-to-target reconciliation on every pipeline

- Edge case coverage, nulls, special characters, timezones, high-volume loads

- Business user validation before go-live, not after
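As a rough illustration of source-to-target reconciliation, the sketch below compares row counts, key coverage, and a summed amount between two in-memory datasets. The function name `reconcile`, the field names, and the sample invoices are assumptions made for the example; a real check would query the source system and the warehouse.

```python
def reconcile(source_rows, target_rows, key, amount_field):
    """Compare row counts, key coverage, and a summed amount between source and target."""
    source_keys = {r[key] for r in source_rows}
    target_keys = {r[key] for r in target_rows}
    source_total = sum(r[amount_field] for r in source_rows)
    target_total = sum(r[amount_field] for r in target_rows)
    return {
        "row_count_match": len(source_rows) == len(target_rows),
        "missing_in_target": sorted(source_keys - target_keys),
        "unexpected_in_target": sorted(target_keys - source_keys),
        "amount_difference": round(source_total - target_total, 2),
    }


# Hypothetical data: one invoice never reached the target.
source = [
    {"invoice_id": "INV-1", "amount": 100.00},
    {"invoice_id": "INV-2", "amount": 250.50},
    {"invoice_id": "INV-3", "amount": 75.25},
]
target = [
    {"invoice_id": "INV-1", "amount": 100.00},
    {"invoice_id": "INV-2", "amount": 250.50},
]

report = reconcile(source, target, "invoice_id", "amount")
```

A pipeline that "ran successfully" would still produce this report with a missing invoice and a non-zero amount difference, which is the gap between run status and data validation.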

Red flag answer:

- “We test that the pipelines run successfully.” That reflects operational monitoring rather than comprehensive data validation.

On Knowledge Transfer and Independence

“How do you ensure knowledge transfer so we’re not dependent on you long-term?”

What you want to hear:

- Documentation of all architecture decisions, transformation rules, and governance frameworks

- Runbooks for common operational scenarios

- Training sessions for internal teams, engineers, analysts, and data stewards

- Gradual handoff with decreasing consulting involvement over time

- The explicit goal: your team runs this independently within a defined timeframe

Red flag answer:

- “We offer ongoing managed services.” That’s fine as an option, but if it’s the only answer, they’re building dependency, not capability.

The Summary

| What Great Partners Do | What Bad Partners Do |
|---|---|
| Start with business outcomes | Start with tool recommendations |
| Assess data quality before building | Skip profiling and hope for the best |
| Involve stakeholders across departments | Work only with IT |
| Design for maintainability and evolution | Deliver and disappear |
| Transfer knowledge to your team | Create ongoing dependency |
| Explain their approach in business terms | Hide behind technical jargon |
| Phase delivery and manage expectations | Promise fast, simple results |

The right data integration consulting partner doesn’t just build your integration, they build your organization’s capability to sustain and evolve it. That’s the difference between a project that delivers value once and a foundation that delivers value for years.

A Better Approach: What Successful Data Integration Looks Like

We’ve spent most of this post talking about what goes wrong. This section is about what goes right, and what it looks like when an organization approaches data integration with the right strategy, structure, and discipline.

The principles below reflect recurring patterns observed in successful integration initiatives across industries, and the framework that experienced data integration consulting teams bring to every engagement.

Start with the Business Question, Not the Technology

The Wrong Starting Point

“We need to set up Fivetran and get our data into Snowflake.”

The Right Starting Point

“We need to understand why customer churn increased 18% last quarter, and we can’t answer that because our CRM, support, billing, and product usage data are all disconnected.”

The business question defines everything:

- Which data sources matter

- What level of quality and timeliness is required

- Who the consumers are and what they need

- How success will be measured

Technology is the how. The business question is the why. Start with the business context and measurable outcomes.

Invest in Discovery and Assessment Before Building

Many successful integration initiatives allocate a meaningful portion of early effort to discovery and assessment before implementation begins.

What this phase delivers:

- A complete inventory of source systems, data domains, and existing data flows

- Data quality profiles for every critical source

- A stakeholder map, who produces data, who consumes it, who governs it

- A gap analysis between current state and desired outcomes

- A prioritized, phased roadmap based on business value and technical feasibility

What this prevents:

- Building on top of data you don’t understand

- Discovering quality problems mid-project

- Scope creep from undefined requirements

- Political landmines from stakeholders who were never consulted

The organizations that skip this phase don’t save time. They spend it later, debugging, reworking, and rebuilding.

Treat Data Quality as a Prerequisite, Not an Afterthought

The Pattern in Failed Projects

- Data is moved to the warehouse

- Transformation logic is built

- Dashboards are created

- Stakeholders start using the dashboards

- Someone finds wrong numbers

- Root cause: source data quality issues that nobody checked

- Weeks of debugging and rework begin

The Pattern in Successful Projects

- Source data is profiled and assessed

- Quality issues are identified and prioritized

- Critical issues are fixed or accounted for before integration begins

- Quality gates are built into the pipeline, not bolted on after

- Data moves through the pipeline with quality validated at every stage

- Stakeholders receive data they can trust from day one

A major differentiator is sequencing and prioritization, particularly placing data quality and stakeholder alignment early in the lifecycle. Successful projects put quality first.
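One way to picture a quality gate built into the pipeline is a check that sits between stages and refuses to pass a bad batch downstream. The sketch below is a simplified assumption of how that might look; `QualityGateError`, `quality_gate`, `run_stage`, and the field names are hypothetical, not taken from any specific tool.

```python
class QualityGateError(Exception):
    """Raised when a batch fails a quality threshold and should not move downstream."""


def quality_gate(rows, required_fields, max_null_rate):
    """Return the batch unchanged if it passes; raise otherwise."""
    if not rows:
        raise QualityGateError("empty batch")
    for field in required_fields:
        missing = sum(1 for r in rows if r.get(field) in (None, ""))
        rate = missing / len(rows)
        if rate > max_null_rate:
            raise QualityGateError(
                f"{field}: null rate {rate:.0%} exceeds limit {max_null_rate:.0%}"
            )
    return rows


def run_stage(rows):
    """A pipeline stage that only transforms batches that passed the gate."""
    # max_null_rate=0.0 means no missing values are tolerated in these fields.
    checked = quality_gate(rows, required_fields=["account_id", "plan"], max_null_rate=0.0)
    return [{**r, "plan": r["plan"].lower()} for r in checked]
```

Because the gate raises instead of logging and continuing, a failed batch stops at the stage where the problem appears rather than surfacing weeks later as a wrong number on a dashboard.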

Design for Evolution, Not Just the Current State

Business requirements and data landscapes typically evolve over time, requiring adaptable design.

Design decisions that enable evolution:

| Design Choice | Why It Matters |
|---|---|
| Modular pipeline architecture | New sources can be added without rewriting existing pipelines |
| Configuration-driven transformation | Business rule changes don't require code deployments |
| Schema evolution support | Source schema changes are handled gracefully, not with pipeline crashes |
| Decoupled ingestion and transformation | Movement and integration layers can evolve independently |
| Documented, version-controlled logic | Anyone on the team can understand and modify transformation rules, not just the person who wrote them |
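Two of these design choices, configuration-driven transformation and schema evolution support, can be illustrated together in a few lines. In this sketch the mapping lives in a plain dictionary; in practice it might be a version-controlled YAML or JSON file. The source names (`crm`, `billing`) and field names are hypothetical.

```python
# Hypothetical mapping config: source field name -> shared model field name.
FIELD_MAP = {
    "crm": {"AccountId": "customer_id", "Name": "customer_name"},
    "billing": {"customer": "customer_id", "name": "customer_name"},
}


def normalize(record, source, field_map=FIELD_MAP):
    """Rename source fields to the shared model without failing on unknown fields."""
    mapping = field_map[source]
    known = {target: record[src] for src, target in mapping.items() if src in record}
    # Fields the config does not know about (for example, a newly added source
    # column) are kept under "_unmapped" so a schema change does not crash the run.
    unmapped = {k: v for k, v in record.items() if k not in mapping}
    return {**known, "_source": source, "_unmapped": unmapped}


row = normalize({"AccountId": "001", "Name": "Acme", "Region": "EMEA"}, "crm")
```

Adding a new source or renaming a field then becomes a change to `FIELD_MAP` rather than to the transformation code, and an unexpected column such as `Region` is carried along for review instead of breaking the pipeline.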

The goal isn’t to predict the future. It’s to build a foundation that adapts to it without requiring a rebuild.

Involve Business Stakeholders Throughout

Not at the kickoff. Not at go-live. Throughout.

Where Stakeholders Add Irreplaceable Value

| Phase | Stakeholder Contribution |
|---|---|
| Discovery | Define business outcomes, priorities, and success criteria |
| Data Mapping | Clarify what fields mean in their domain, nuances no engineer can guess |
| Transformation Design | Approve business rules, metric definitions, and golden record logic |
| Testing | Validate outputs against their domain knowledge and expectations |
| Go-Live | Confirm the integrated data supports their actual workflows |
| Ongoing | Report quality issues, request new data sources, evolve requirements |

When stakeholders are involved throughout, they have ownership of the integrated data, not just access to it. That ownership drives adoption, trust, and long-term success.

Build In Governance, Lineage, and Observability from Day One

Governance, lineage, and observability are most effective when embedded early rather than retrofitted later.

Governance from day one means:

- Data owners and stewards are defined before pipelines are built

- Access controls are designed alongside the data model, not retrofitted

- Golden record rules exist before the first transformation runs

Lineage from day one means:

- Every pipeline tracks source, transformation, and destination

- An auditor or analyst can trace any number back to its origin

- Compliance requirements are met by design, not by scramble
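A minimal sketch of what "every pipeline tracks source, transformation, and destination" could mean in code: each step appends a record to a log, and a trace function walks that log backwards from a reported table to its origins. The names `LineageRecord`, `record_lineage`, `trace`, and the table names are all hypothetical; dedicated lineage tools do considerably more than this.

```python
from dataclasses import dataclass
from datetime import datetime, timezone


@dataclass(frozen=True)
class LineageRecord:
    source: str
    transformation: str
    destination: str
    recorded_at: str


LINEAGE_LOG = []


def record_lineage(source, transformation, destination):
    """Append one source -> transformation -> destination step to the log."""
    entry = LineageRecord(
        source, transformation, destination, datetime.now(timezone.utc).isoformat()
    )
    LINEAGE_LOG.append(entry)
    return entry


def trace(destination, log=None):
    """Walk backwards from a destination through every recorded upstream step."""
    log = LINEAGE_LOG if log is None else log
    steps, frontier, seen = [], [destination], set()
    while frontier:
        current = frontier.pop()
        if current in seen:
            continue
        seen.add(current)
        for entry in log:
            if entry.destination == current:
                steps.append(entry)
                frontier.append(entry.source)
    return steps


# Hypothetical two-step pipeline.
record_lineage("crm.accounts", "dedupe_accounts", "staging.accounts")
record_lineage("staging.accounts", "build_customer_dim", "analytics.dim_customer")

steps = trace("analytics.dim_customer")
upstream = [s.source for s in steps]
```

With records like these in place, answering "where did this number come from?" is a lookup rather than an investigation.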

Observability from day one means:

- Pipeline health, data freshness, and quality scores are monitored in real time

- Anomalies trigger alerts, not silent failures that go unnoticed for weeks

- The team knows the state of the integration at all times, not just when something breaks
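Data freshness is one of the easier observability signals to sketch. The example below flags tables whose most recent load is older than an agreed limit; the function name `freshness_alerts`, the table names, and the thresholds are assumptions for illustration, and a real setup would read load timestamps from pipeline metadata and route alerts to the team's paging or chat tool.

```python
from datetime import datetime, timedelta, timezone


def freshness_alerts(last_loaded, max_age, now=None):
    """Return the tables whose latest load is older than their allowed age."""
    now = now or datetime.now(timezone.utc)
    return sorted(
        table
        for table, loaded_at in last_loaded.items()
        if now - loaded_at > max_age[table]
    )


# Hypothetical snapshot: "tickets" has not loaded for five hours.
now = datetime(2024, 1, 2, 12, 0, tzinfo=timezone.utc)
last_loaded = {
    "orders": now - timedelta(minutes=30),
    "tickets": now - timedelta(hours=5),
}
max_age = {
    "orders": timedelta(hours=1),
    "tickets": timedelta(hours=2),
}

stale = freshness_alerts(last_loaded, max_age, now=now)
```

Here the pipeline for `tickets` may not have failed at all; it simply stopped delivering, which is exactly the kind of silent problem that otherwise goes unnoticed.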

Plan for Ongoing Maintenance and Iteration

Integration is a living system. Treating it as a project with a fixed end date guarantees decay.

What ongoing maintenance includes:

- Monitoring, automated alerting for pipeline failures, data quality degradation, and SLA breaches

- Source system change management, handling API updates, schema changes, and new fields without pipeline breakage

- Business rule evolution, updating transformation logic as definitions, KPIs, and strategies change

- New source onboarding, a documented, repeatable process for adding data sources without disrupting existing flows

- Regular governance reviews, quarterly check-ins on data quality, ownership accountability, and policy relevance

The best integration architectures aren’t the ones that work perfectly on launch day. They’re the ones that still work perfectly 18 months later, because maintenance and evolution were planned from the start.

Leverage Data Integration Consulting to Accelerate and De-Risk

Internal teams are smart and capable. But data integration consulting brings something that’s hard to build internally:

- Pattern recognition, they’ve seen your exact problem at 20 other organizations

- Battle-tested methodology, a structured approach that prevents the common failure modes

- Cross-functional facilitation, the ability to align business and technical stakeholders who speak different languages

- Accelerated delivery, frameworks, templates, and experience that compress timelines without cutting corners

- Risk mitigation, knowing where projects typically fail and proactively preventing it

Consulting isn’t a sign that your team isn’t good enough. It’s a recognition that integration is one of the hardest problems in enterprise data, and that the organizations who succeed are the ones who bring the right experience to the table.

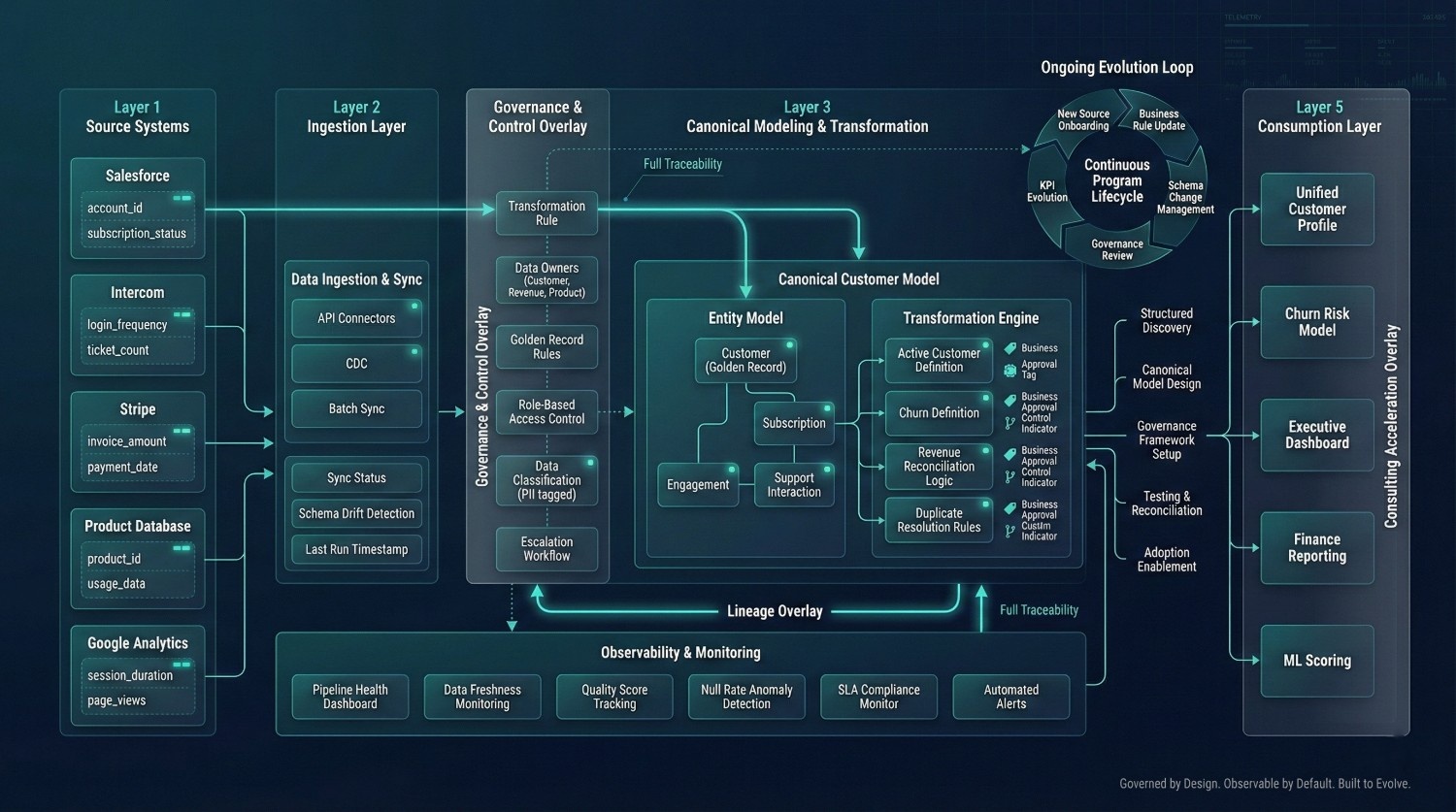

What This Looks Like in Practice: A Story

The Scenario

A mid-market B2B SaaS company (800 employees, 15,000 customers) wanted to reduce churn. Their data lived in five disconnected systems:

| System | Data |
|---|---|
| Salesforce | Deals, accounts, contacts |
| Intercom | Support conversations, ticket volumes |
| Stripe | Subscriptions, invoicing, payment history |
| Product database | Feature usage, login frequency, engagement scores |
| Google Analytics | Website behavior, acquisition channels |

The Previous Attempt (Without Consulting)

- Connected all five systems to Snowflake using Fivetran, took 2 weeks

- Built dbt models to combine the data, took 3 months

- Launched a churn dashboard in Looker, took 2 more weeks

- Result: nobody trusted it

- Customer counts didn’t match between Salesforce and Stripe

- “Active customer” was defined differently in every model

- Churn rate varied depending on which dashboard you looked at

- The project stalled. The team moved on to other priorities.

The Second Attempt (With Data Integration Consulting)

Week 1–3: Discovery and Assessment