Introduction

At some point in every data integration consulting engagement, usually before it starts, sometimes midway through, and almost always afterward, someone in leadership asks the same question:

“What are we getting for this money?”

It’s a fair question. A necessary question. And one that most organizations struggle to answer clearly.

Not because the value isn’t there. But because integration ROI doesn’t fit neatly into the models that leadership is used to seeing.

Why Integration ROI Is Hard to Pin Down

Marketing ROI is often more direct to model than integration ROI, though attribution still varies by channel, lifecycle, and sales cycle length. Product ROI is also measurable: launch a feature, track adoption, calculate revenue impact.

Data integration consulting ROI is different. The value often appears across multiple line items and stakeholders rather than a single owner:

- Prevented costs: the migration failure that didn’t happen, the compliance violation that was avoided, the rework cycle that was never needed

- Distributed benefits: faster reporting for finance, better personalization for marketing, cleaner training data for the AI team, more reliable dashboards for leadership. No single team “owns” the full ROI.

- Indirect impact: integration doesn’t generate revenue directly. It enables the initiatives that generate revenue. The value is real but one step removed from the P&L.

- Time-shifted returns: the investment happens in Q1. The full returns materialize in Q3 and Q4. By then, nobody’s connecting the dots back to the integration work.

This measurement challenge creates a dangerous dynamic.

The Cost of Not Measuring

When organizations can’t quantify integration ROI, predictable things happen:

Integration Gets Treated as a Cost Center

- Leadership sees the consulting invoice but can’t see the value it created

- The engagement gets categorized alongside infrastructure expenses, necessary but not strategic

- Future investment requests are harder to justify because there’s no demonstrated return from previous ones

Budgets Get Cut Prematurely

- The integration initiative is delivering value, but nobody’s measuring it

- A budget review happens. The CFO asks which line items can be reduced.

- Integration consulting, with no documented ROI, is an easy target

- The engagement gets cut. The partially built foundation starts to degrade. Months later, the same problems often return.

The Same Mistakes Get Repeated

- Without ROI measurement, there’s no organizational memory of what worked

- The next integration initiative starts from scratch, same discovery, same mistakes, same stalled outcomes

- The cycle of invest → can’t prove value → cut budget → problems return → invest again becomes permanent

The Thesis

The ROI of data integration consulting is measurable. It just requires knowing what to measure, when to measure it, and how to connect integration outcomes to business impact.

The problem isn’t that integration value is unmeasurable. The problem is that most organizations are looking for ROI in the wrong places, at the wrong times, using the wrong metrics.

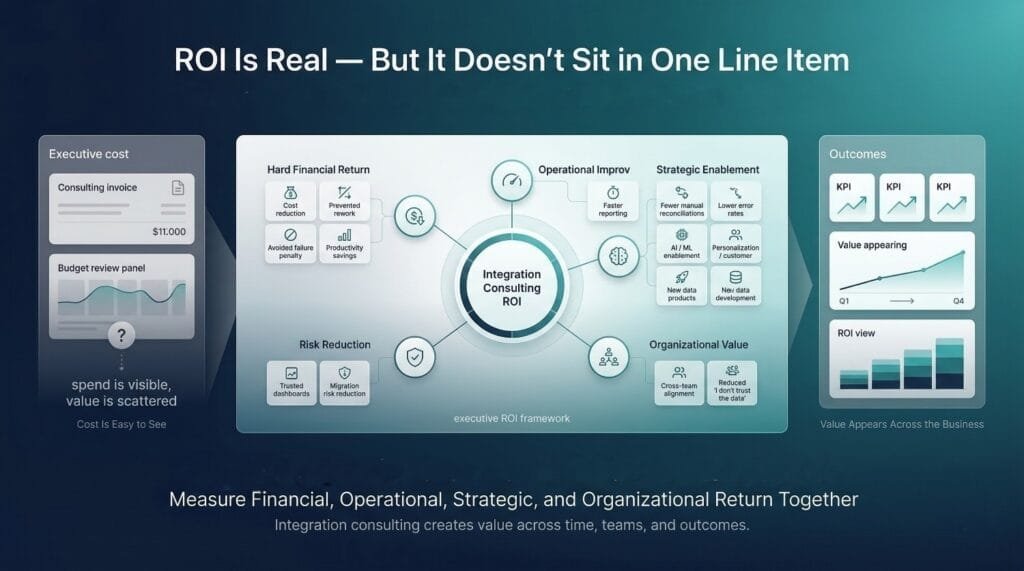

Integration ROI isn’t a single number. It’s usually a multi-dimensional picture that spans:

- Hard financial returns: reduced costs, avoided waste, recovered productivity

- Operational improvements: faster processes, fewer errors, less manual work

- Strategic enablement: analytics, AI, personalization, and compliance initiatives that couldn’t have happened without integrated data

- Risk reduction: failed projects prevented, compliance violations avoided, trust preserved

- Organizational value: culture shift from “I don’t trust the data” to data-driven decision-making

Each of these dimensions can be measured to a practical degree using a mix of financial metrics and well-chosen proxies. Each contributes to the total ROI picture. And together, they make a compelling case that data integration consulting isn’t a cost; it can be one of the highest-leverage investments a data organization makes.

What You'll Walk Away With

By the end of this post, you’ll have:

- A practical framework for measuring integration consulting ROI across multiple dimensions, financial, operational, strategic, and organizational

- Specific metrics and formulas you can apply to your own organization, not theoretical models, but calculations you can run with real numbers

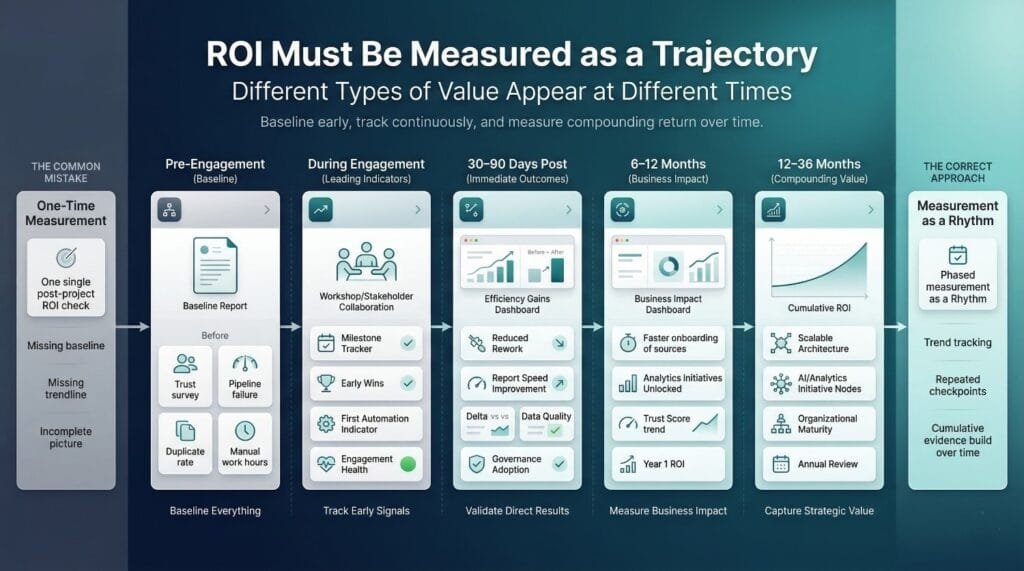

- Guidance on when to measure, because ROI shows up at different times for different value dimensions

- A communication strategy for presenting integration ROI to leadership, in language that resonates with CFOs, CEOs, and boards

- The confidence to make the case for data integration consulting as a strategic investment, not just a line item to be defended

Let’s build the framework.

Why Measuring Integration Consulting ROI Is Difficult (But Not Impossible)

Before building the measurement framework, let’s be honest about why this is hard. Not to make excuses, but because understanding the measurement challenges is essential to designing metrics that actually work.

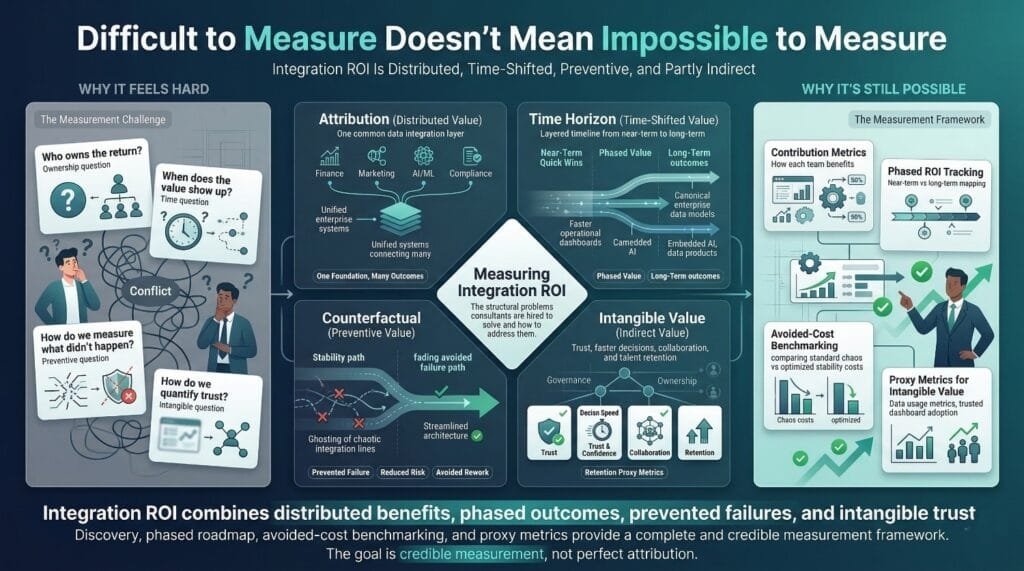

There are four core problems. Each one is real. None of them are unsolvable.

The Attribution Problem

The Challenge

Data integration consulting improves the foundation. But foundations are invisible. What people see, and what leadership measures, are the things built on top of that foundation.

Consider what happens after a successful integration engagement:

- Finance closes the books 3 days faster. Is that an integration win or a finance win?

- The AI team’s churn model is 22% more accurate. Is that a data science achievement or an integration achievement?

- Marketing’s personalization engine increases conversion by 15%. Is that marketing ROI or integration ROI?

- The compliance team passes an audit without findings. Is that a governance success or a consulting success?

The honest answer to all of these is both. Integration made each of them possible. But the visible credit goes to the team that delivered the final output, not the team that built the data foundation underneath it.

Why This Makes ROI Hard

- Multiple teams and projects benefit from the same integration work

- No single initiative “owns” the full return

- The people who experience the benefit often don’t know integration was involved

- Standard ROI models expect a clean line from investment to return, integration creates a web, not a line

The Analogy

Measuring the ROI of data integration consulting is like measuring the ROI of a building’s foundation. Nobody buys a building because of its foundation. But without the foundation, nothing above it stands.

The foundation doesn’t get credit for the visible outcomes. But without it, many of those outcomes would not hold up at scale, under audit, or in day-to-day reliability.

How to Solve It

Don’t try to claim 100% attribution for downstream outcomes. Instead, establish contribution metrics: documented evidence that integration was a necessary enabler for specific business results. We’ll build this into the framework in later sections.

The Time Horizon Problem

The Challenge

Integration ROI doesn’t arrive all at once. Different types of value materialize on different timelines.

Near-term returns (weeks to months):

- Reduced manual data preparation time

- Faster data delivery to the warehouse

- Eliminated spreadsheet-based workarounds

- Fewer pipeline failures and faster recovery times

Medium-term returns (3–12 months):

- Improved reporting accuracy and consistency

- Analytics initiatives unblocked and delivering insights

- Analyst productivity recovered from data janitor work

- Stakeholder trust in data increasing

Long-term returns (1–3 years):

- Better strategic decisions based on trusted, unified data

- Technical debt significantly reduced

- Architecture is positioned to handle material growth with fewer redesign cycles, depending on workload patterns and governance maturity

- AI/ML initiatives producing reliable results

- Organizational culture shifted toward data-driven decision-making

Why This Makes ROI Hard

- Leadership typically wants ROI demonstrated within the first quarter of an engagement

- The most valuable returns, strategic enablement, cultural change, avoided rebuilds, take 12+ months to fully materialize

- Short-term ROI calculations capture the quick wins but miss the compounding value

- Budget reviews happen on annual cycles, but integration ROI compounds over multi-year horizons

How to Solve It

Measure ROI in phases (near-term, medium-term, and long-term), with different metrics appropriate to each horizon. Set expectations upfront that the full ROI picture emerges over time, while demonstrating quick wins early to build confidence. We’ll build this timeline into the framework.

The Counterfactual Problem

The Challenge

Some of the highest-value outcomes of data integration consulting are things that didn’t happen:

- The cloud migration that didn’t fail because architecture was designed correctly from the start

- The compliance violation that didn’t occur because governance and lineage were implemented before the audit

- The integration project that didn’t stall for the third time because consulting addressed the root causes that previous attempts missed

- The six months of rework that didn’t happen because the data model was designed right the first time

- The executive trust erosion that didn’t occur because dashboards showed accurate numbers from day one

Why This Makes ROI Hard

It’s genuinely difficult to prove the value of something that was prevented.

- You can’t measure revenue from a crisis that didn’t happen

- You can’t quantify the cost of a project failure that was avoided

- You can’t show a before-and-after for a problem that never materialized

- Leadership is naturally skeptical of claims like “we saved you $500K by preventing a failure you never saw”

How to Solve It

Use benchmarking and industry data to estimate counterfactual costs. If 85% of big data projects fail (Gartner), and your consulting-guided project succeeded, the counterfactual cost is estimable. If the average failed migration costs $X in rework and delays, and yours didn’t fail, the avoided cost is calculable.

It’s not perfect attribution. But it’s reasonable estimation, and it’s far better than ignoring prevented costs entirely.
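One way to turn this into a number is a simple expected-value estimate: a benchmark failure probability multiplied by the estimated cost of a failure. The Python sketch below is illustrative only. The function name and the $400,000 failure cost are assumptions made up for the example, and the 0.85 rate is just the benchmark figure quoted above.

```python
def expected_avoided_cost(failure_probability: float, cost_of_failure: float) -> float:
    """Expected cost of a failure that did not happen (probability x impact)."""
    if not 0.0 <= failure_probability <= 1.0:
        raise ValueError("failure_probability must be between 0 and 1")
    return failure_probability * cost_of_failure


# Hypothetical inputs: benchmark failure rate of 85%, assumed $400K cost
# of rework and delay if the migration had failed.
estimate = expected_avoided_cost(0.85, 400_000)
print(f"Estimated avoided cost: ${estimate:,.0f}")
```

A more conservative variant multiplies the cost by the reduction in failure probability (benchmark rate minus your own estimated rate with consulting) rather than by the full benchmark rate.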

The Intangible Value Problem

The Challenge

Some of the most impactful outcomes of data integration consulting are hard to quantify directly:

- Data trust: the shift from “I don’t trust the data” to leadership confidently citing dashboards in board meetings

- Cross-team collaboration: departments that used to guard their data now sharing willingly because there’s a governed, unified model

- Decision speed: questions that used to take days of manual data assembly now answered in minutes

- Organizational data literacy: teams understanding where data comes from, what it means, and how to use it correctly

- Talent retention: data professionals staying because they’re doing meaningful work instead of maintaining broken infrastructure

Why This Makes ROI Hard

- These benefits are real and significant, often the most significant long-term outcomes

- But they don’t translate directly into dollar amounts

- CFOs are understandably skeptical of “trust improved” as an ROI metric

- Purely financial ROI calculations exclude these benefits entirely, creating an incomplete and misleading picture

How to Solve It

Do not force intangible value into a single financial formula. Instead, connect it to operational proxies and, where possible, to downstream cost or revenue sensitivity, using proxy metrics that leadership can understand and track:

- Data trust → adoption rates (percentage of decisions referencing integrated data)

- Decision speed → time-to-insight (hours from question to answer, before vs. after)

- Collaboration → cross-departmental data usage (number of teams actively querying unified datasets)

- Talent retention → attrition rates in data roles, before and after the engagement

These aren’t dollar amounts. But they’re measurable, trackable, and meaningful, and they complete the ROI picture that financial metrics alone can’t capture.

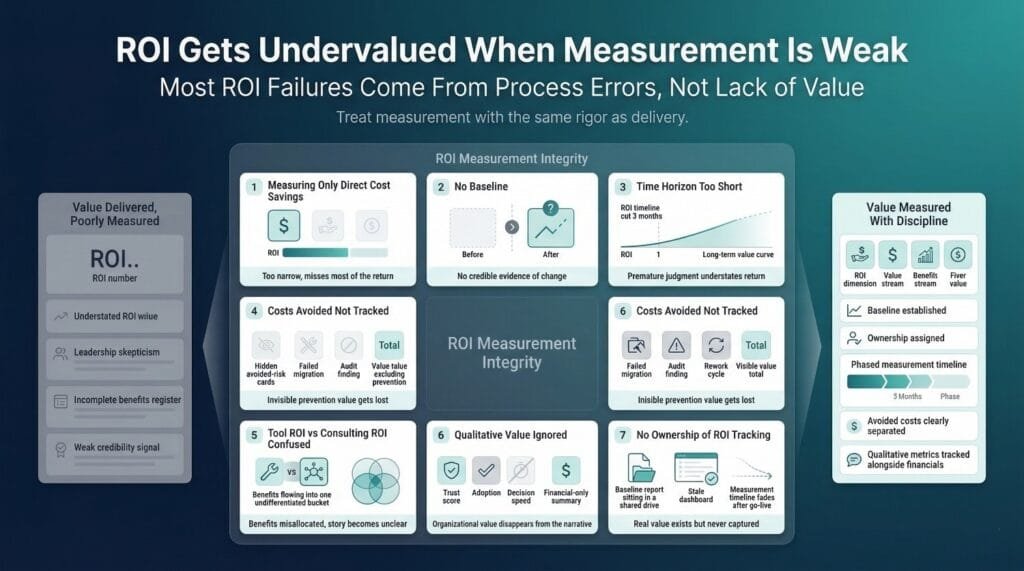

Why Organizations Still Must Measure It

Despite these challenges, not measuring integration ROI is worse than measuring it imperfectly.

Accountability for Investment Decisions

Leadership allocated significant budget to this engagement. They deserve, and will demand, evidence that the investment produced results. Having an imperfect measurement framework is infinitely better than having no answer at all.

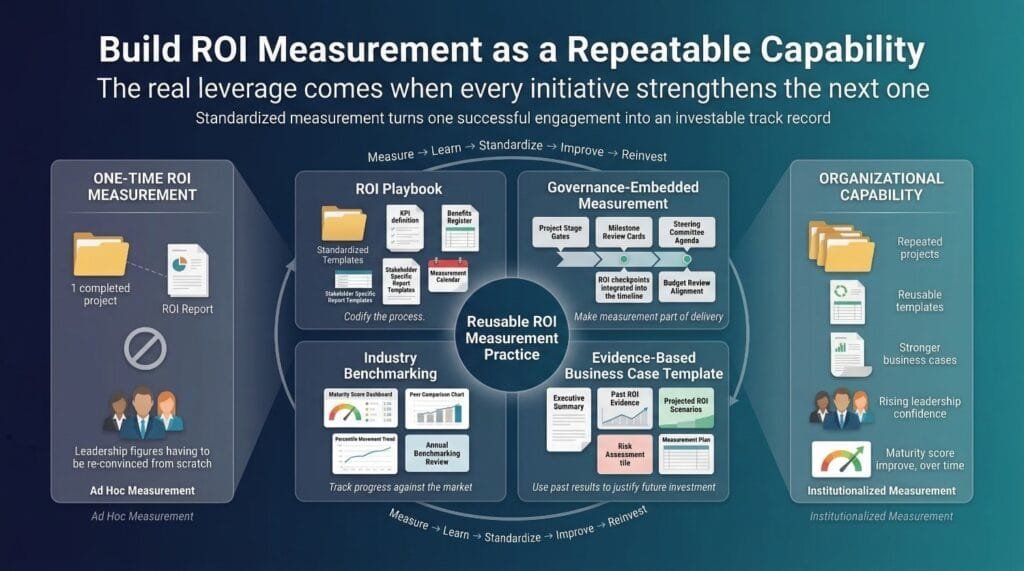

Justifying Future Integration Spending

Integration isn’t a one-time investment. New sources, new requirements, new regulations, and business growth all create ongoing integration needs. Without documented ROI from previous engagements, every future budget request starts from zero, fighting for justification instead of building on proven returns.

Identifying What's Working and What Isn't

ROI measurement isn’t just for leadership reporting. It’s for learning. Which aspects of the engagement delivered the most value? Where were the returns lower than expected? What should be adjusted for the next phase? Without measurement, you can’t optimize.

Building the Case for Ongoing Data Investment

Organizations that invest consistently in data infrastructure (integration, governance, quality) tend to outperform those that invest sporadically. But consistent investment requires consistent evidence of return. ROI measurement creates the feedback loop that sustains ongoing investment.

The Bottom Line

Imperfect measurement of real value is always better than precise measurement of nothing.

The measurement challenges are real. But they’re not reasons to skip measurement; they’re reasons to build a framework that accounts for them. That’s exactly what we’ll do in the next sections.

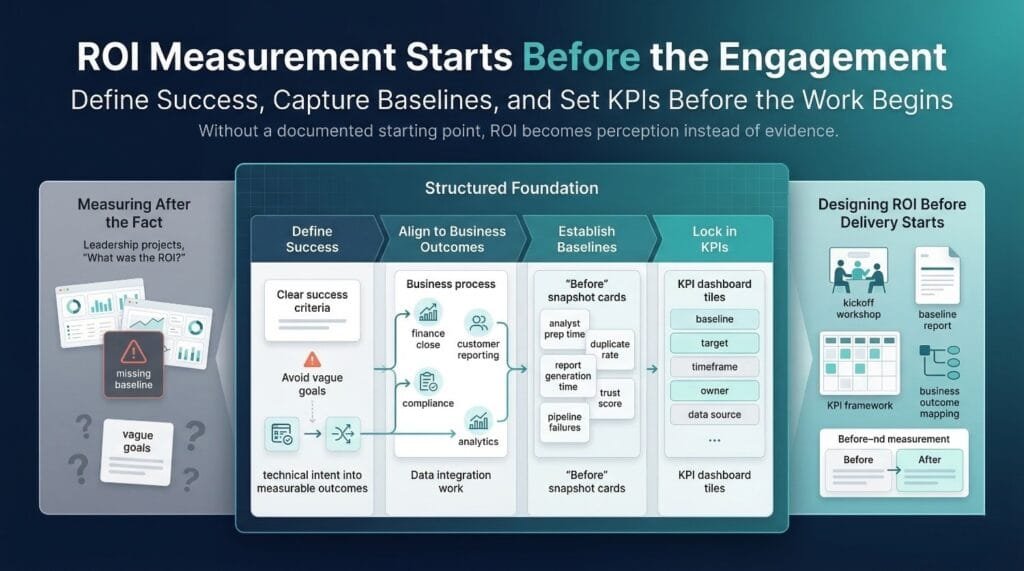

Setting the Foundation: Define Success Before You Start

Most ROI measurement failures trace back to the same root cause: success and baselines were not defined before the engagement began.

This section fixes that. Before a single pipeline is built or a single architecture diagram is drawn, these four steps need to happen, or your ROI measurement will always be guesswork.

The Biggest Mistake: Measuring ROI After the Fact

The Pattern

- Organization engages data integration consulting

- The engagement often runs for several months, depending on scope, data landscape complexity, and compliance requirements

- The work is delivered, architecture, pipelines, governance, documentation

- Leadership asks: “What was the ROI?”

- The data team scrambles to find metrics that show value

- Nobody documented the starting point, so there’s nothing to compare against

- The ROI conversation becomes a debate about perception instead of a discussion grounded in evidence

Why This Happens

- The team was focused on delivery, not measurement

- Success criteria felt obvious, “make the data better”, so nobody formalized them

- Baselining the current state felt like extra work that delayed the “real” work

- Leadership assumed ROI would be self-evident after the project shipped

Why It Destroys ROI Measurement

Without a documented starting point, you cannot measure improvement credibly. You can only make claims.

- “Reporting is faster now” → How much faster? Compared to what?

- “Data quality improved” → From what baseline? By what percentage?

- “Teams trust the data more” → Based on what evidence? How did you measure trust before?

Claims without baselines aren’t ROI. They are anecdotes, and anecdotes rarely hold up under CFO-level scrutiny.

The Fix

Define success criteria and establish baselines before the engagement starts. Not during. Not after. Before.

This should be a formal deliverable of the engagement’s kickoff, agreed upon by the consulting team, the internal data team, and the business stakeholders who will ultimately judge whether the investment paid off.

Aligning Integration Goals with Business Outcomes

The Problem with Vague Goals

Most integration initiatives start with goals like:

- “Improve our data”

- “Integrate our systems”

- “Build a single source of truth”

- “Modernize our data infrastructure”

These aren’t goals. They’re directions. You can’t measure ROI against a direction because you can never determine whether you’ve arrived.

What Business-Aligned Goals Look Like

Every data integration consulting engagement should map to specific, measurable business outcomes, not technical activities.

Vague goal → Business-aligned goal:

- “Improve our data” → “Reduce customer record duplication from 35% to under 3%, enabling accurate customer count reporting across all departments”

- “Integrate our systems” → “Create a unified financial view across NetSuite, Salesforce, and our billing platform to reduce monthly close time from 15 days to 5 days”

- “Build a single source of truth” → “Deliver a governed customer data model that supports a 10% improvement in cross-sell conversion by enabling personalization based on unified purchase and engagement history”

- “Modernize our data infrastructure” → “Achieve SOX compliance for consolidated financial data by Q3, with full lineage tracking from source to regulatory report”

How to Get There

For every integration initiative, answer three questions:

- What business decision or process will this integration improve? Not “what data will move”, what will change for the business.

- How will we measure that improvement? A specific metric with a current value and a target value.

- By when should the improvement be measurable? A realistic timeframe tied to business cycles, not just project milestones.

If you can’t answer all three, the goal is likely not defined clearly enough to measure ROI against.

Establishing a Baseline

Why Baselines Are Critical

A baseline is the documented current state, the “before” snapshot that every “after” measurement is compared against. Without it, ROI is speculation.

With baseline: “Analyst time spent on data preparation decreased from 62% to 18%, a 71% reduction.”

Without baseline: “We think analysts spend less time on data prep now.”

One is evidence. The other is a feeling. Leadership funds evidence.
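The "with baseline" statement mixes two different numbers: a drop in percentage points and a relative reduction against the baseline. A short Python sketch of the arithmetic, using the figures from the example (the function name is illustrative):

```python
def relative_reduction(before: float, after: float) -> float:
    """Relative reduction from `before` to `after`, as a percentage of the baseline."""
    if before == 0:
        raise ValueError("baseline must be non-zero")
    return (before - after) / before * 100


# Share of analyst time spent on data preparation: 62% before, 18% after.
point_drop = 62 - 18
relative_drop = relative_reduction(62, 18)
print(f"{point_drop} percentage points, {relative_drop:.0f}% relative reduction")
```

Reporting both figures, and labeling which is which, avoids the common confusion between "44 points lower" and "71% lower".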

Quantitative Baselines to Document

Before the engagement begins, measure and record:

Time metrics:

- Hours per week analysts spend on manual data cleaning and reconciliation

- Time to generate standard reports (monthly close, quarterly board deck, customer analytics)

- Time to onboard a new data source into the existing architecture

- Time to resolve a data-related support ticket or inquiry

Quality metrics:

- Duplicate record rate across key entities (customers, products, transactions)

- Data error rate, percentage of records with known quality issues

- Pipeline failure rate, number of failures per week or month

- Schema drift incidents, unplanned changes that break downstream systems

Cost metrics:

- Engineering hours spent maintaining existing pipelines vs. building new capabilities

- Annual spend on tools and infrastructure supporting the current integration state

- Cost of manual workarounds, spreadsheets, CSV exports, email-based data sharing

Volume metrics:

- Number of data sources currently integrated

- Number of active pipelines

- Total data volume being moved and processed

Qualitative Baselines to Document

Quantitative metrics tell part of the story. Qualitative baselines tell the rest:

- Stakeholder trust survey, a simple 1–5 rating from key stakeholders: “How confident are you in the accuracy and reliability of the data you use for decisions?” Conducted before the engagement, repeated after.

- Data dispute frequency, how often do departments escalate conflicting numbers? Track the count and severity for 30–60 days before the engagement.

- Decision-making patterns, are executives referencing dashboards in meetings, or are they relying on gut instinct and spreadsheets? Document the observed pattern.

- Team satisfaction: are data engineers and analysts frustrated with the current state? A brief survey captures sentiment that turnover data alone won’t show.

How to Capture Baselines Efficiently

This doesn’t need to be a month-long research project. A focused baselining effort typically takes 1–2 weeks:

- Pull quantitative metrics from existing monitoring tools, project management systems, and time tracking

- Run a short stakeholder survey, 5 questions, 5-point scale, distributed to 15–20 key stakeholders

- Interview 3–5 team leads for qualitative context

- Document everything in a shared baseline report that becomes the official reference point

The consulting team should help with this. In fact, baselining should be a standard deliverable in the discovery phase of any data integration consulting engagement.

Defining KPIs for the Engagement

How Many KPIs

Aim for 5–10 measurable KPIs that span multiple value dimensions. Fewer than 5 may not capture the full picture. More than 10 often creates measurement overhead that is difficult to sustain.

KPI Categories

Spread your KPIs across these five categories to ensure comprehensive coverage:

Efficiency KPIs, Are we doing things faster?

- Time to generate monthly financial reports

- Hours per week spent on manual data preparation

- Time to onboard a new data source

Quality KPIs, Is the data better?

- Duplicate record rate across key entities

- Pipeline failure rate per month

- Data accuracy score (percentage of records passing validation rules)

Speed KPIs, Is data arriving faster?

- Data freshness, latency between source event and warehouse availability

- Time from data request to data delivery

- Query performance on integrated datasets

Cost KPIs, Are we spending less on waste?

- Engineering hours on maintenance vs. new development

- Cost of manual workarounds eliminated

- Total integration infrastructure spend relative to data volume

Business Impact KPIs, Is the business performing better?

- Revenue attributed to initiatives enabled by integrated data

- Compliance audit outcomes (findings reduced or eliminated)

- Stakeholder adoption rate of integrated data products

- Decision-making speed for data-dependent questions

Making KPIs Actionable

A KPI is only actionable when it has a baseline, a target, a timeframe, and an owner.

Example KPI Set

For a mid-market company engaging data integration consulting to unify customer data across CRM, billing, and product systems:

- Duplicate customer record rate: Baseline 34% → Target under 3% → 6 months → Owned by data engineering lead

- Monthly close time: Baseline 14 days → Target 5 days → 9 months → Owned by finance controller

- Analyst data prep time: Baseline 58% of working hours → Target under 20% → 6 months → Owned by analytics manager

- Pipeline failures per month: Baseline 11 → Target under 2 → 4 months → Owned by data engineering lead

- Stakeholder data trust score: Baseline 2.1/5.0 → Target 4.0/5.0 → 12 months → Owned by data product manager

- Cross-sell conversion rate: Baseline 4.2% → Target 6.0% → 12 months → Owned by marketing director

- Time to answer ad hoc data questions: Baseline 3–5 days → Target under 4 hours → 6 months → Owned by analytics manager

Notice how this set spans efficiency, quality, speed, cost, and business impact. No single category dominates. The full picture emerges from the combination.
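A KPI defined this way (baseline, target, timeframe, owner) maps naturally onto a small record that can be tracked during the engagement. The sketch below is one possible shape in Python, not a prescribed tool. The `Kpi` class, its `progress` method, and the mid-engagement readings are hypothetical; the baselines and targets come from the example KPI set.

```python
from dataclasses import dataclass


@dataclass
class Kpi:
    name: str
    baseline: float
    target: float
    months: int   # timeframe for reaching the target
    owner: str

    def progress(self, current: float) -> float:
        """Share of the baseline-to-target gap closed so far (1.0 = target met)."""
        gap = self.target - self.baseline
        if gap == 0:
            raise ValueError("target must differ from baseline")
        return (current - self.baseline) / gap


duplicates = Kpi("Duplicate customer record rate (%)", 34, 3, 6, "data engineering lead")
trust = Kpi("Stakeholder data trust score", 2.1, 4.0, 12, "data product manager")

# Hypothetical mid-engagement readings: 12% duplicates, trust score of 3.0.
print(f"{duplicates.name}: {duplicates.progress(12):.0%} of the way to target")
print(f"{trust.name}: {trust.progress(3.0):.0%} of the way to target")
```

Because progress is measured as a share of the gap, the same calculation works for metrics where lower is better (duplicates) and where higher is better (trust).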

The Non-Negotiable Rule

If a KPI isn’t defined before the engagement starts, it should not be heavily weighted in the ROI calculation afterward.

Retroactive KPIs, metrics identified after the work is done to make the results look good, aren’t credible. They’re cherry-picked. Leadership knows the difference.

Define the KPIs upfront. Measure them honestly. Report the results, including the ones that fell short. That’s how you build credibility for this engagement and every future investment in data integration consulting.

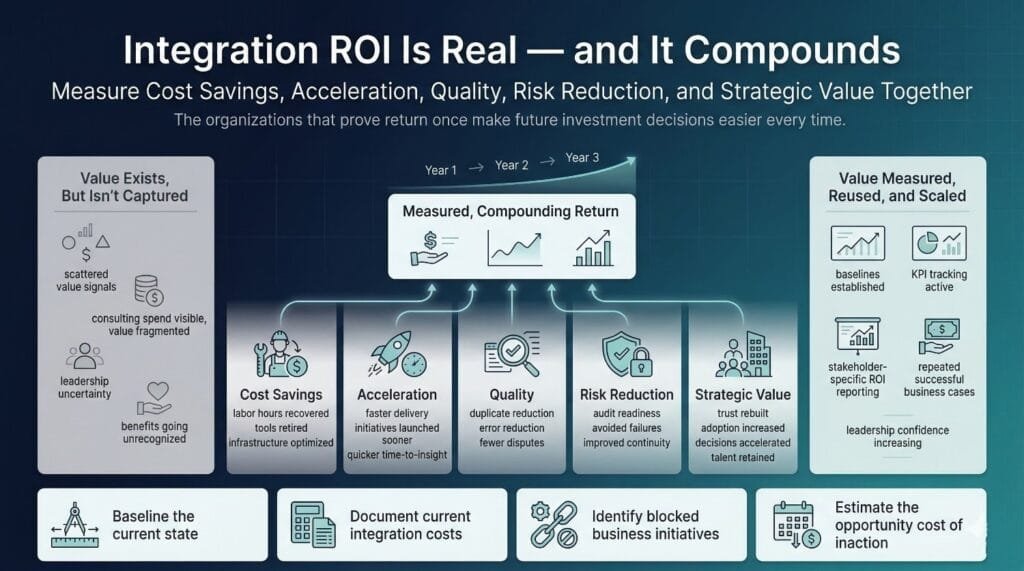

The ROI Measurement Framework

This is the framework that makes data integration consulting ROI measurable, communicable, and defensible.

Most organizations make the mistake of looking for ROI in a single dimension, usually cost savings. That captures maybe 20% of the value. The other 80% lives in acceleration, quality, risk reduction, and strategic enablement.

This framework covers all five.

Dimension 1, Direct Cost Savings

The most tangible, easiest-to-communicate dimension. This is where you start when talking to the CFO.

Reduction in Manual Data Work

These are the hours your team currently spends on manual extraction, transformation, reconciliation, and reporting that are either automated or eliminated through proper integration.

How to calculate:

(Hours saved per week × Fully loaded hourly cost) × 52 weeks = Annual savings

Example: 3 analysts each save 12 hours/week on manual data prep. Fully loaded cost is $75/hour.

(36 hours × $75) × 52 = $140,400/year

This is often one of the largest and fastest-appearing cost savings from a data integration consulting engagement, especially in analytics-heavy organizations.
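The same calculation as a small Python function, so it can be rerun with your own numbers. The function name and the `weeks` parameter are illustrative; the inputs are the ones from the example.

```python
def annual_labor_savings(hours_saved_per_week: float, hourly_cost: float, weeks: int = 52) -> float:
    """(Hours saved per week x fully loaded hourly cost) x weeks per year."""
    return hours_saved_per_week * hourly_cost * weeks


analysts = 3
hours_saved_each = 12
savings = annual_labor_savings(analysts * hours_saved_each, 75)
print(f"Annual savings: ${savings:,}")
```

Passing a lower `weeks` value to account for holidays and leave gives a more conservative figure.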

Elimination of Redundant Tools and Licenses

Successful integration often reveals, and enables the retirement of, overlapping tools, middleware, custom scripts, and duplicate platforms that exist because data wasn’t unified.

How to calculate:

Annual cost of eliminated tools and infrastructure.

Example: Two overlapping ETL tools ($45K/year each) and a legacy middleware platform ($30K/year) are retired after consolidation.

$45K + $45K + $30K = $120,000/year

Reduced Data Infrastructure Costs

Better architecture often means more efficient use of cloud compute, storage, and processing, eliminating redundant data copies, optimizing query patterns, and right-sizing infrastructure.

How to calculate:

(Monthly infrastructure spend before − Monthly infrastructure spend after) × 12 = Annual savings

Example: Warehouse compute costs drop from $18K/month to $11K/month after architecture optimization.

($18K − $11K) × 12 = $84,000/year

Reduced Rework and Error Correction

Every hour spent fixing broken pipelines, correcting bad data, and re-running failed processes is an hour that proper integration eliminates.

How to calculate:

(Average monthly rework hours before − Average monthly rework hours after) × Hourly cost × 12 = Annual savings

Example: Team spent 60 hours/month on rework at $85/hour. After consulting, rework drops to 10 hours/month.

(60 − 10) × $85 × 12 = $51,000/year
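Both the infrastructure and the rework calculations annualize a monthly difference, so the subtraction has to happen before the multiplication by 12. A minimal Python sketch using the figures from the two examples (the function name is illustrative):

```python
def annualized_monthly_delta(before: float, after: float) -> float:
    """Annual savings from a monthly cost that dropped from `before` to `after`."""
    return (before - after) * 12


# Infrastructure example: $18K/month down to $11K/month.
infra_savings = annualized_monthly_delta(18_000, 11_000)

# Rework example: 60 hours/month down to 10 hours/month at $85/hour.
rework_savings = annualized_monthly_delta(60 * 85, 10 * 85)

print(f"Infrastructure: ${infra_savings:,}/year")
print(f"Rework:         ${rework_savings:,}/year")
```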

Avoided Hiring

When integration is done well, you may avoid hiring additional engineers or architects that would otherwise have been required to manage a fragmented environment.

How to calculate:

Number of FTEs avoided × Fully loaded annual cost per FTE

Example: The engagement eliminated the need for 1 additional senior data engineer ($165K fully loaded).

$165,000/year avoided

How to Track This Dimension

- Compare time logs and resource allocation before and after the engagement

- Pull infrastructure and tooling invoices on a quarterly basis

- Use project management data to quantify rework reduction

- Track headcount plans, what was budgeted vs. what was actually needed
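To see how the pieces of this dimension add up, the sketch below totals the five worked examples above. It assumes, purely for illustration, that all five applied to the same organization in the same year. The dictionary labels are shorthand, not terms from any standard.

```python
# Annual figures taken from the worked examples in this dimension.
direct_savings = {
    "Manual data work": 140_400,
    "Retired tools and licenses": 120_000,
    "Infrastructure": 84_000,
    "Rework": 51_000,
    "Avoided hiring": 165_000,
}

total = sum(direct_savings.values())

# Print the largest contributors first, with each item's share of the total.
for item, amount in sorted(direct_savings.items(), key=lambda kv: kv[1], reverse=True):
    print(f"{item:<28} ${amount:>9,}  {amount / total:5.1%}")
print(f"{'Total':<28} ${total:>9,}")
```

Keeping the line items separate, rather than reporting only the total, makes it easier to show which savings are already realized and which are still estimates.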

Dimension 2, Time-to-Value Acceleration

This dimension captures the value of getting results sooner. It’s less intuitive than cost savings but often higher in total impact, because delayed business value compounds.

Faster Project Delivery

How much sooner did the integration deliver usable results compared to a documented internal estimate or prior similar project?

How to calculate:

(Estimated internal timeline − Actual timeline with consulting) × Business value per month of delay

Example: Internal estimate was 14 months based on prior delivery velocity. With consulting, the project was delivered in 7 months. The analytics initiative this unblocked generates $50K/month in identified savings.

(14 − 7) × $50K = $350,000 in accelerated value
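As a hedged sketch, the same calculation in Python. The function and parameter names are illustrative, and the clamp to zero is an added assumption so that a late delivery never shows up as a gain.

```python
def accelerated_value(estimated_months: int, actual_months: int, monthly_value: float) -> float:
    """Value pulled forward by delivering earlier than the documented estimate."""
    months_gained = max(estimated_months - actual_months, 0)  # no credit if delivery was late
    return months_gained * monthly_value


value = accelerated_value(estimated_months=14, actual_months=7, monthly_value=50_000)
print(f"Accelerated value: ${value:,}")
```

The result is only as credible as the internal estimate, so the 14-month figure should come from a documented plan or a comparable prior project rather than a retrospective guess.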

Reduced Time-to-Insight

How quickly can analysts and business users access integrated, trustworthy data, from question to answer?

How to calculate:

Average time-to-insight (before) vs. (after)

Example: Before integration, answering a cross-system customer question took 3–5 business days of manual assembly. After, it takes under 2 hours.

This doesn’t have a clean dollar amount, but it directly enables faster decision-making, which the strategic dimension captures.

Track it as: Average hours from data request to delivered insight, measured monthly.

Faster Onboarding of New Data Sources

A well-architected integration environment makes adding new sources dramatically faster.

How to calculate:

Average onboarding time (before) vs. (after)

Example: Before consulting, adding a new data source took 6–8 weeks of custom development. After, it takes 3–5 days using the architecture and patterns established during the engagement.

Track it as: Days to integrate a new source, measured for each new addition post-engagement.

Accelerated Dependent Initiatives

Faster integration often unblocks other high-value projects that were waiting on data.

How to calculate:

Estimated revenue or cost impact of dependent initiatives × Months of acceleration

Example: The AI-powered churn prediction model was blocked by data readiness. Integration consulting unblocked it 4 months ahead of schedule. The model is projected to save $200K/year in reduced churn.

4 months × ($200K ÷ 12) = $66,667 in accelerated value
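When the benefit is quoted per year, it has to be converted to a monthly figure before multiplying by the months gained. A short Python sketch with the example's numbers (the function name is illustrative, and the $200K is a projection, so the output is an estimate):

```python
def accelerated_annual_benefit(annual_benefit: float, months_accelerated: int) -> float:
    """Portion of a projected annual benefit realized early."""
    return annual_benefit / 12 * months_accelerated


value = accelerated_annual_benefit(annual_benefit=200_000, months_accelerated=4)
print(f"Accelerated value: ${value:,.0f}")
```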

How to Track This Dimension

- Compare planned vs. actual project timelines and document the delta

- Survey business users on time-to-insight before and after, a simple quarterly pulse

- Track new source onboarding times as a recurring operational metric

- Maintain a dependency map showing which initiatives were unblocked by integration

Dimension 3, Data Quality Improvement

Quality is the dimension that connects most directly to data trust, the intangible factor with an outsized long-term impact.

Duplicate Record Reduction

How to calculate:

(Duplicate count before − Duplicate count after) ÷ Total records × 100 = Percentage point reduction in duplicate rate

Example: 340,000 customer records. 119,000 duplicates identified (35%). After entity resolution, 6,800 remain (2%).

Duplicate rate reduced from 35% to 2%, a 33 percentage point reduction and approximately a 94% relative reduction in duplicates.
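The two ways of stating this result come from different denominators: total records for the percentage point change, and the original duplicate count for the relative change. A small Python sketch with the example's figures (variable names are illustrative, and both rates are assumed to use the original record count, as the example does):

```python
total_records = 340_000
duplicates_before = 119_000
duplicates_after = 6_800

# Duplicate rates as a percentage of all records.
rate_before = duplicates_before / total_records * 100
rate_after = duplicates_after / total_records * 100

# Percentage point change in the rate vs. relative change in the duplicate count.
point_reduction = rate_before - rate_after
relative_reduction = (duplicates_before - duplicates_after) / duplicates_before * 100

print(f"Duplicate rate: {rate_before:.0f}% -> {rate_after:.0f}%")
print(f"{point_reduction:.0f} percentage points, {relative_reduction:.0f}% relative reduction")
```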

Data Error Rate Reduction

How to calculate:

Errors per 10,000 records (before) vs. (after)

Example: Data profiling found 847 errors per 10,000 records before the engagement. After quality remediation and governance implementation, the rate dropped to 23 per 10,000.

97.3% reduction in error rate

Data Completeness Improvement

How to calculate:

Percentage of records with all required fields populated (before) vs. (after)

Example: Customer address completeness was 64% before the engagement. After standardization and enrichment, it’s 96%.

32 percentage point improvement in completeness

Reduction in Data Disputes

How to calculate:

Number of data escalations or dispute tickets per quarter (before) vs. (after)

Example: Before the engagement, the data team received an average of 14 escalations per quarter about conflicting metrics. After governance and shared definitions were implemented, escalations dropped to 2 per quarter.

86% reduction in data disputes
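The error rate and dispute examples both use the same before-and-after comparison. A minimal sketch, assuming a hypothetical helper named `relative_reduction_pct`:

```python
def relative_reduction_pct(before: float, after: float) -> float:
    """Percentage drop from a baseline value to a later value."""
    if before <= 0:
        raise ValueError("baseline must be positive")
    return (before - after) / before * 100


error_rate_drop = relative_reduction_pct(847, 23)  # errors per 10,000 records
dispute_drop = relative_reduction_pct(14, 2)       # escalations per quarter
print(f"Error rate: {error_rate_drop:.1f}% lower, disputes: {dispute_drop:.0f}% lower")
```

The guard on the baseline matters in practice: a metric that was never baselined, or was baselined at zero, cannot produce a meaningful percentage.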

Business Impact of Improved Quality

Better data quality doesn’t just look good in a profiling report, it drives measurable downstream improvements.

Track these proxy metrics:

- Forecast accuracy improvement (finance)

- Campaign targeting precision (marketing)

- Customer satisfaction scores tied to data-driven personalization

- AI/ML model accuracy improvement attributable to cleaner training data

How to Track This Dimension

- Run data profiling reports at regular intervals, monthly or quarterly

- Use automated quality monitoring tools like Great Expectations, Monte Carlo, or Soda

- Track data dispute tickets through your existing ticketing system

- Survey business users quarterly on data trust and usability with a simple 1–5 scale

Dimension 4, Risk Reduction and Compliance

This dimension captures the value of things that didn’t go wrong, the hardest to measure but often the most financially significant.

Compliance Audit Readiness

How to calculate:

Hours spent on audit preparation (before) vs. (after)

Example: The finance team previously spent 320 hours per audit cycle assembling data, tracing lineage manually, and filling documentation gaps. After integration consulting implemented governance and lineage tracking, preparation dropped to 45 hours.

275 hours saved × $95/hour = $26,125 per audit cycle

Audit Findings Reduction

How to calculate:

Number and severity of data-related findings (before) vs. (after)

Example: Previous SOX audit surfaced 7 data-related findings, 2 classified as significant deficiencies. Post-integration audit surfaced 1 minor observation.

Quantify the cost of remediating findings, each significant deficiency typically costs $50K–$200K in remediation effort, external auditor scrutiny, and management attention.

Avoided Penalties and Fines

How to calculate:

(Potential fine amount × Probability of violation before) − (Potential fine amount × Probability of violation after)

This requires estimation, but the estimates are defensible using regulatory penalty schedules and your organization’s pre-engagement risk assessment.

Example: GDPR fine exposure for a data subject access request failure. The maximum statutory penalty under GDPR can reach €20M or 4% of global annual turnover, whichever is higher. Use internally assessed probability ranges before and after the engagement, documented by compliance and security teams, rather than single point estimates.

Even conservative estimates produce significant risk-adjusted savings.
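One way to work with probability ranges instead of point estimates is to compute a low and a high bound on the risk-adjusted savings. The sketch below is only illustrative: the function name is an assumption, and the fine amount and probabilities are hypothetical placeholders, not figures from any real assessment.

```python
def avoided_exposure_range(
    fine: float,
    p_before: tuple[float, float],
    p_after: tuple[float, float],
) -> tuple[float, float]:
    """Return (low, high) risk-adjusted savings from (low, high) probability ranges."""
    low = fine * (p_before[0] - p_after[1])   # least favorable pairing
    high = fine * (p_before[1] - p_after[0])  # most favorable pairing
    return max(low, 0.0), max(high, 0.0)


# HYPOTHETICAL inputs for illustration only
low, high = avoided_exposure_range(
    fine=500_000,
    p_before=(0.05, 0.10),
    p_after=(0.01, 0.02),
)
print(f"Risk-adjusted savings: ${low:,.0f} to ${high:,.0f}")
```

Presenting the range rather than a midpoint makes the uncertainty in the estimate visible to leadership.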

Data Breach Risk Reduction

How to calculate:

Estimated breach cost × Risk reduction percentage

Use industry benchmarks, IBM’s annual Cost of a Data Breach report provides average costs by industry and region. If data integration consulting improved access controls, data classification, and lineage tracking, estimate the risk reduction with your security team.

Business Continuity Improvement

How to calculate:

(Downtime hours before − Downtime hours after) × Revenue impact per hour of downtime

Example: Critical pipeline failures caused an average of 14 hours of data unavailability per quarter. After architecture improvements, downtime dropped to under 1 hour per quarter.

13 hours recovered × $5,000 revenue impact per hour × 4 quarters = $260,000/year
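The same calculation as a small Python sketch. The function and parameter names are assumptions, and the example treats "under 1 hour" as 1 hour, as the arithmetic above does.

```python
def annual_downtime_savings(
    hours_before: float,
    hours_after: float,
    revenue_per_hour: float,
    periods_per_year: int = 4,
) -> float:
    """Annualized value of downtime recovered per measurement period."""
    recovered = hours_before - hours_after
    return recovered * revenue_per_hour * periods_per_year


savings = annual_downtime_savings(14, 1, 5_000)
print(f"${savings:,}/year")
```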

How to Track This Dimension

- Partner with compliance and legal teams to quantify audit preparation effort and findings

- Track audit results year-over-year with specific attention to data-related findings

- Conduct annual risk assessments with security and compliance, document the before and after

- Monitor pipeline uptime and data availability SLAs

Dimension 5, Strategic and Organizational Value

This is the dimension that doesn’t fit into spreadsheets, but often has the highest long-term impact on organizational performance.

Data Trust and Adoption

The shift from “I don’t trust the data” to leadership confidently citing dashboards in board meetings.

How to measure:

- BI platform adoption rates, daily/weekly active users, before and after

- Dashboard usage metrics, views, queries, exports

- Stakeholder trust survey, the same 1–5 scale used in baselining, repeated quarterly

- Qualitative indicator: are executives referencing data in decision meetings?

Decision-Making Speed

How to measure:

- Survey executives quarterly: “How quickly can you get the data you need to make a decision?”, before vs. after

- Track time from question to answer for common business inquiries

- Track the frequency of documented decision delays attributed to unavailable or unreliable data in leadership meetings

Cross-Functional Alignment

How to measure:

- Number of formally agreed-upon shared KPI definitions, zero before, documented count after

- Reduction in cross-departmental data escalations

- Stakeholder satisfaction surveys specifically measuring inter-team data collaboration

- Existence and adoption of a published business glossary

Internal Capability Uplift

How to measure:

- Reduction in external consulting dependency post-engagement, measured by tasks completed internally that previously required outside help

- Team skill assessments, formal or informal evaluation of integration competencies before and after

- Number of new data sources onboarded by the internal team without consulting assistance

- Quality of documentation and runbooks produced by the internal team

Scalability and Future-Readiness

How to measure:

- Time and cost to onboard new data sources, trending over time

- Architecture flexibility, can the current architecture support 2–3x growth without redesign?

- Number of new business initiatives supported by the integration architecture without requiring additional consulting

Employee Satisfaction and Retention

How to measure:

- Team satisfaction surveys, specifically targeting data engineering and analytics roles

- Retention rates in data roles, before and after the engagement

- Qualitative feedback on work quality, are engineers building new things or maintaining broken things?

- Recruitment ease, is the data infrastructure now an asset in hiring conversations rather than a warning?

How to Track This Dimension

- Conduct quarterly stakeholder surveys with consistent questions for trending

- Pull BI platform analytics for adoption and usage metrics

- Monitor team composition, retention, and satisfaction through HR data and direct feedback

- Maintain an annual architecture fitness assessment

The Five Dimensions, Summary

For quick reference, here’s the complete framework at a glance:

Dimension 1, Direct Cost Savings: Reduced manual work, eliminated tools, lower infrastructure costs, less rework, avoided hiring.

Dimension 2, Time-to-Value Acceleration: Faster project delivery, reduced time-to-insight, faster source onboarding, unblocked dependent initiatives.

Dimension 3, Data Quality Improvement: Fewer duplicates, lower error rates, better completeness, fewer data disputes, improved downstream outcomes.

Dimension 4, Risk Reduction and Compliance: Audit readiness, fewer findings, avoided penalties, reduced breach risk, improved business continuity.

Dimension 5, Strategic and Organizational Value: Data trust, decision speed, cross-functional alignment, capability uplift, scalability, talent retention.

No single dimension tells the full story. Together, they create a comprehensive, defensible ROI picture that captures both immediate financial returns and longer-term strategic value.

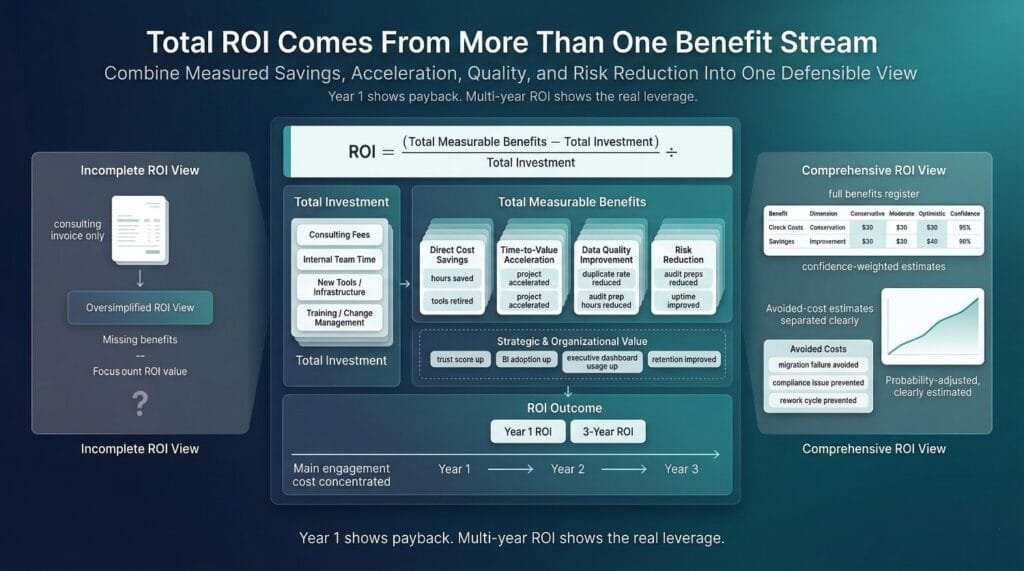

Calculating Total ROI: Putting It All Together

The five dimensions give you what to measure. This section gives you the how, the actual mechanics of turning those measurements into a defensible ROI number that leadership can evaluate, compare, and act on.

The Basic ROI Formula

The formula itself is simple:

ROI = (Total Measurable Benefits − Total Cost of Engagement) ÷ Total Cost of Engagement × 100%

The complexity isn’t in the formula. It’s in making sure both sides of the equation are honest and complete.

What to Include in Total Cost

Don’t just count the consulting invoice. Count everything the engagement required:

Consulting fees, the obvious one. The contract value of the engagement including any change orders.

Internal team time, your engineers, analysts, and stakeholders spent time working alongside the consultants. That’s real cost. Calculate it as hours contributed × fully loaded hourly rate.

New tools and infrastructure, if the engagement recommended a new iPaaS platform, data quality tool, or governance platform and you purchased it, include the incremental first-year cost attributable to the initiative. These were part of the investment, even though they weren’t on the consulting invoice.

Change management and training, workshops, training sessions, communication efforts, and the stakeholder time invested in adoption. If it wouldn’t have happened without the consulting engagement, it’s part of the cost.

Example total cost calculation:

A mid-sized company’s engagement:

- Consulting fees: $300,000

- Internal team time (estimated 1,200 hours at $85/hour average): $102,000

- New iPaaS platform (Year 1): $75,000

- Training and change management: $25,000

- Total cost: $502,000

Using only the $300K consulting fee in the denominator would overstate the ROI. Using the full $502K gives leadership an honest picture.
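To see how much the choice of denominator matters, the sketch below builds the total cost from the components above and compares the two ROI figures. The benefit amount is a hypothetical placeholder chosen only to show the gap, and the function and key names are assumptions.

```python
def roi_pct(total_benefits: float, total_cost: float) -> float:
    """ROI as a percentage of total cost."""
    if total_cost <= 0:
        raise ValueError("total_cost must be positive")
    return (total_benefits - total_cost) / total_cost * 100


costs = {
    "consulting_fees": 300_000,
    "internal_team_time": 1_200 * 85,  # hours x fully loaded hourly rate
    "ipaas_platform_year_1": 75_000,
    "training_and_change_mgmt": 25_000,
}
total_cost = sum(costs.values())

# HYPOTHETICAL benefit figure, used only to compare the two denominators
benefits = 600_000
fees_only = roi_pct(benefits, costs["consulting_fees"])
full_cost = roi_pct(benefits, total_cost)

print(f"Total cost: ${total_cost:,}")
print(f"ROI on fees only: {fees_only:.1f}%  |  ROI on full cost: {full_cost:.1f}%")
```

With these inputs, the fees-only view reports roughly five times the return of the full-cost view, which is exactly the kind of gap a skeptical finance team will find.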

That said, there’s a judgment call here. Some organizations exclude tool costs because those tools deliver value beyond the consulting engagement. Others exclude internal team time because those employees would have been paid regardless, although finance teams often treat opportunity cost as real when comparing investment alternatives. Be transparent about what you include and why, and be consistent across calculations.

Building a Comprehensive Benefits Register

The benefits register is where all five dimensions come together into a single, organized accounting of value delivered.

How to Build It

For every benefit identified across the five ROI dimensions, document:

The benefit, a clear, specific description of what improved.

The dimension, which of the five value categories it falls under.

The measurement, the actual metric, with before and after values.

Three estimates, because precision varies by benefit type:

- Conservative, the floor. Only includes value you can prove with hard data. This is the number you defend to skeptics.

- Moderate, the realistic middle. Includes reasonable estimates where direct measurement isn’t perfect but the logic is sound.

- Optimistic, the ceiling. Includes the full potential value, including downstream impacts that are harder to attribute directly.

Confidence level, your honest assessment of how reliable the estimate is. High confidence for directly measured savings. Medium for reasonable estimates. Low for extrapolations and projections.

Example Benefits Register

Benefit: Reduced analyst data preparation time

- Dimension: Direct Cost Savings

- Measurement: 36 hours/week saved across 3 analysts at $75/hour

- Conservative: $112,000/year (counting only about 80% of measured savings, to be safe)

- Moderate: $140,400/year (full measured value)

- Optimistic: $168,000/year (including productivity gains from analysts doing higher-value work)

- Confidence: High, measured directly through time tracking

Benefit: Analytics initiative launched 4 months early

- Dimension: Time-to-Value Acceleration

- Measurement: Customer analytics platform generating $50K/month in identified savings

- Conservative: $100,000 (2 months of accelerated value, heavily discounted)

- Moderate: $200,000 (4 months of accelerated value)

- Optimistic: $300,000 (including secondary initiatives unblocked)

- Confidence: Medium, timeline acceleration is documented, value per month is estimated

Benefit: Reduced compliance audit preparation

- Dimension: Risk Reduction

- Measurement: 275 hours saved per audit cycle

- Conservative: $20,000/year

- Moderate: $26,125/year

- Optimistic: $35,000/year (including reduced external auditor fees from cleaner documentation)

- Confidence: High, hours directly measurable through time tracking

Benefit: Duplicate record reduction from 35% to 2%

- Dimension: Data Quality

- Measurement: 112,200 duplicate records resolved

- Conservative: $40,000/year (reduced rework and customer service errors)

- Moderate: $80,000/year (including improved campaign targeting and personalization)

- Optimistic: $120,000/year (including improved customer lifetime value from better experience)

- Confidence: Medium, quality metrics measured directly, business impact estimated

Using a Weighted Approach

For the final ROI calculation, use the confidence-weighted moderate estimate as your primary number:

- High confidence benefits, use the moderate estimate at full value provided the measurement method remained consistent before and after

- Medium confidence benefits, apply a discount factor such as 75 percent to reflect estimation uncertainty

- Low confidence benefits, apply a deeper discount such as 50 percent or less depending on attribution strength

This approach is conservative enough to be credible and comprehensive enough to capture real value. When presenting to leadership, show all three scenarios, conservative, moderate, and optimistic, so they can see the range.
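The weighting can be sketched in a few lines of Python using the four example register entries. The `Benefit` class and function names are illustrative assumptions, and the 100/75/50 percent weights simply follow the example discount factors above; adjust them to your own policy.

```python
from dataclasses import dataclass

# Example discount factors from the text; tune them to your own policy
CONFIDENCE_WEIGHTS = {"high": 1.00, "medium": 0.75, "low": 0.50}


@dataclass
class Benefit:
    name: str
    conservative: float
    moderate: float
    optimistic: float
    confidence: str  # "high", "medium", or "low"


register = [
    Benefit("Reduced analyst data preparation time", 112_000, 140_400, 168_000, "high"),
    Benefit("Analytics initiative launched 4 months early", 100_000, 200_000, 300_000, "medium"),
    Benefit("Reduced compliance audit preparation", 20_000, 26_125, 35_000, "high"),
    Benefit("Duplicate record reduction", 40_000, 80_000, 120_000, "medium"),
]


def weighted_moderate_total(benefits: list[Benefit]) -> float:
    """Sum of moderate estimates, each discounted by its confidence weight."""
    return sum(b.moderate * CONFIDENCE_WEIGHTS[b.confidence] for b in benefits)


def scenario_totals(benefits: list[Benefit]) -> dict[str, float]:
    """Unweighted totals for the three scenarios shown to leadership."""
    return {
        "conservative": sum(b.conservative for b in benefits),
        "moderate": sum(b.moderate for b in benefits),
        "optimistic": sum(b.optimistic for b in benefits),
    }


primary = weighted_moderate_total(register)
totals = scenario_totals(register)
print(f"Confidence-weighted moderate total: ${primary:,.0f}")
print({name: f"${value:,.0f}" for name, value in totals.items()})
```

Keeping the weights in one dictionary makes the discount policy explicit and easy to defend when someone asks how the primary number was derived.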

Accounting for Costs Avoided

This is the counterfactual value, the cost of bad outcomes that didn’t happen because the consulting engagement prevented them.

Why This Matters

Some of the highest-value outcomes of data integration consulting are preventive. Excluding them from the ROI calculation systematically undervalues the investment.

How to Estimate

Avoided cost = Cost of the negative outcome × (Probability of occurrence without consulting − Probability of occurrence with consulting)

Example 1: Migration failure avoided

Your organization was planning a cloud migration. Without consulting, the estimated probability of a significant stall or failure (based on industry data and your own history) was 40%.

- Estimated cost of a materially failed or stalled migration, including rework, extended timeline, and productivity loss: $600,000 (an example figure; base yours on internal or industry benchmarks)

- Probability without consulting: 40%

- Probability with consulting: 5%

- Avoided cost: $600,000 × (40% − 5%) = $210,000

Example 2: Compliance violation prevented

Pre-engagement, the compliance team estimated a 15% probability of a data-related regulatory finding in the next audit cycle.

- Estimated remediation cost of a significant finding: $150,000

- Probability without consulting: 15%

- Probability with consulting: 2%

- Avoided cost: $150,000 × (15% − 2%) = $19,500

Example 3: Third integration project failure prevented

The organization had two prior failed integration attempts. Without consulting, the estimated probability of a third failure was high, say 60% based on the pattern.

- Estimated cost of another failure (wasted budget, delayed initiatives, team attrition): $400,000

- Probability without consulting: 60%

- Probability with consulting: 10%

- Avoided cost: $400,000 × (60% − 10%) = $200,000
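The three examples follow the same pattern, which a short Python sketch makes explicit. The function name and dictionary labels are illustrative assumptions; the inputs are the example figures above.

```python
def avoided_cost(outcome_cost: float, p_without: float, p_with: float) -> float:
    """Probability-adjusted cost avoided by lowering the chance of a bad outcome."""
    return outcome_cost * (p_without - p_with)


# (cost of outcome, probability without consulting, probability with consulting)
examples = {
    "Migration failure": (600_000, 0.40, 0.05),
    "Compliance violation": (150_000, 0.15, 0.02),
    "Third integration failure": (400_000, 0.60, 0.10),
}

# Round to whole dollars to hide floating point noise in the probabilities
results = {name: round(avoided_cost(*inputs)) for name, inputs in examples.items()}
for name, value in results.items():
    print(f"{name}: ${value:,} avoided (probability-adjusted)")
```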

Where to Get the Estimates

- Industry benchmarks, Gartner, IBM, Forrester publish failure rates, breach costs, and compliance penalty data

- Internal history, your own track record of past integration attempts, audit findings, and project failures

- Expert judgment, your compliance team, your security team, and your engineering leads can estimate probabilities based on their domain knowledge

- Consulting team input, experienced consultants have seen enough projects to provide calibrated probability estimates

The Credibility Rule

Always present avoided costs separately from directly measured benefits, and clearly label them as probability-adjusted estimates.

Don’t mix them into the same total without labeling them. Leadership should see a clear distinction between “value we measured” and “value we estimate was prevented.” Both are real. Both are important. But they carry different confidence levels and should be presented accordingly.

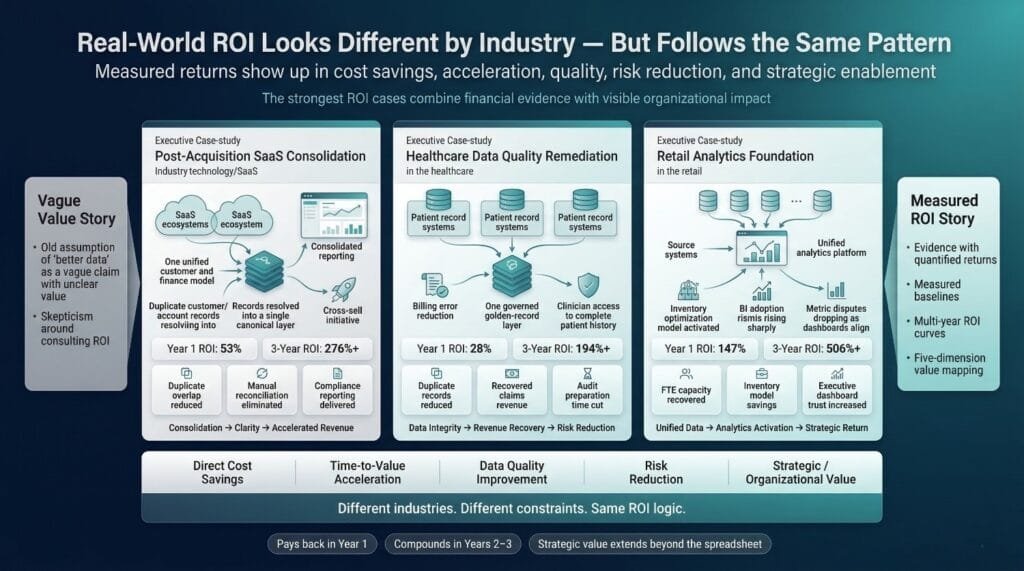

Time-Adjusted ROI

Why Year 1 Understates the Real Return

Most organizations calculate ROI based on Year 1 benefits vs. total engagement cost. This can understate the return when integration benefits persist and compound over multiple years.

Year 1 captures the immediate wins, manual work reduction, tool consolidation, initial quality improvement.

Year 2 captures the secondary effects, analytics initiatives delivering full-year results, governance preventing new quality issues, architecture supporting new sources without rework.

Year 3 captures the compounding value, AI/ML models trained on clean data producing measurable business impact, the architecture supporting 2–3x growth, organizational culture fully shifted toward data-driven decision-making.

The 3-Year ROI Horizon

For strategic data integration consulting investments, a three-year horizon is often a reasonable planning frame, subject to the organization’s budgeting model and strategy cycle:

Year 1:

- Direct cost savings begin (but may not reach full run-rate until mid-year)

- Time-to-value acceleration captured

- Initial quality improvements measured

- Consulting costs fully incurred

Year 2:

- Cost savings at full annual run-rate

- Dependent initiatives delivering measurable returns

- Quality improvements compounding (fewer errors means less rework means more capacity for new work)

- Risk reduction benefits accumulating

- No additional consulting costs (unless ongoing advisory was engaged)

Year 3:

- All annual savings continue

- New initiatives enabled by the architecture deliver additional returns

- Scalability benefits realized as growth occurs without re-architecture

- Organizational capability fully transferred, team operating independently

- Strategic value (trust, adoption, decision speed) at full maturity

Discounted Cash Flow for Long-Term Benefits

For a rigorous financial presentation, apply a discount rate to future benefits to account for the time value of money:

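Present Value = Future Benefit ÷ (1 + discount rate)^n, where n is the number of years after Year 1 (Year 1 benefits are treated as the present and are not discounted)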

Using an illustrative discount rate such as 10 percent, consistent with the organization’s weighted average cost of capital:

- Year 1 benefit of $530K → Present value: $530K

- Year 2 benefit of $480K → Present value: $480K ÷ 1.10 = $436K

- Year 3 benefit of $520K → Present value: $520K ÷ 1.21 = $430K

This approach is especially useful when presenting to a CFO or finance committee that evaluates all investments on a net present value basis.
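The discounting can be reproduced with a short Python sketch. It treats Year 1 as undiscounted, matching the present values listed above; the function and variable names are illustrative assumptions, and the 10 percent rate is the same illustrative rate used in the example.

```python
def present_value(benefit: float, discount_rate: float, years_from_now: int) -> float:
    """Discount a future benefit back to today's value."""
    return benefit / (1 + discount_rate) ** years_from_now


benefits_by_year = {1: 530_000, 2: 480_000, 3: 520_000}
discount_rate = 0.10  # illustrative; use your organization's own rate

# Year 1 is treated as the present, so it is discounted by zero years
pv_by_year = {
    year: present_value(benefit, discount_rate, year - 1)
    for year, benefit in benefits_by_year.items()
}
total_pv = sum(pv_by_year.values())

for year, pv in pv_by_year.items():
    print(f"Year {year}: ${pv / 1000:,.0f}K")
print(f"Total present value: ${total_pv / 1000:,.0f}K")
```

Some finance teams discount Year 1 as well; if yours does, pass `year` instead of `year - 1` and state that convention in the presentation.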

Sample ROI Calculation Walkthrough

Let’s put the entire framework together with a realistic scenario.

The Scenario

A mid-sized B2B company (300 employees, $60M revenue) engages data integration consulting to unify customer and financial data across Salesforce, NetSuite, a product database, and HubSpot. The goal is a governed customer 360 and reliable financial reporting.

Total Investment

- Consulting engagement (16 weeks): $300,000

- Internal team time (800 hours at $90/hour): $72,000

- New data quality monitoring tool: $24,000/year

- Training and change management: $18,000

- Total Year 1 investment: $414,000

Year 1 Benefits

Dimension 1, Direct Cost Savings:

- Manual data preparation reduction equivalent to approximately 2.5 FTE capacity reallocated to higher value work, estimated at $95,000 based on fully loaded cost assumptions

- Retired legacy ETL tool and middleware: $55,000

- Reduced pipeline maintenance (rework down 70%): $38,000

- Subtotal: $188,000

Dimension 2, Time-to-Value Acceleration:

- Customer analytics platform launched 4 months early, generating $50K/month in identified cross-sell revenue: $200,000

- New source onboarding reduced from 6 weeks to 5 days, enabling two additional integrations in Year 1 that would have slipped to Year 2: value is counted under the cost savings and data quality dimensions rather than here, to avoid double counting

- Subtotal: $200,000

Dimension 3, Data Quality Improvement:

- Duplicate customer records reduced from 32% to 2.5%, improving campaign targeting and reducing customer service errors: $65,000

- Data disputes between departments dropped 85%, recovered leadership time and reduced friction: $15,000

- Subtotal: $80,000

Dimension 4, Risk Reduction:

- Compliance audit preparation reduced by 260 hours: $24,700

- Estimated avoided compliance finding (probability-weighted): $19,500

- Improved pipeline uptime (data availability SLA from 91% to 99.5%): $55,000

- Subtotal: $99,200

Dimension 5, Strategic and Organizational Value:

- Stakeholder data trust score improved from 2.1 to 3.8 (out of 5.0)

- BI platform daily active users increased 140%

- Executive decision meetings now reference dashboards in 85% of sessions (up from 20%)

- One senior data engineer retention saved (was actively interviewing due to frustration with broken infrastructure)

- Subtotal: Documented through surveys and adoption metrics, not included in financial calculation

Year 1 ROI Calculation

Financially quantified benefits: $188,000 + $200,000 + $80,000 + $99,200 = $567,200

Total investment: $414,000

Year 1 ROI = ($567,200 − $414,000) ÷ $414,000 × 100% = 37%

A 37% return in Year 1, with the strategic dimension not even included in the financial calculation.
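For anyone who wants to rerun the numbers, the Year 1 calculation can be sketched in Python from the line items in the scenario. The dictionary keys are illustrative labels; the amounts are taken from the sections above.

```python
investment = {
    "consulting_engagement": 300_000,
    "internal_team_time": 800 * 90,  # hours x hourly rate
    "quality_monitoring_tool": 24_000,
    "training_and_change_mgmt": 18_000,
}

benefits = {
    "direct_cost_savings": 95_000 + 55_000 + 38_000,
    "time_to_value": 4 * 50_000,  # 4 months early at $50K/month
    "data_quality": 65_000 + 15_000,
    "risk_reduction": 24_700 + 19_500 + 55_000,
    # Dimension 5 is tracked through surveys and adoption metrics,
    # so it is deliberately left out of the financial total
}

total_investment = sum(investment.values())
total_benefits = sum(benefits.values())
year_1_roi = (total_benefits - total_investment) / total_investment * 100

print(f"Investment: ${total_investment:,}")
print(f"Benefits:   ${total_benefits:,}")
print(f"Year 1 ROI: {year_1_roi:.0f}%")
```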

Year 3 Cumulative View

Year 2 benefits (conservative, assuming no new initiatives, just continuing returns):

- Annual cost savings continue: $188,000

- Analytics platform full-year impact: $600,000

- Quality and risk benefits continue: $179,200

- Year 2 benefits: $967,200 (the $24,000 Year 2 tool cost is counted under total investment below, not netted out here)

Year 3 benefits (conservative, adding one new initiative enabled by the architecture):

- Continuing annual benefits: $967,200

- New AI-driven customer retention initiative (enabled by clean, unified data): $150,000

- Architecture supports 40% data volume growth without additional infrastructure: $45,000 in avoided scaling costs

- Year 3 benefits: $1,162,200

3-Year Cumulative:

- Total benefits: $567,200 + $967,200 + $1,162,200 = $2,696,600

- Total investment (Year 1 investment, which already includes the first year of the tool, plus $24,000/year in tool costs for Years 2 and 3): $414,000 + $48,000 = $462,000

3-Year ROI = ($2,696,600 − $462,000) ÷ $462,000 × 100% = 484%

What This Tells Leadership

- Year 1 ROI of 37%, the engagement paid for itself and then some in the first year alone

- 3-year ROI of 484%, the compounding value of a sound data foundation dramatically outpaces the initial investment

- And the strategic dimension, trust, adoption, decision speed, talent retention, isn’t even included in these numbers

This is why data integration consulting, when well executed and rigorously measured, is often a high-return investment within the data portfolio.

When to Measure

Knowing what to measure is half the challenge. Knowing when to measure is the other half.

Measure too early and you’ll miss the compounding value. Measure too late and you’ll lose the baseline. Measure only once and you’ll capture a snapshot instead of a trajectory.

ROI measurement isn’t a single event. It’s a rhythm, timed to capture different types of value as they materialize.

Pre-Engagement (Baseline)

When: 2–4 weeks before the consulting engagement begins

Purpose: Establish the “before picture” that every future measurement compares against.

This is the most important measurement window, and the one most organizations skip. Everything that follows depends on having an honest, documented starting point.

What to Capture

Quantitative baselines across all five dimensions:

- Hours per week spent on manual data preparation and reconciliation

- Average time to generate key reports (monthly close, board deck, customer analytics)

- Pipeline failure rate per month

- Duplicate record rate across key entities

- Data error rate per 10,000 records

- Infrastructure spend, compute, storage, tooling

- Engineering time split between maintenance and new development

- Average time to onboard a new data source

- Compliance audit preparation hours

- Number of data-related audit findings from the most recent cycle

Qualitative baselines:

- Stakeholder data trust survey, a simple 1–5 scale distributed to 15–20 key decision-makers

- Data dispute frequency, how often do teams escalate conflicting numbers

- Decision-making patterns, are leaders referencing data or relying on instinct

- Data team satisfaction, brief pulse survey on infrastructure frustration and work quality

Who Owns This

The data integration consulting team should help capture baselines as part of their discovery phase. But the internal data team should own the baseline measurements, because they’ll need to run the same measurements at every future checkpoint.

The Non-Negotiable

If a metric isn’t baselined before the engagement starts, it is difficult to use it credibly in the ROI calculation afterward.

Document everything. Store it somewhere accessible. You’ll reference it repeatedly over the next 12–36 months.
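One lightweight way to make baselines durable is to store each metric together with the method used to measure it, so later checkpoints can repeat the measurement exactly. The sketch below is only one possible shape: the class, function, and file names are assumptions, the values reuse examples from earlier sections, and the dates and method descriptions are placeholders.

```python
import json
import tempfile
from dataclasses import asdict, dataclass
from pathlib import Path


@dataclass
class BaselineMetric:
    name: str
    value: float
    unit: str
    method: str       # how it was measured, so later checkpoints can repeat it
    captured_on: str  # ISO date of the measurement


def save_baselines(metrics: list[BaselineMetric], path: Path) -> None:
    """Write the baseline snapshot as human-readable JSON."""
    path.write_text(json.dumps([asdict(m) for m in metrics], indent=2), encoding="utf-8")


def load_baselines(path: Path) -> dict[str, BaselineMetric]:
    """Read the snapshot back, keyed by metric name."""
    rows = json.loads(path.read_text(encoding="utf-8"))
    return {row["name"]: BaselineMetric(**row) for row in rows}


# Placeholder dates and method text; values reuse earlier examples
baselines = [
    BaselineMetric("errors_per_10k_records", 847, "errors", "monthly profiling report", "2025-01-15"),
    BaselineMetric("audit_prep_hours", 320, "hours per cycle", "time tracking", "2025-01-15"),
]

path = Path(tempfile.gettempdir()) / "integration_baselines.json"
save_baselines(baselines, path)

loaded = load_baselines(path)
current_errors = 23  # re-measured with the same method at a later checkpoint
delta = current_errors - loaded["errors_per_10k_records"].value
print(f"Change vs. baseline: {delta} errors per 10,000 records")
```

In practice the file would live in a shared repository or wiki rather than a temporary directory; the point is that the method travels with the number.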

During the Engagement (Leading Indicators)

When: Continuously throughout the engagement, reviewed at weekly and monthly checkpoints

Purpose: Track early signals that predict long-term ROI, and identify course corrections before they become expensive.

What to Track

Early wins that demonstrate momentum:

- First manual process automated, and the hours it’s already saving

- First data quality improvement measured, duplicates resolved, error rates dropping

- First pipeline migrated from manual/legacy to the new architecture

- First stakeholder reaction to improved data, the moment someone says “these numbers actually match”

Engagement health indicators:

- Are milestones being hit on schedule?

- Are stakeholders showing up to workshops and reviews, or disengaging?

- Are internal team members actively participating, or passively watching?

- Are blockers being resolved quickly, or accumulating?

- Is scope stable, or creeping without formal change management?

Leading indicators of long-term ROI:

- Stakeholder engagement levels during the engagement predict adoption after it. If leadership isn’t engaged during design, they won’t trust the output.

- Speed of cross-departmental agreement on shared definitions predicts governance adoption. If teams are aligning quickly, governance will stick. If every definition is a battle, post-engagement sustainability is at risk.

- Reduction in data-related escalations during the engagement signals that quality and trust are already improving.

- Internal team confidence, are your engineers feeling capable of maintaining what’s being built, or overwhelmed by its complexity?

Why This Matters for ROI

Leading indicators may not appear directly in the final ROI calculation. But they tell you whether the ROI you’re expecting is likely to materialize, or whether intervention is needed now to protect the investment.

A data integration consulting engagement that’s hitting milestones, engaging stakeholders, and producing early wins is more likely to deliver strong ROI, assuming adoption continues. One that’s missing deadlines, losing stakeholder attention, and producing no visible improvements needs immediate course correction, before the ROI window closes.

Immediately Post-Engagement (30–90 Days)

When: Starting the day the consulting engagement formally ends, measured at 30, 60, and 90 days.

Purpose: Capture the direct, tangible outcomes that are immediately measurable, and validate that what was built is actually being used.

What to Measure

Direct efficiency gains:

- Hours saved per week on manual data tasks, re-measure using the same methodology as the baseline

- Pipeline failure rate, is it down? By how much?

- Report generation time, is it faster? Measure the same reports baselined pre-engagement

- Rework hours, how much time is the team still spending on error correction?

Quality improvements:

- Run the same data profiling reports used in the baseline, duplicates, error rates, completeness

- Compare current quality scores against pre-engagement baselines

- Document any quality issues that surfaced post-launch and how they were resolved

Governance and process adoption:

- Are data ownership roles active, are stewards actually performing stewardship?

- Are golden record rules being followed, or being bypassed?

- Is documentation being maintained, or already stale?

- Is the governance framework operational, or already gathering dust?

Stakeholder sentiment:

- Re-run the data trust survey, same questions, same audience as the baseline

- Capture specific feedback on what’s improved and what hasn’t

- Document any departments or users still relying on old processes, this signals adoption gaps that need addressing

The Critical Check

The 30 to 90 day window is often where you discover whether the engagement delivered sustainable value or only short-term improvement. If governance is already slipping, adoption is low, or the internal team is struggling to maintain what was built, the long-term ROI is at risk.

Address issues in this window, as they are typically less costly to correct early than after patterns solidify.

Medium-Term (6–12 Months)

When: Formal measurement at 6 months and 12 months post-engagement.

Purpose: Capture the downstream business impacts that take time to materialize, and confirm that the integration foundation is delivering on its strategic promise.

What to Measure

Business impact of integrated data:

- Are analytics initiatives that were blocked now producing results? What results?

- Has reporting accuracy and consistency improved in ways stakeholders notice and value?

- Are cross-sell, upsell, or personalization initiatives leveraging the unified data? With what measurable impact?

- Has forecast accuracy improved? By how much?

Operational maturity:

- Is the team onboarding new data sources faster than before? Measure average onboarding time.

- Are pipeline failures continuing to decline, or have they plateaued or increased?

- Is the architecture handling growth without strain, or are performance issues emerging?

- Has maintenance burden decreased, is the engineering team spending more time on new capabilities vs. firefighting?

Quality trends:

- Run data profiling again, are quality scores holding steady, improving, or degrading?

- Are data disputes still rare, or creeping back?

- Is the governance framework still active, or has it atrophied?

Compliance and risk:

- If an audit has occurred in this window, what were the results compared to pre-engagement?

- Has audit preparation time remained reduced?

- Are lineage and access controls still being maintained?

Financial tracking:

- Sum up actual cost savings realized to date, compare against the benefits register projections

- Track tool and infrastructure spend, has it decreased as projected?

- Calculate Year 1 ROI using actual measured data, not estimates

Why This Window Matters Most

The 6–12 month window is where the medium-confidence benefits in your register either materialize or don’t. It’s where time-to-value acceleration shows its full impact. And it’s where you get the first credible data point on whether the 3-year ROI projection is tracking.

This is the measurement that justifies, or challenges, the next phase of integration investment.

Long-Term (12–36 Months)

When: Annual measurement at 12, 24, and 36 months post-engagement

Purpose: Capture the compounding strategic value, calculate cumulative ROI, and assess whether the integration foundation is delivering lasting organizational change.

What to Measure

Scalability realized:

- How many new data sources have been added since the engagement? How long did each take?

- Has the architecture supported business growth (new products, new geographies, acquisitions) without requiring redesign?

- What new initiatives has the integration foundation enabled that weren’t part of the original scope?

Strategic enablement:

- Are AI/ML initiatives running on the integrated data? What business value are they producing?

- Has the organization launched data products (internal or external) that depend on the integration architecture?

- Has the data infrastructure become a competitive advantage, or is it still just keeping the lights on?

Organizational data maturity:

- Stakeholder trust survey: is trust continuing to improve, holding steady, or declining?

- BI adoption: are more teams and users engaging with integrated data over time?

- Decision-making culture: has the organization genuinely shifted from gut-driven to data-driven?

- Data literacy: do business users understand where data comes from and what it means?

Team capability:

- Is the internal team operating independently, handling integration tasks that previously required consulting?

- Has the team grown in skill and confidence, or are they still dependent on external support?

- Are runbooks and documentation still being maintained and used?

Cumulative financial ROI:

- Sum all measured benefits across all five dimensions for each year

- Calculate the 3-year cumulative ROI using actual data

- Compare against the original projections: where did reality exceed or fall short of estimates?
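As a rough sketch of the cumulative calculation, the snippet below accumulates measured benefits year by year and reports ROI at each annual checkpoint. The function name and the yearly figures are hypothetical, and it assumes all costs are incurred up front; if your engagement carries ongoing costs, fold them into the cost total.

```python
def cumulative_roi(total_cost: float, yearly_benefits: list[float]) -> list[float]:
    """Return cumulative ROI (%) at the end of each year.

    Assumes all costs are incurred up front (a simplification).
    """
    results = []
    running_benefit = 0.0
    for benefit in yearly_benefits:
        running_benefit += benefit
        results.append((running_benefit - total_cost) / total_cost * 100)
    return results


# Hypothetical measured benefits for years 1-3; use your own actuals.
cost = 400_000
yearly = [500_000, 800_000, 1_000_000]

for year, roi in enumerate(cumulative_roi(cost, yearly), start=1):
    print(f"Year {year}: cumulative ROI {roi:.0f}%")
```

Running the same function on the original projections gives a second series to compare against, year by year.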

Industry benchmarking:

- How does your data maturity compare to industry peers?

- Are your integration costs, quality scores, and time-to-insight metrics competitive?

- Frameworks such as CMMI Data Management Maturity and other industry data maturity models can provide structured comparison points

The Ultimate Test

At the 36-month mark, ask one question:

“Is our data infrastructure enabling the business to move faster, decide better, and operate more efficiently than it could 3 years ago, and can we prove it?”

If the answer is yes, backed by measured data across all five dimensions, the data integration consulting investment has likely delivered its full return. If the answer is mixed, the measurement data tells you exactly where the gaps are and what to address next.

The Complete ROI Measurement Timeline

Pre-engagement: Baseline everything. No exceptions.

During engagement: Track leading indicators weekly. Course-correct early.

30–90 days post: Measure direct outcomes. Validate adoption. Fix gaps quickly.

6–12 months post: Capture business impact. Calculate Year 1 ROI with real data.

12–36 months post: Measure compounding value. Calculate cumulative ROI. Assess lasting organizational change.

Each window builds on the previous one. Skip any of them and the ROI picture is incomplete. Follow all of them and you’ll have the most comprehensive, defensible integration ROI measurement in your organization’s history.

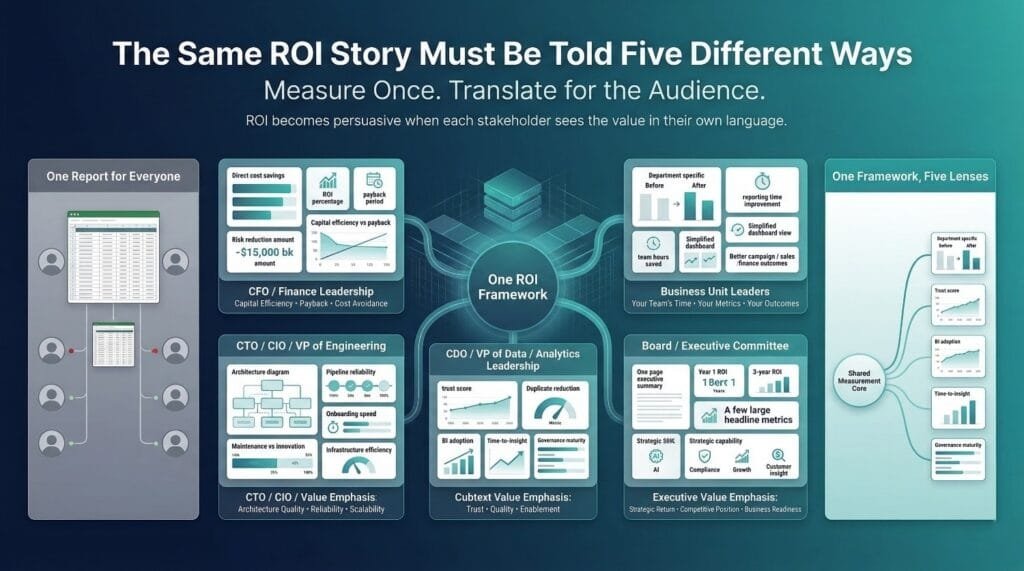

How to Communicate ROI to Different Stakeholders

You’ve measured the ROI. Now you have to communicate it, and the same numbers, presented the same way, will resonate with one audience and fall flat with another.

A CFO wants payback periods. A CTO wants architectural metrics. A business unit leader wants to know why their reports are faster. The board wants a paragraph, not a spreadsheet.

Presenting the right value to the right audience in the right language is as important as measuring it in the first place. A technically rigorous ROI analysis that is not understood by stakeholders delivers limited value.

To the CFO / Finance Leadership

What They Care About

The CFO’s question is always the same: “Was this a good use of capital, and should we invest more?”

They don’t care about data quality scores. They don’t care about pipeline reliability. They care about money: saved, avoided, earned, or accelerated.

How to Present

Lead with the financial summary. Don’t build up to it. Start with it.

“The $414K integration investment delivered $567K in measurable financial returns in Year 1, a 37% ROI. The three-year projected return is $2.67M, representing a 479% cumulative ROI based on stated assumptions.”

Then break it down by category:

- Direct cost savings: $188K/year in labor, tools, and infrastructure

- Revenue acceleration: $200K from launching the analytics initiative 4 months early

- Quality-driven savings: $80K/year in reduced rework and customer-facing errors

- Risk reduction: $99K in avoided compliance costs and improved uptime

Frame in terms they use every day:

- Payback period: how many months until the investment was fully recovered

- Cost of inaction: what the next 3 years would cost if the integration problems continued unchecked

- Comparison to alternatives: the consulting engagement vs. the cost of a failed internal attempt (using your own history or industry benchmarks)
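To make the payback framing concrete, here is a small worked calculation using the example figures quoted above ($414K invested, four Year 1 benefit categories). It assumes benefits accrue evenly across the year, which is a simplification: the $200K revenue acceleration is closer to a one-time gain, so a real payback date would depend on when each benefit actually landed.

```python
# Example figures from the summary above.
investment = 414_000
year_one_benefits = {
    "Direct cost savings": 188_000,
    "Revenue acceleration": 200_000,
    "Quality-driven savings": 80_000,
    "Risk reduction": 99_000,
}

total = sum(year_one_benefits.values())

# Net return relative to the amount invested.
roi_pct = (total - investment) / investment * 100

# Assumption: benefits arrive in equal monthly amounts.
monthly_benefit = total / 12
payback_months = investment / monthly_benefit

print(f"Year 1 benefits: ${total:,.0f}")
print(f"Year 1 ROI: {roi_pct:.0f}%")
print(f"Payback period: {payback_months:.1f} months")
```

Under the even-accrual assumption, the categories sum to the $567K headline figure and the investment is recovered within the first year.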

Use the language of investment rather than technical implementation details. Don’t mention canonical data models, entity resolution, or governance frameworks. Instead, talk about capital efficiency, cost avoidance, accelerated revenue capture, and risk mitigation.

What to Avoid

Don’t present intangible benefits as financial returns. The CFO will discount your entire analysis if you try to put a dollar sign on “improved data trust.” Keep the financial section rigorous and present strategic value separately as supporting context.

To the CTO / CIO / VP of Engineering

What They Care About

The technical leadership question: “Did this make our architecture better and our team more capable?”

They’ve lived with the technical debt, the fragile pipelines, and the late-night pages. They want to know the integration consulting delivered a foundation that’s sound, scalable, and maintainable, not just functional today.

How to Present

Lead with architectural improvement:

“We went from 47 point-to-point connections with no documentation to a hub-and-spoke architecture with full lineage tracking, automated monitoring, and documented runbooks for every pipeline.”

Then show the operational metrics:

- Pipeline failure rate: from 11/month to under 2/month

- Mean time to recovery: from 4.5 hours to 22 minutes

- New source onboarding: from 6–8 weeks to 3–5 days

- Engineering time on maintenance vs. new development: from 70/30 to 25/75

- Infrastructure cost per GB processed: 40% reduction through architecture optimization

Highlight capability and sustainability:

- Technical debt quantified and addressed, not just patched

- Team can now handle integration tasks that previously required external support

- Architecture has been validated through testing and capacity planning to support projected growth multiples without major redesign

- Documentation and runbooks are complete and actively maintained

Address what matters to them personally:

- Their team is no longer firefighting; they’re building

- On-call burden has decreased measurably

- The architecture reflects industry best practices and is defensible in technical design reviews

- New hires can onboard to the integration layer in days, not months

What to Avoid

Don’t over-index on financial ROI with this audience. They understand the cost justification, but what they really want to know is whether the technical foundation is solid. If the architecture is right, they’ll help you make the financial case to everyone else.

To the CDO / VP of Data / Analytics Leadership

What They Care About

The data leadership question: “Can we now trust the data sufficiently to build advanced analytics and AI capabilities on top of it with predictable outcomes?”

They’ve been trying to deliver analytics, build models, and create data products, but the foundation kept undermining them. They want to know the foundation is finally trustworthy.

How to Present

Lead with data quality and trust:

“Duplicate customer records dropped from 34% to under 3%. The stakeholder data trust score improved from 2.1 to 3.8 out of 5. For the first time, all three departments report the same customer count.”

Then show the analytics enablement:

- BI platform daily active users: up 140%

- Time from data request to delivered insight: from 3–5 days to under 4 hours

- Analytics initiatives unblocked: customer 360, churn prediction, personalization engine

- AI/ML team now spends 30% of time on data prep, down from 80%

Show governance maturity:

- Shared business glossary published with agreed-upon definitions for all key metrics

- Data ownership model operational with active stewards in every domain

- Lineage tracking covering source through transformation to consumption

- Data quality monitoring automated with alerting and escalation procedures

Connect to their roadmap:

- What can they now build that they couldn’t before?

- What initiatives move from “blocked by data” to “ready to start”?

- How does the foundation support their 12–18 month analytics strategy?

What to Avoid

Don’t present this as a one-time achievement. Data leaders know that quality and governance degrade without ongoing attention. Show them the measurement rhythm and the sustainability plan, not just the current snapshot.

To Business Unit Leaders

What They Care About

The business leader’s question: “How does this help my team do our jobs better?”

They don’t care about integration architecture. They care about their reports, their customers, their numbers, and their time.

How to Present

Lead with their specific pain point, resolved:

For the VP of Sales:

“Your team was spending 6 hours per week manually reconciling pipeline data between Salesforce and the forecasting tool. That reconciliation is now automated. And the customer data powering your territory assignments is accurate for the first time, with no more misattributed accounts.”

For the CFO’s finance team:

“Monthly close went from 14 days to 5. The revenue numbers in the dashboard now match the numbers in NetSuite, exactly. Your team recovered 320 hours per audit cycle in preparation time.”

For the VP of Marketing:

“You now have a unified customer profile across CRM, e-commerce, and engagement data. Campaign targeting accuracy improved by 23%. The cross-sell model your team requested is live and producing results.”

Use before-and-after comparisons on metrics they already track. Don’t introduce new metrics; show improvement on the ones they already care about.

Show time recovered. Every business leader understands the value of their team getting hours back. “Your analysts spend 18% of their time on data prep instead of 62%” is a powerful statement to someone managing a team budget.
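Percentages land harder when converted into hours. The sketch below turns the data prep example above (62% of time before, 18% after) into hours recovered per week. The function name, the 40-hour week, and the team of five analysts are illustrative assumptions, not figures from any engagement.

```python
def hours_recovered_per_week(
    before_share: float,
    after_share: float,
    team_size: int,
    hours_per_week: float = 40.0,  # assumed standard work week
) -> float:
    """Convert a drop in time share into team hours recovered per week."""
    return (before_share - after_share) * hours_per_week * team_size


# 62% -> 18% of time on data prep, for a hypothetical team of 5 analysts.
hours = hours_recovered_per_week(0.62, 0.18, team_size=5)
print(f"{hours:.0f} hours per week recovered across the team")
```

Multiplying the result by a loaded hourly rate gives a dollar figure, but for a business unit leader the hours themselves are often the more persuasive number.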

What to Avoid

Don’t present the technical framework. Don’t mention data modeling, governance, or architecture. Translate everything into their language, their metrics, their outcomes. If you lead with technical terminology such as “pipeline” in a presentation to a business unit leader, you risk losing their attention.

To the Board / Executive Committee

What They Care About

The board’s question: “Is this company making smart investments in its data capabilities, and are we positioned competitively?”

They want the highest-altitude view: investment, return, strategic positioning, and risk management, in the fewest possible words.

How to Present

One paragraph. Then one page. Then supporting detail if asked.

The paragraph:

“We invested $414K in a data integration initiative to unify our customer and financial data across four core systems. The engagement delivered $567K in measurable Year 1 returns, a 37% ROI, with three-year projected returns of $2.67M based on stated assumptions. Beyond financial returns, the initiative eliminated conflicting reporting across departments, reduced compliance preparation time by 80%, and created the data foundation for our AI and personalization roadmap. The investment has been fully recovered and is now generating ongoing annual returns.”